I know that NumPy can use different backends like OpenBLAS or MKL. I have also read that MKL is heavily optimized for Intel, so people usually suggest using OpenBLAS on AMD, right?

I use the following test code:

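```python
import numpy as np

def testfunc(x):
    np.random.seed(x)                # fixed seed, so every run uses the same matrix
    X = np.random.randn(2000, 4000)  # 2000 x 4000 standard-normal matrix
    np.linalg.eigh(X @ X.T)          # eigendecomposition of the symmetric 2000 x 2000 matrix

# %timeit is an IPython/Jupyter magic
%timeit testfunc(0)
```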

I have tested this code using different CPUs:

- On an Intel Xeon E5-1650 v3, this code runs in 0.7 s using 6 of 12 cores.

- On an AMD Ryzen 5 2600, this code runs in 1.45 s using all 12 cores.

- On an AMD Ryzen Threadripper 3970X, this code runs in 1.55 s using all 64 cores.

I am using the same Conda environment on all three systems. According to `np.show_config()`, the Intel system uses the MKL backend for NumPy (`libraries = ['mkl_rt', 'pthread']`), whereas the AMD systems use OpenBLAS (`libraries = ['openblas', 'openblas']`). The CPU core usage was determined by observing `top` in a Linux shell:

- For the Intel Xeon E5-1650 v3 CPU (6 physical cores), `top` shows 12 logical cores (6 idle).

- For the AMD Ryzen 5 2600 CPU (6 physical cores), it shows 12 logical cores (none idle).

- For the AMD Ryzen Threadripper 3970X CPU (32 physical cores), it shows 64 logical cores (none idle).
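The `top` readings above mix physical cores with their SMT (hyper-threading) siblings, and a common suggestion is to match the BLAS thread count to the physical core count. As a sketch for checking that count on Linux, the hypothetical helper below counts distinct `(physical id, core id)` pairs in `/proc/cpuinfo`, since SMT siblings share both values; `os.cpu_count()` returns the logical count instead. It assumes the x86 `/proc/cpuinfo` layout and returns 0 where those fields are missing (for example on some VMs or non-x86 systems).

```python
def physical_core_count(cpuinfo_text):
    """Count distinct (physical id, core id) pairs in /proc/cpuinfo-style text.

    Each logical CPU ("processor" block) reports its socket ("physical id")
    and its core within that socket ("core id"). SMT siblings share both
    values, so the number of unique pairs is the number of physical cores.
    """
    cores = set()
    physical_id = None
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        key = key.strip()
        if key == "physical id":
            physical_id = value.strip()
        elif key == "core id":
            cores.add((physical_id, value.strip()))
    return len(cores)
```

On Linux, `physical_core_count(open("/proc/cpuinfo").read())` should report 6 on the Xeon E5-1650 v3 and 32 on the Threadripper 3970X, versus 12 and 64 from `os.cpu_count()`.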

The above observations give rise to the following questions:

- Is it normal that linear algebra on up-to-date AMD CPUs using OpenBLAS is that much slower than on a six-year-old Intel Xeon? (also addressed in Update 3)

- Judging by the CPU load, NumPy appears to use multiple cores in all three cases. How can the Threadripper be even slower than the Ryzen 5, even though it has more than five times as many physical cores? (also see Update 3)

- Is there anything that can be done to speed up the computations on the Threadripper? (partially answered in Update 2)

Update 1: The OpenBLAS version is 0.3.6. I read somewhere that upgrading to a newer version might help; however, with OpenBLAS updated to 0.3.10, the run time of testfunc is still 1.55 s on the AMD Ryzen Threadripper 3970X.
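To double-check which BLAS library a given environment actually uses (the question reads this from `np.show_config()`, and recent NumPy releases may also list the library version there), the printed output can be captured and searched. The helper below is a rough, hypothetical sketch (`blas_backend_name` is a name chosen here, not a NumPy API); it only inspects human-readable text whose format differs between NumPy versions, so it may misreport on unusual builds.

```python
import contextlib
import io

import numpy as np


def blas_backend_name():
    """Heuristically guess which BLAS library NumPy was built against.

    Parses the text printed by np.show_config(); not an official API.
    """
    buf = io.StringIO()
    with contextlib.redirect_stdout(buf):
        np.show_config()
    # Only look at lines that name actual libraries, because older NumPy
    # versions also print sections such as "blas_mkl_info: NOT AVAILABLE"
    lines = [
        line.lower()
        for line in buf.getvalue().splitlines()
        if "libraries" in line or "name" in line
    ]
    for candidate in ("mkl", "openblas", "blis", "accelerate"):
        if any(candidate in line for line in lines):
            return candidate
    return "unknown"
```

For example, `print(blas_backend_name())` would be expected to print `mkl` on the Xeon setup and `openblas` on the AMD setups described above.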

Update 2: Using the MKL backend for NumPy in conjunction with the environment variable MKL_DEBUG_CPU_TYPE=5 (as described here) reduces the run time of testfunc on the AMD Ryzen Threadripper 3970X to only 0.52 s, which is more or less satisfying. FTR, setting this variable via ~/.profile did not work for me on Ubuntu 20.04, and setting it from within Jupyter did not work either, so I put it into ~/.bashrc, which works. Anyway, is running 35% faster than an old Intel Xeon all we get, or can we get more out of it?
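The "35% faster" figure follows from the two measured run times if "x% faster" is read as a throughput ratio, `t_Xeon / t_Threadripper - 1`. Read instead as a reduction in run time, the same two numbers give about 26%, so it helps to state which definition is meant. A tiny sketch (the function names are illustrative only):

```python
def percent_faster(t_reference, t_new):
    """Speed advantage of t_new over t_reference in percent: (t_reference / t_new - 1) * 100."""
    return (t_reference / t_new - 1.0) * 100.0


def percent_time_saved(t_reference, t_new):
    """Relative run-time reduction in percent: (1 - t_new / t_reference) * 100."""
    return (1.0 - t_new / t_reference) * 100.0


# Run times from the question: Xeon + MKL 0.7 s, Threadripper + MKL (MKL_DEBUG_CPU_TYPE=5) 0.52 s
print(f"{percent_faster(0.7, 0.52):.0f}% faster")          # prints "35% faster"
print(f"{percent_time_saved(0.7, 0.52):.0f}% less time")   # prints "26% less time"
```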

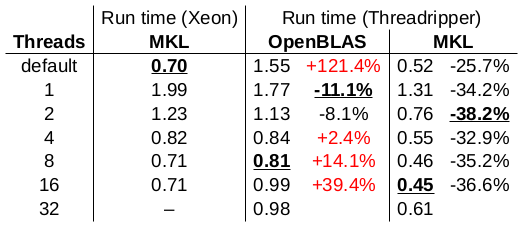

Update 3: I played around with the number of threads used by MKL/OpenBLAS:

The run times are reported in seconds. The best result of each column is underlined. I used OpenBLAS 0.3.6 for this test. The conclusions from this test:

- The single-core performance of the Threadripper using OpenBLAS is a bit better than that of the Xeon (11% faster); with MKL, its single-core performance is even better (34% faster).

- The multi-core performance of the Threadripper using OpenBLAS is ridiculously worse than the multi-core performance of the Xeon. What is going on here?

- Overall, the Threadripper performs better than the Xeon when MKL is used (26% to 38% faster than the Xeon). The best overall performance is achieved by the Threadripper using 16 threads and MKL (36% faster than the Xeon).
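A thread-count sweep like the one above can be scripted. BLAS libraries generally read their configuration variables when they are first loaded, which would presumably also explain why setting MKL_DEBUG_CPU_TYPE inside an already-running Jupyter kernel (Update 2) had no effect. The sketch below therefore starts a fresh interpreter for every setting and sets the variables before NumPy is imported. It assumes `MKL_NUM_THREADS`, `OPENBLAS_NUM_THREADS`, and `OMP_NUM_THREADS` are the relevant knobs for the installed backend; `time_with_threads` is a hypothetical helper name. Only the workload itself is timed, not interpreter start-up or `import numpy`.

```python
import os
import subprocess
import sys

# Child program: NumPy is imported only after the environment has been set by the parent
CHILD = """
import sys, timeit
import numpy as np

n, m = int(sys.argv[1]), int(sys.argv[2])

def testfunc(x):
    np.random.seed(x)
    X = np.random.randn(n, m)
    np.linalg.eigh(X @ X.T)

print(min(timeit.repeat(lambda: testfunc(0), repeat=3, number=1)))
"""


def time_with_threads(threads, n=2000, m=4000, extra_env=None):
    """Best-of-3 run time (seconds) of the benchmark in a fresh interpreter."""
    env = dict(os.environ)
    for var in ("OMP_NUM_THREADS", "MKL_NUM_THREADS", "OPENBLAS_NUM_THREADS"):
        env[var] = str(threads)
    env.update(extra_env or {})  # e.g. {"MKL_DEBUG_CPU_TYPE": "5"}
    result = subprocess.run(
        [sys.executable, "-c", CHILD, str(n), str(m)],
        env=env, capture_output=True, text=True, check=True,
    )
    return float(result.stdout.strip())
```

For example, `for t in (1, 2, 4, 8, 16, 32, 64): print(t, time_with_threads(t, extra_env={"MKL_DEBUG_CPU_TYPE": "5"}))` reproduces the shape of the table above on one machine.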

Update 4: Just to clarify: no, I do not think that (a) this or (b) that answers this question. (a) suggests that "OpenBLAS does nearly as well as MKL", which strongly contradicts the numbers I observed. According to my numbers, OpenBLAS performs ridiculously worse than MKL. The question is why. (a) and (b) both suggest using MKL_DEBUG_CPU_TYPE=5 in conjunction with MKL to achieve maximum performance. This might be right, but it explains neither why OpenBLAS is that dead slow nor why, even with MKL and MKL_DEBUG_CPU_TYPE=5, the 32-core Threadripper is only 36% faster than the six-year-old 6-core Xeon.

Comments:

- … `OPENBLAS_NUM_THREADS`, `MKL_NUM_THREADS`). Server processors have slower per-core speed, and multicore speedups in BLAS libraries are usually very appalling. – Ilse

- … `np.linalg.eigh(X @ X.T)`. Also set `MKL_NUM_THREADS` to the number of physical threads. These BLAS algorithms usually scale negatively with virtual cores. – Gradey

- … `MKL_DEBUG_CPU_TYPE=5` for your 32-core CPU (xxx_cpuinfo.txt) – Gradey

- … `grep MHz /proc/cpuinfo` or whatever). Ideally, run your program under `perf` on Linux: `perf stat my_benchmark` records hardware performance counters, including the `cycles` event, and calculates the average clock speed the CPU actually ran at over the benchmark interval (by dividing `cycles` by the `task-clock` kernel event). – Propertied

- … `perf stat` and not using Python's own `%timeit` instruction. This means that the `import numpy` instruction is now also measured, which leads to different results. This is why I decided to summarize them in a Google Sheet first. If you think that this experiment provides more important insights, I will replace the experiment and the results in my original question. Let me know! – Myers

- … `perf stat -p $PID` after initializing, so it attaches right as you're starting the benchmark. – Propertied

- … `MKL_DEBUG_CPU_TYPE` hack, the speed is OK. – Myers

- … Ryzen/python/numpy). Disclaimer: The question I linked to is my own question. – Lakesha

- … "OpenBLAS performs ridiculously worse than MKL. The question is ... why OpenBLAS is that dead slow" is, in my opinion, the same as (or very similar to) asking "why is an open-source software implementation slower than a closed-source software implementation?", which cannot be answered because the closed-source software is not available. – Lakesha

- … "32-core Threadripper is only 36% faster than the six-year-old 6-core Xeon" … how you profile and generate your measurements matters a lot when doing comparisons like this. If you post your benchmarking code, then someone could at least answer the question of "why is my benchmarking code slower given x, y, z" (for example, the Intel-Python benchmark code is open-sourced to show how/why it is so much faster). – Lakesha

- … `X = np.random.randn(2000, 4000)` most probably doesn't parallelise well. You have to use the internal NumPy benchmarks for a fair comparison (numpy.org/doc/stable/benchmarking.html). You also have to limit the number of cores, because your matrices or test conditions might be too weak (small) for the available resources (CPU core count, etc.), which actually slows things down instead of speeding them up. – Urquhart