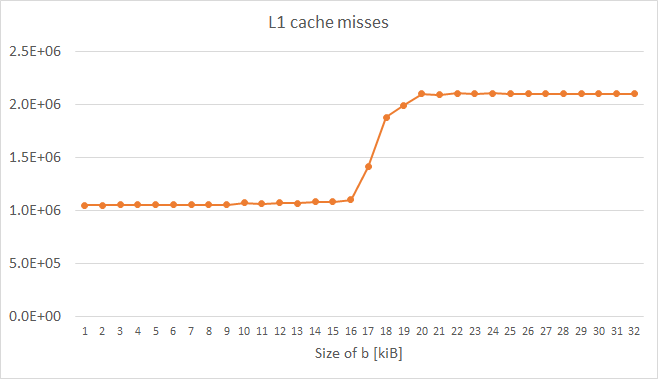

I am aware of multiple questions on this topic, however, I haven't seen any clear answers nor any benchmark measurements. I thus created a simple program that works with two arrays of integers. The first array a is very large (64 MB) and the second array b is small to fit into L1 cache. The program iterates over a and adds its elements to corresponding elements of b in a modular sense (when the end of b is reached, the program starts from its beginning again). The measured numbers of L1 cache misses for different sizes of b is as follows:

The measurements were made on a Xeon E5-2680 v3 (Haswell) CPU with a 32 kiB L1 data cache, so b fit into L1 cache in all cases. However, the number of misses grew considerably once the memory footprint of b reached around 16 kiB. This might be expected, since at that point the loads of both a and b cause eviction of cache lines from the beginning of b.
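The 16 kiB threshold is consistent with simple set arithmetic, which the following toy model tries to illustrate. It is only a sketch under stated assumptions, not a description of the real hardware: it assumes a 32 kiB, 8-way set-associative cache with 64-byte lines and true LRU replacement, set-aligned starting addresses for a and b, and no hardware prefetching. The names LruCache and simulate_misses are hypothetical. Accesses are modeled at cache-line granularity, since the second 32-byte load from the same line always hits.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Toy model (assumption): 32 KiB, 8 ways, 64-byte lines, true LRU replacement.
class LruCache {
public:
    static constexpr std::size_t kLineBytes = 64;
    static constexpr std::size_t kWays = 8;
    static constexpr std::size_t kSets = 32 * 1024 / (kLineBytes * kWays);  // 64 sets

    // Touches one cache line, identified by its line number; returns true on a miss.
    bool access(std::uint64_t line) {
        const std::size_t base = static_cast<std::size_t>(line % kSets) * kWays;
        std::size_t victim = base;
        ++clock_;
        for (std::size_t w = base; w < base + kWays; ++w) {
            if (tag_[w] == line) {  // hit: refresh the LRU timestamp
                stamp_[w] = clock_;
                return false;
            }
            if (stamp_[w] < stamp_[victim]) victim = w;  // remember the oldest way
        }
        tag_[victim] = line;  // miss: replace the least recently used way
        stamp_[victim] = clock_;
        return true;
    }

private:
    std::vector<std::uint64_t> tag_ = std::vector<std::uint64_t>(kSets * kWays, ~0ULL);
    std::vector<std::uint64_t> stamp_ = std::vector<std::uint64_t>(kSets * kWays, 0);
    std::uint64_t clock_ = 0;
};

// Counts line-granularity misses of the measured loop for a b array of b_kib KiB.
std::uint64_t simulate_misses(std::size_t b_kib, std::size_t a_bytes) {
    const std::uint64_t a_lines = a_bytes / LruCache::kLineBytes;
    const std::uint64_t b_lines = b_kib * 1024 / LruCache::kLineBytes;
    assert(b_lines > 0 && a_lines < (1ULL << 32));
    const std::uint64_t b_base = 1ULL << 32;  // first line of b: set-aligned, disjoint from a

    LruCache cache;
    for (std::uint64_t j = 0; j < b_lines; ++j) cache.access(b_base + j);  // warm-up of b

    std::uint64_t misses = 0;
    for (std::uint64_t i = 0; i < a_lines; ++i) {
        misses += cache.access(i) ? 1 : 0;                     // streaming line of a
        misses += cache.access(b_base + i % b_lines) ? 1 : 0;  // cyclic line of b
    }
    return misses;
}
```

Under these assumptions, `simulate_misses(16, 1 << 20)` reports only the compulsory misses of a (one per line, 16384 for 1 MiB), while `simulate_misses(20, 1 << 20)` reports nearly twice as many: with five lines of b per set plus the streamed lines of a, an 8-way LRU set can no longer retain b between two passes.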

There is absolutely no reason to keep elements of a in cache, since they are used only once. I therefore ran a program variant with non-temporal loads of the a data, but the number of misses did not change. I also ran a variant with non-temporal prefetching of the a data, with the very same results.
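For reference, the prefetching variant might look roughly like the following. This is a scalar sketch, not the code that was measured: the function name accumulate_modular and the prefetch distance kPrefetchAhead are assumptions, and `__builtin_prefetch` is a GCC/Clang builtin whose locality argument 0 asks for a prefetch with no temporal locality (typically prefetchnta on x86). The hint is advisory, so the hardware is free to ignore it.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Adds the elements of a to b cyclically, issuing non-temporal prefetch hints for a.
void accumulate_modular(const std::vector<std::uint64_t>& a,
                        std::vector<std::uint64_t>& b) {
    assert(!b.empty());
    constexpr std::size_t kPrefetchAhead = 64;  // elements ahead (assumed, untuned)
    std::size_t j = 0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        // Only prefetch addresses that lie inside the array.
        if (i + kPrefetchAhead < a.size())
            __builtin_prefetch(&a[i + kPrefetchAhead], /*rw=*/0, /*locality=*/0);
        b[j] += a[i];
        if (++j == b.size()) j = 0;  // wrap around at the end of b
    }
}
```

The prefetch distance would need tuning on real hardware; the value here is only a placeholder.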

My benchmark code is as follows (variant w/o non-temporal prefetching shown):

    #include <cstdint>
    #include <cstdlib>
    #include <iostream>
    #include <immintrin.h>
    #include <papi.h>

    int main(int argc, char* argv[])
    {
        if (argc < 2) {
            std::cerr << "usage: " << argv[0] << " <size of b in kiB>" << std::endl;
            return 1;
        }

        // a: large array (64 MiB), aligned to 64 bytes
        uint64_t* a;
        const uint64_t a_bytes = 64 * 1024 * 1024;
        const uint64_t a_count = a_bytes / sizeof(uint64_t);
        posix_memalign((void**)(&a), 64, a_bytes);

        // b: small array, size in kiB given on the command line
        uint64_t* b;
        const uint64_t b_bytes = atol(argv[1]) * 1024;
        const uint64_t b_count = b_bytes / sizeof(uint64_t);
        posix_memalign((void**)(&b), 64, b_bytes);

        // fill a with ones using non-temporal stores
        __m256i ones = _mm256_set1_epi64x(1UL);
        for (uint64_t i = 0; i < a_count; i += 4)
            _mm256_stream_si256((__m256i*)(a + i), ones);

        // load b into L1 cache
        for (uint64_t i = 0; i < b_count; i++)
            b[i] = 0;

        int papi_events[1] = { PAPI_L1_DCM };
        long long papi_values[1];
        PAPI_start_counters(papi_events, 1);

        uint64_t* a_ptr = a;
        const uint64_t* a_ptr_end = a + a_count;
        uint64_t* b_ptr = b;
        const uint64_t* b_ptr_end = b + b_count;

        while (a_ptr < a_ptr_end) {
    #ifndef NTLOAD
            __m256i aa = _mm256_load_si256((__m256i*)a_ptr);
    #else
            __m256i aa = _mm256_stream_load_si256((__m256i*)a_ptr);
    #endif
            __m256i bb = _mm256_load_si256((__m256i*)b_ptr);
            bb = _mm256_add_epi64(aa, bb);
            _mm256_store_si256((__m256i*)b_ptr, bb);

            a_ptr += 4;
            b_ptr += 4;
            if (b_ptr >= b_ptr_end)
                b_ptr = b;  // wrap around at the end of b
        }

        PAPI_stop_counters(papi_values, 1);
        std::cout << "L1 cache misses: " << papi_values[0] << std::endl;

        free(a);
        free(b);
    }

What I wonder is whether CPU vendors support, or are going to support, non-temporal loads / prefetching or any other way to label some data as not to be held in cache (e.g., to tag their cache lines as least recently used). Similar scenarios are common in practice, e.g., in HPC. For example, in sparse iterative linear solvers / eigensolvers, matrix data are usually very large (larger than cache capacities), but vectors are sometimes small enough to fit into L3 or even L2 cache. We would then like to keep them there at all costs. Unfortunately, loading matrix data can evict cache lines, especially those of the x-vector, even though matrix elements are used only once in each solver iteration and there is no reason to keep them in cache after they have been processed.
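To make the solver scenario concrete, here is a minimal sketch of a sparse matrix-vector product in CSR format. The CsrMatrix type and the spmv function are hypothetical names used only for illustration; real solvers use tuned library kernels. The point is the access pattern: values and col_idx are streamed exactly once per product, whereas x is read repeatedly and irregularly and is therefore the data we would like to keep in cache.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical CSR container, for illustration only.
struct CsrMatrix {
    std::size_t rows = 0;
    std::vector<std::size_t> row_ptr;  // size rows + 1
    std::vector<std::size_t> col_idx;  // size nnz
    std::vector<double> values;        // size nnz
};

// Computes y = A * x.
// values and col_idx are streamed once per call; x is reused across rows.
std::vector<double> spmv(const CsrMatrix& A, const std::vector<double>& x) {
    assert(A.row_ptr.size() == A.rows + 1);
    std::vector<double> y(A.rows, 0.0);
    for (std::size_t r = 0; r < A.rows; ++r) {
        double sum = 0.0;
        for (std::size_t k = A.row_ptr[r]; k < A.row_ptr[r + 1]; ++k)
            sum += A.values[k] * x[A.col_idx[k]];  // streamed value, reused x entry
        y[r] = sum;
    }
    return y;
}
```

In an iterative solver this product is repeated every iteration, so each matrix entry passes through the cache once per iteration while the same x entries are needed again and again.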

UPDATE

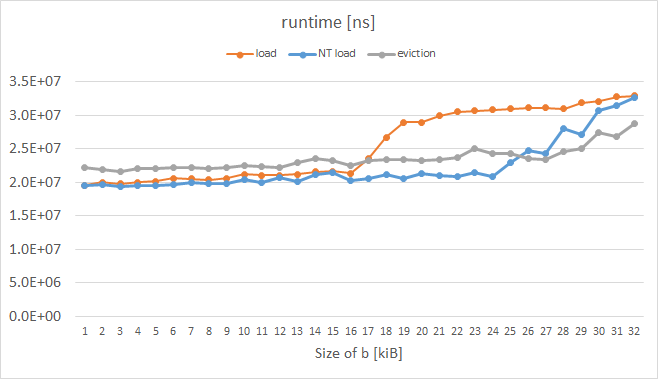

I just did a similar experiment on an Intel Xeon Phi KNC, while measuring runtime instead of L1 misses (I haven't find a way how to measure them reliably; PAPI and VTune gave weird metrics.) The results are here:

The orange curve represents ordinary loads and has the expected shape. The blue curve represents loads with the so-called eviction hint (EH) set in the instruction prefix, and the gray curve represents a case where each cache line of a was manually evicted. Both of these tricks enabled by KNC clearly worked as intended for b over 16 kiB. The code of the measured loop is as follows:

    while (a_ptr < a_ptr_end) {
    #ifdef NTLOAD
        __m512i aa = _mm512_extload_epi64((__m512i*)a_ptr,
            _MM_UPCONV_EPI64_NONE, _MM_BROADCAST64_NONE, _MM_HINT_NT);
    #else
        __m512i aa = _mm512_load_epi64((__m512i*)a_ptr);
    #endif
        __m512i bb = _mm512_load_epi64((__m512i*)b_ptr);
        bb = _mm512_or_epi64(aa, bb);
        _mm512_store_epi64((__m512i*)b_ptr, bb);

    #ifdef EVICT
        _mm_clevict(a_ptr, _MM_HINT_T0);
    #endif

        a_ptr += 8;
        b_ptr += 8;
        if (b_ptr >= b_ptr_end)
            b_ptr = b;
    }

UPDATE 2

On Xeon Phi, icpc generated a prefetch of a_ptr for the normal-load variant (orange curve):

    400e93: 62 d1 78 08 18 4c 24    vprefetch0 [r12+0x80]

When I manually modified this (by hex-editing the executable) to:

    400e93: 62 d1 78 08 18 44 24    vprefetchnta [r12+0x80]

I got the desired results, even better than the blue/gray curves. However, I was not able to force the compiler to generate non-temporal prefetching for me, even by using #pragma prefetch a_ptr:_MM_HINT_NTA before the loop :(
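The two encodings differ in a single byte, 0x4c versus 0x44. Assuming that byte is the ModRM byte following the 0x18 opcode (as in the standard x86 PREFETCHh encoding, where the reg field selects the hint), the hex edit only changes the reg field from 1 to 0. The sketch below, with the hypothetical names ModRm and decode_modrm, just splits a ModRM byte into its three fields to make that visible.

```cpp
#include <cstdint>

// Fields of an x86 ModRM byte: mod (bits 7-6), reg (bits 5-3), rm (bits 2-0).
struct ModRm {
    unsigned mod;
    unsigned reg;
    unsigned rm;
};

constexpr ModRm decode_modrm(std::uint8_t byte) {
    return ModRm{(byte >> 6) & 0x3u, (byte >> 3) & 0x7u, byte & 0x7u};
}

// 0x4c: reg = 1 (vprefetch0 in the listing); 0x44: reg = 0 (vprefetchnta).
static_assert(decode_modrm(0x4c).reg == 1, "reg field of 0x4c");
static_assert(decode_modrm(0x44).reg == 0, "reg field of 0x44");
```

The mod and rm fields are identical in both bytes, so the addressing form of the instruction is untouched by the edit.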