I'm afraid your understanding is completely backwards. :)

Think of "standard in", "standard out", and "standard error" from the program's perspective, not from the kernel's perspective.

When a program needs to print output, it normally prints to "standard out". A program typically prints output to standard out with printf, which prints ONLY to standard out.

When a program needs to print error information (not necessarily exceptions, those are a programming-language construct, imposed at a much higher level), it normally prints to "standard error". It normally does so with fprintf, which accepts a file stream to use when printing. The file stream could be any file opened for writing: standard out, standard error, or any other file that has been opened with fopen or fdopen.

"standard in" is used when the file needs to read input, using fread or fgets, or getchar.

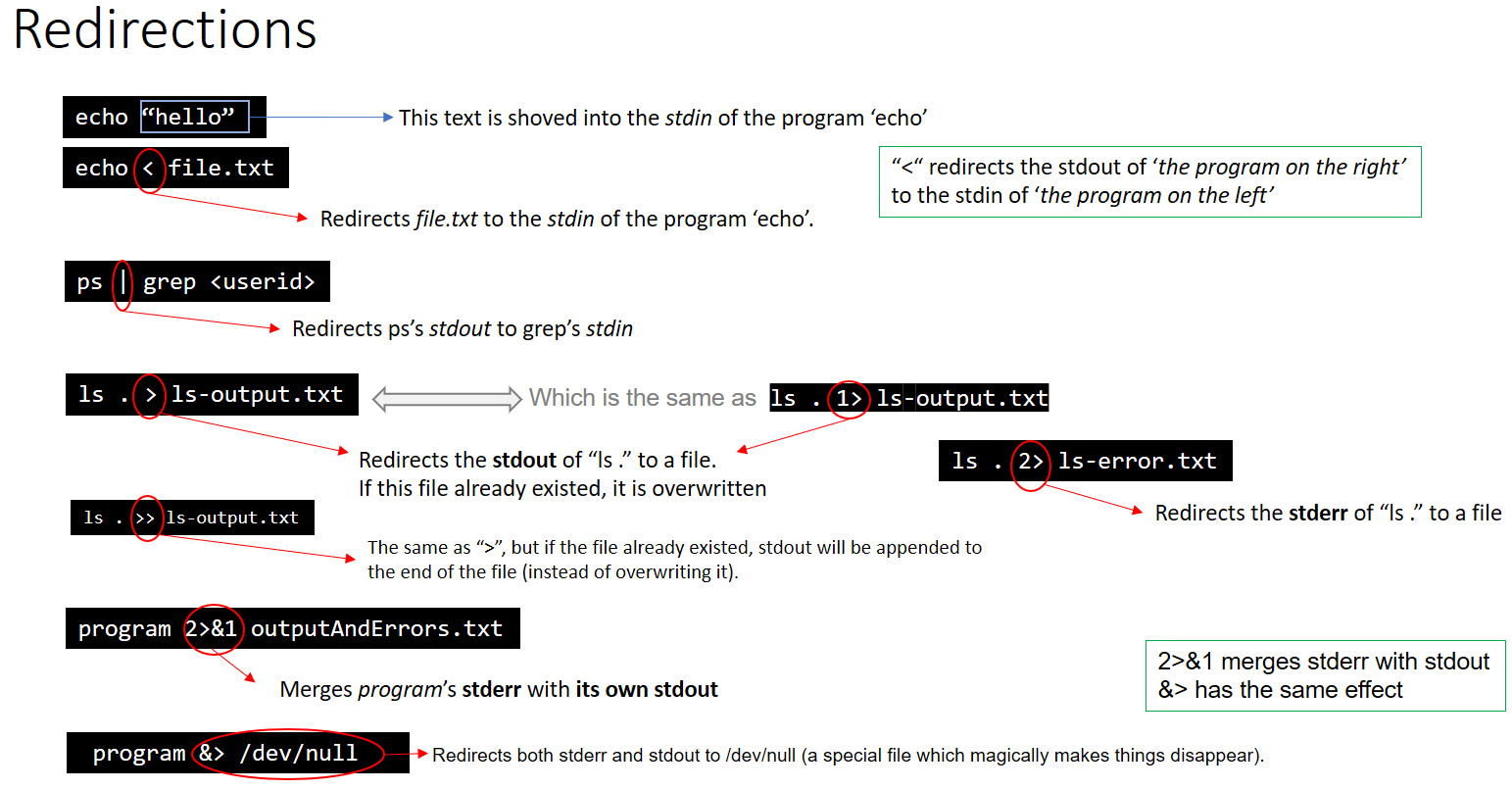

Any of these files can be easily redirected from the shell, like this:

cat /etc/passwd > /tmp/out # redirect cat's standard out to /tmp/foo

cat /nonexistant 2> /tmp/err # redirect cat's standard error to /tmp/error

cat < /etc/passwd # redirect cat's standard input to /etc/passwd

Or, the whole enchilada:

cat < /etc/passwd > /tmp/out 2> /tmp/err

There are two important caveats: First, "standard in", "standard out", and "standard error" are just a convention. They are a very strong convention, but it's all just an agreement that it is very nice to be able to run programs like this: grep echo /etc/services | awk '{print $2;}' | sort and have the standard outputs of each program hooked into the standard input of the next program in the pipeline.

Second, I've given the standard ISO C functions for working with file streams (FILE * objects) -- at the kernel level, it is all file descriptors (int references to the file table) and much lower-level operations like read and write, which do not do the happy buffering of the ISO C functions. I figured to keep it simple and use the easier functions, but I thought all the same you should know the alternatives. :)