I was looking for a solution to the same kind of problem.

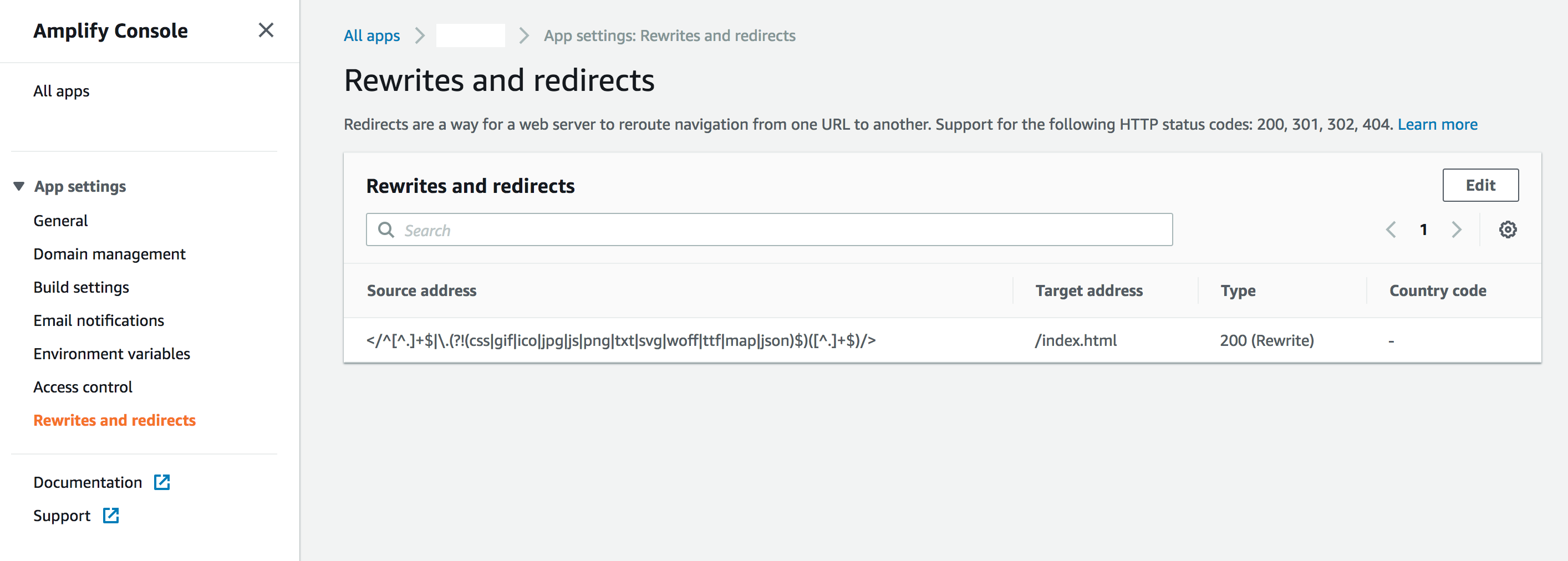

I ended up using a mix of the suggested solutions described above.

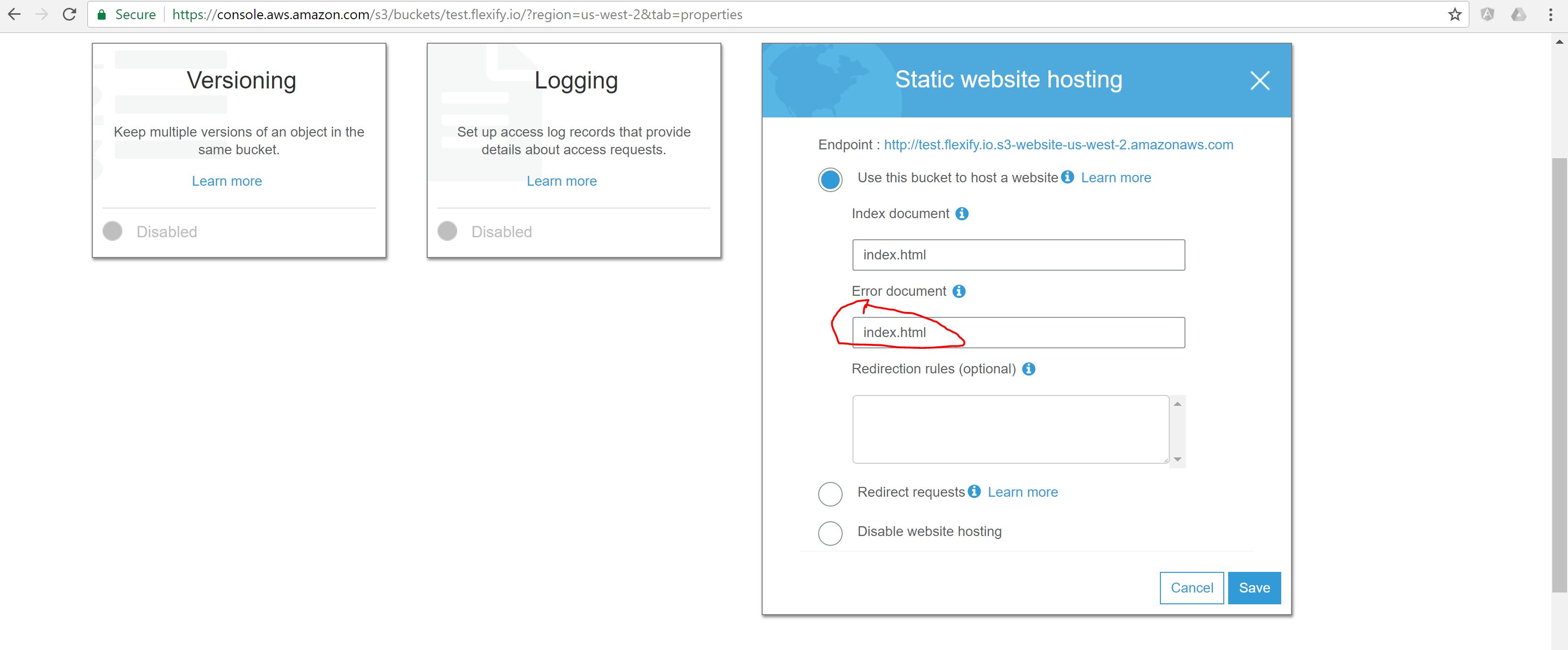

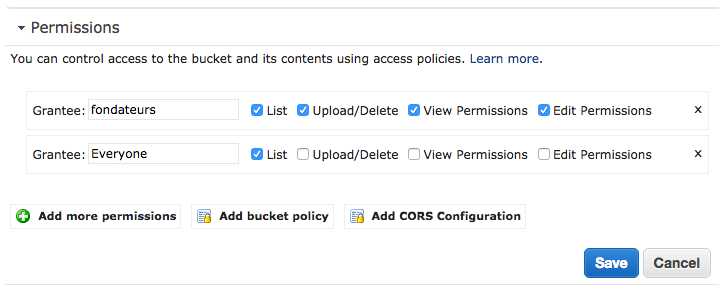

First, I have an S3 bucket with multiple folders; each folder holds a React/Redux website.

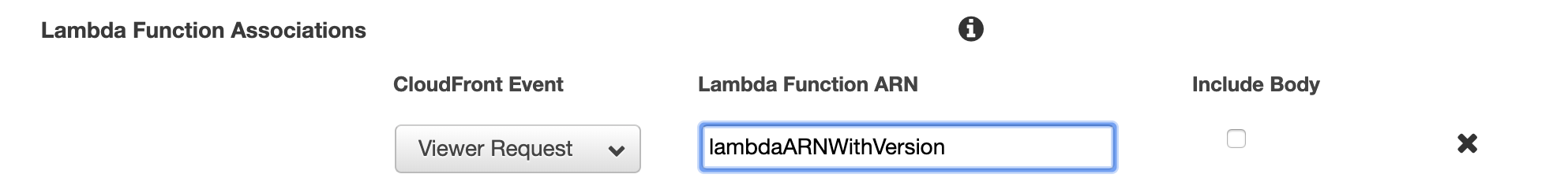

I also use CloudFront for cache invalidation.

So I had to use Routing Rules to catch 404 errors and redirect them to a hash-based URL:

    <RoutingRules>
      <RoutingRule>
        <Condition>
          <KeyPrefixEquals>website1/</KeyPrefixEquals>
          <HttpErrorCodeReturnedEquals>404</HttpErrorCodeReturnedEquals>
        </Condition>
        <Redirect>
          <Protocol>https</Protocol>
          <HostName>my.example.com</HostName>
          <ReplaceKeyPrefixWith>website1#</ReplaceKeyPrefixWith>
        </Redirect>
      </RoutingRule>
      <RoutingRule>
        <Condition>
          <KeyPrefixEquals>website2/</KeyPrefixEquals>
          <HttpErrorCodeReturnedEquals>404</HttpErrorCodeReturnedEquals>
        </Condition>
        <Redirect>
          <Protocol>https</Protocol>
          <HostName>my.example.com</HostName>
          <ReplaceKeyPrefixWith>website2#</ReplaceKeyPrefixWith>
        </Redirect>
      </RoutingRule>
      <RoutingRule>
        <Condition>
          <KeyPrefixEquals>website3/</KeyPrefixEquals>
          <HttpErrorCodeReturnedEquals>404</HttpErrorCodeReturnedEquals>
        </Condition>
        <Redirect>
          <Protocol>https</Protocol>
          <HostName>my.example.com</HostName>
          <ReplaceKeyPrefixWith>website3#</ReplaceKeyPrefixWith>
        </Redirect>
      </RoutingRule>
    </RoutingRules>
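To make the effect of one rule concrete, here is a minimal sketch that mimics how the redirect target is built from a missing key. The helper `applyRoutingRule` and the sample key `website1/users/42` are hypothetical and only for illustration; the real rewriting is done by S3 itself, not by your code.

```javascript
// Hypothetical helper: mimics how a routing rule turns a missing key
// into a redirect URL. S3 does this server-side; this is only a model.
function applyRoutingRule(key, rule) {
  if (!key.startsWith(rule.keyPrefixEquals)) {
    return null; // the rule's Condition does not match this key
  }
  // Swap the matched prefix for the replacement and keep the rest of the key.
  const rest = key.slice(rule.keyPrefixEquals.length);
  return `${rule.protocol}://${rule.hostName}/${rule.replaceKeyPrefixWith}${rest}`;
}

// Mirrors the first RoutingRule above.
const website1Rule = {
  keyPrefixEquals: 'website1/',
  protocol: 'https',
  hostName: 'my.example.com',
  replaceKeyPrefixWith: 'website1#',
};

// Sample key chosen for illustration only.
console.log(applyRoutingRule('website1/users/42', website1Rule));
// → https://my.example.com/website1#users/42
```

The original path ends up after the `#`, so the browser never sends it to S3 and the client-side code can pick it up from the hash.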

In my JS code, I needed to handle this with a `basename` config for react-router.

First of all, make sure your dependencies are compatible with each other. I had installed history==4.0.0, which was incompatible with react-router==3.0.1.

My dependencies are:

- "history": "3.2.0",

- "react": "15.4.1",

- "react-redux": "4.4.6",

- "react-router": "3.0.1",

- "react-router-redux": "4.0.7",

I've created a history.js file for loading history:

    import {useRouterHistory} from 'react-router';
    import createBrowserHistory from 'history/lib/createBrowserHistory';

    // Browser history scoped to this site's folder in the bucket.
    export const browserHistory = useRouterHistory(createBrowserHistory)({
      basename: '/website1/',
    });

    // After the S3 redirect, the original path arrives in the hash;
    // move it back into the history so the router can match it.
    browserHistory.listen((location) => {
      const path = (/#(.*)$/.exec(location.hash) || [])[1];
      if (path) {
        browserHistory.replace(path);
      }
    });

    export default browserHistory;

This piece of code handles the 404s that the server redirects to a hash URL: it takes the path from the hash and replaces the current history entry with it, so that our routes load.
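As a rough illustration of what the listener extracts, the regular expression can be tried on its own. The function name `pathFromHash` and the sample hashes are hypothetical; only the regex comes from the history.js file above.

```javascript
// Hypothetical helper wrapping the same regex used in the listener.
function pathFromHash(hash) {
  // Capture everything after the first '#'; undefined when there is no hash.
  return (/#(.*)$/.exec(hash) || [])[1];
}

console.log(pathFromHash('#users/42')); // users/42
console.log(pathFromHash(''));          // undefined
console.log(pathFromHash('#') === '');  // true (empty string, so the `if (path)` guard skips it)
```

Both `undefined` and the empty string are falsy, so the listener only calls `replace` when the hash actually carries a path.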

You can now use this file to configure your store and your Root file.

    import {routerMiddleware} from 'react-router-redux';
    import {applyMiddleware, compose} from 'redux';
    import rootSaga from '../sagas';
    import rootReducer from '../reducers';
    import {createInjectSagasStore, sagaMiddleware} from './redux-sagas-injector';
    import {browserHistory} from '../history';

    export default function configureStore(initialState) {
      const enhancers = [
        applyMiddleware(
          sagaMiddleware,
          routerMiddleware(browserHistory),
        )];

      return createInjectSagasStore(rootReducer, rootSaga, initialState, compose(...enhancers));
    }

The Root component:

    import React, {PropTypes} from 'react';
    import {Provider} from 'react-redux';
    import {Router} from 'react-router';
    import {syncHistoryWithStore} from 'react-router-redux';
    import MuiThemeProvider from 'material-ui/styles/MuiThemeProvider';
    import getMuiTheme from 'material-ui/styles/getMuiTheme';
    import variables from '!!sass-variable-loader!../../../css/variables/variables.prod.scss';
    import routesFactory from '../routes';
    import {browserHistory} from '../history';

    const muiTheme = getMuiTheme({
      palette: {
        primary1Color: variables.baseColor,
      },
    });

    const Root = ({store}) => {
      // Keep the basename-aware history in sync with the Redux store.
      const history = syncHistoryWithStore(browserHistory, store);
      const routes = routesFactory(store);

      return (
        <Provider {...{store}}>
          <MuiThemeProvider muiTheme={muiTheme}>
            <Router {...{history, routes}} />
          </MuiThemeProvider>
        </Provider>
      );
    };

    Root.propTypes = {
      store: PropTypes.shape({}).isRequired,
    };

    export default Root;

You'll notice that this configuration uses a Redux injector and a homebrew sagas injector to load JavaScript asynchronously via routing.

You can ignore those lines.