Many people have similar problems working with UTF-8 text on platforms whose system encoding is 8-bit (such as Windows). Encoding in R can be tricky because different functions handle encoding and conversion differently, and what works fine on one platform (OS X or Linux) can work poorly on another.

The problem has to do with your output connection and how Windows handles encodings and text connections. I've tried to replicate the problem using some Hebrew texts in both UTF-8 and an 8-bit encoding. We'll walk through the file reading issues as well, since there could be some snags there too.

For Tests

Created a short Hebrew language text file, encoded as UTF-8: hebrew-utf8.txt

Created a short Hebrew language text file, encoded as ISO-8859-8: hebrew-iso-8859-8.txt. (Note: You might need to tell your browser about the encoding in order to view this one properly - that's the case for Safari for instance.)

Ways to read the files

Now let's experiment. I am using Windows 7 for these tests (it actually works in OS X, my usual OS).

lines <- readLines("http://kenbenoit.net/files/hebrew-utf8.txt")

lines

## [1] "העברי ×”×•× ×—×‘×¨ בקבוצה ×”×›× ×¢× ×™×ª של שפות שמיות."

## [2] "זו היתה ×©×¤×ª× ×©×œ ×”×™×”×•×“×™× ×ž×•×§×“×, ×בל מן 586 ×œ×¤× ×”\"ס ×–×” התחיל להיות מוחלף על ידי ב×רמית."

That failed because readLines() assumed the text was in your system encoding, Windows-1252. But because no conversion occurred when the file was read, you can fix this just by setting the Encoding bit to UTF-8:

# this sets the bit for UTF-8

Encoding(lines) <- "UTF-8"

lines

## [1] "העברי הוא חבר בקבוצה הכנענית של שפות שמיות."

## [2] "זו היתה שפתם של היהודים מוקדם, אבל מן 586 לפנה\"ס זה התחיל להיות מוחלף על ידי בארמית."

But it is better to do this when you read the file:

# this does it in one pass

lines2 <- readLines("http://kenbenoit.net/files/hebrew-utf8.txt", encoding = "UTF-8")

lines2[1]

## [1] "העברי הוא חבר בקבוצה הכנענית של שפות שמיות."

Encoding(lines2)

## [1] "UTF-8" "UTF-8"
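As an aside on the first, failed attempt: the garbage characters are what UTF-8 bytes look like when they are interpreted as Windows-1252. The sketch below reproduces this for a single Hebrew letter. It assumes your iconv build knows the name "WINDOWS-1252" (most do); the variable names are only illustrative.

```r
# The Hebrew letter he (U+05D4), which is two bytes in UTF-8: d7 94
he <- "\u05d4"
bytes <- charToRaw(he)

# Drop the encoding mark, as happens when a file is read without encoding =
unmarked <- rawToChar(bytes)

# Interpret those two bytes as Windows-1252, which is what R on Windows did
mojibake <- iconv(unmarked, from = "WINDOWS-1252", to = "UTF-8")

# Two unrelated characters: U+00D7 (multiplication sign) and U+201D (right quote)
utf8ToInt(mojibake)
```

This is the same pair of characters that appears repeatedly in the garbled output of the first readLines() call.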

Now look at what happens if we try to read the same text, but encoded as the 8-bit ISO Hebrew code page.

lines3 <- readLines("http://kenbenoit.net/files/hebrew-iso-8859-8.txt")

lines3[1]

## [1] "äòáøé äåà çáø á÷áåöä äëðòðéú ùì ùôåú ùîéåú."

Setting the Encoding bit is of no help here, because the bytes that were read are not the UTF-8 sequences for the Hebrew code points. Encoding() does no actual conversion; it merely sets a mark on the string that tells R which of a few possible encodings to assume. Adding encoding = "ISO-8859-8" to the readLines() call would not help either, because that argument is only used to mark strings as Latin-1 or UTF-8, not to re-encode the input. What does work is converting the text after loading, using iconv():

# this will not fix things

Encoding(lines3) <- "UTF-8"

lines3[1]

## [1] "\xe4\xf2\xe1\xf8\xe9 \xe4\xe5\xe0 \xe7\xe1\xf8 \xe1\xf7\xe1\xe5\xf6\xe4 \xe4\xeb\xf0\xf2\xf0\xe9\xfa \xf9\xec \xf9\xf4\xe5\xfa \xf9\xee\xe9\xe5\xfa."

# but this will

iconv(lines3, "ISO-8859-8", "UTF-8")[1]

## [1] "העברי הוא חבר בקבוצה הכנענית של שפות שמיות."
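To make the difference between marking and converting concrete, here is a minimal sketch on a single byte. It assumes your iconv build supports "ISO-8859-8"; the variable names are hypothetical.

```r
# In ISO-8859-8 the Hebrew letter he is the single byte 0xE4
x <- rawToChar(as.raw(0xE4))

# Marking: the bytes stay exactly the same, only the declared encoding changes
y <- x
Encoding(y) <- "UTF-8"
identical(charToRaw(x), charToRaw(y))   # TRUE
validUTF8(y)                            # FALSE: a lone 0xE4 is not valid UTF-8

# Converting: iconv() rewrites the bytes into the UTF-8 sequence for U+05D4
z <- iconv(x, from = "ISO-8859-8", to = "UTF-8")
charToRaw(z)                            # d7 94
```

The mark is only useful when the bytes already are what the mark claims; otherwise the bytes themselves have to be rewritten.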

Overall I think the method used above for lines2 is the best approach.

How to output the files, preserving encoding

Now to your question about how to write this: the safest way is to control your connection at a low level, where you can specify the encoding. Otherwise, R on Windows defaults to your system encoding, which will lose the UTF-8. I thought the following would work, since it works absolutely fine in OS X (where simply calling writeLines() with a file name, without an explicit connection, also works fine):

## to write lines, use the encoding option of a connection object

f <- file("hebrew-output-UTF-8.txt", open = "wt", encoding = "UTF-8")

writeLines(lines2, f)

close(f)

But it does not work on Windows. You can see the Windows 7 results here: hebrew-output-UTF-8-file_encoding.txt.

So, here is how to do it in Windows: Once you are sure your text is encoded as UTF-8, just write it as raw bytes, without using any encoding, like this:

writeLines(lines2, "hebrew-output-UTF-8-useBytesTRUE.txt", useBytes = TRUE)

You can see the results at hebrew-output-UTF-8-useBytesTRUE.txt, which is now UTF-8 and looks correct.
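If you want to check the result from within R rather than by opening the file, one option is to inspect the raw bytes that were written. This is only a sketch on a made-up one-word example (the temporary file and variable names are assumptions, not part of the tests above):

```r
# A short Hebrew word, built from escapes so the script itself stays ASCII
txt <- enc2utf8("\u05e9\u05dc\u05d5\u05dd")

# Write the UTF-8 bytes as-is, without re-encoding to the system encoding
out <- tempfile(fileext = ".txt")
writeLines(txt, out, useBytes = TRUE)

# The first eight bytes should be the UTF-8 encoding of the four letters
raw_bytes <- readBin(out, what = "raw", n = file.size(out))
raw_bytes[1:8]                          # d7 a9 d7 9c d7 95 d7 9d

# Reading the file back and marking it as UTF-8 recovers the original string
back <- readLines(out, encoding = "UTF-8")
identical(back, txt)
```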

Added for write.csv

Note that the only reason you would want to do this is to make the .csv file available for import into other software, such as Excel. (And good luck working with UTF-8 in Excel/Windows...) Otherwise, you should just save the data.table in R's binary format using save(myDataFrame, file = "myDataFrame.RData"). But if you really need to output .csv, then:

How to write UTF-8 .csv files from a data.table in Windows

The problem with writing UTF-8 files using write.table() and write.csv() is that these open text connections, and Windows has limitations about encodings and text connections with respect to UTF-8. (This post offers a helpful explanation.) Following from an SO answer posted here, we can override this to write our own function to output UTF-8 .csv files.

This assumes that you have already set the Encoding() for any character elements to "UTF-8" (which happens upon import above for lines2).

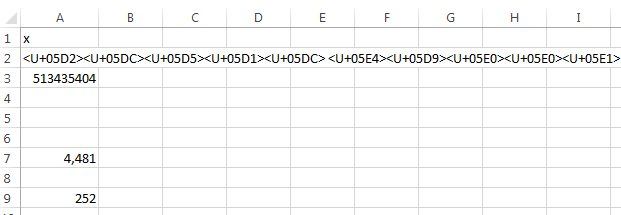

df <- data.frame(int = 1:2, text = lines2, stringsAsFactors = FALSE)

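write_utf8_csv <- function(df, file) {

    # header row: quoted column names

    firstline <- paste('"', names(df), '"', sep = "", collapse = " , ")

    # one quoted line per row (apply() coerces each row to character)

    data <- apply(df, 1, function(x) {paste('"', x, '"', sep = "", collapse = " , ")})

    # useBytes = TRUE writes the UTF-8 bytes as-is, with no re-encoding

    writeLines(c(firstline, data), file, useBytes = TRUE)

}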

write_utf8_csv(df, "df_csv.txt")

When we look at that file in a non-Unicode-challenged OS, it now looks fine:

KBsMBP15-2:Desktop kbenoit$ cat df_csv.txt

"int" , "text"

"1" , "העברי הוא חבר בקבוצה הכנענית של שפות שמיות."

"2" , "זו היתה שפתם של היהודים מוקדם, אבל מן 586 לפנה"ס זה התחיל להיות מוחלף על ידי בארמית."

KBsMBP15-2:Desktop kbenoit$ file df_csv.txt

df_csv.txt: UTF-8 Unicode text, with CRLF line terminators
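One caveat: the second data row above contains a double quote inside a quoted field, which some CSV readers will trip over, and the " , " separator puts spaces around each comma. If that matters for your target software, a variant along the following lines might help. It is a sketch, not a drop-in replacement: write_utf8_csv_escaped and quote_field are hypothetical names, quotes are escaped by doubling them (the usual CSV convention), and NA values are simply written as "NA".

```r
write_utf8_csv_escaped <- function(df, file) {
  # Quote one column, doubling any embedded double quotes
  quote_field <- function(x) {
    x <- enc2utf8(as.character(x))
    paste0('"', gsub('"', '""', x, fixed = TRUE), '"')
  }
  header <- paste(quote_field(names(df)), collapse = ",")
  # Quote column by column, then paste the columns together row-wise
  cols <- unname(lapply(df, quote_field))
  rows <- do.call(paste, c(cols, sep = ","))
  # As before, write the UTF-8 bytes without re-encoding
  writeLines(c(header, rows), file, useBytes = TRUE)
}

# Example with an embedded quote and a comma in the text column
df2 <- data.frame(int = 1:2,
                  text = c("\u05e9\u05dc\u05d5\u05dd",
                           "\u05dc\u05e4\u05e0\u05d4\"\u05e1, etc."),
                  stringsAsFactors = FALSE)
out <- tempfile(fileext = ".csv")
write_utf8_csv_escaped(df2, out)

# read.csv() understands doubled quotes, so the text should round-trip
back <- read.csv(out, encoding = "UTF-8", stringsAsFactors = FALSE)
identical(back$text, df2$text)
```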

readLines() statement. Please include a few lines of the input file, and the command you used to read it. Also you want to make sure that stringsAsFactors = FALSE in the data.frame() command. – Langouste