I have a question about using pybrain for time series regression. I plan to use the LSTM layer in pybrain to train on a time series and predict it.

I found some example code at the link below:

Request for example: Recurrent neural network for predicting next value in a sequence

In that example, the network is able to predict a sequence after it has been trained. The issue is that the network takes in all the sequential data in one go at the input layer. For example, if each training sample has 10 features, the 10 features are fed simultaneously into 10 input nodes.

From my understanding, this is no longer time series prediction, since all the features are fed into the network at the same moment rather than at different time steps. Is that right? Please correct me if I am wrong.
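To make the distinction concrete, here is a minimal sketch in plain Python (no pybrain) of the two ways of presenting the data, as I understand them. The helper names `sliding_windows` and `one_step_pairs` are my own, and using the next value as the target is an assumption taken from the title of the linked example.

```python
def sliding_windows(series, width):
    """Windowed framing: each sample is `width` consecutive values presented
    together, with the value that follows them as the target."""
    return [(series[i:i + width], series[i + width])
            for i in range(len(series) - width)]


def one_step_pairs(series):
    """Sequential framing: each sample is a single value, and the target is
    the value at the next time step."""
    return list(zip(series[:-1], series[1:]))


series = [1, 2, 3, 4, 5, 6]

# Three values enter the network at once, so it would need three input nodes.
windowed = sliding_windows(series, width=3)

# One value enters per time step, so a single input node is enough,
# but the network itself has to remember the earlier values.
sequential = one_step_pairs(series)
```

In the first framing the history is packed into the input vector; in the second it would have to live in the recurrent state of the network.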

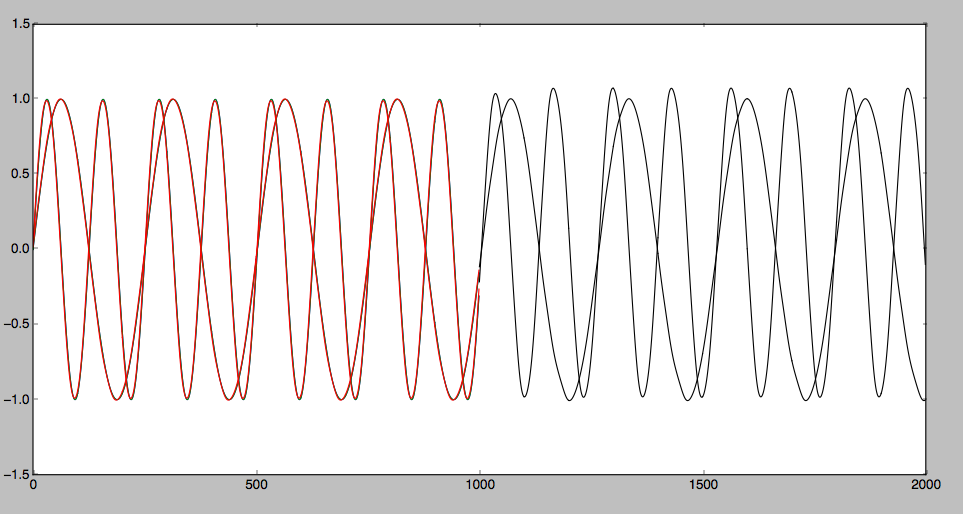

Therefore, what I am trying to achieve is a recurrent network that has only ONE input node and ONE output node. All the time series data will be fed into the input node sequentially, one value per time step. The network will be trained to reproduce the input at the output node.
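To show the shape of what I mean, here is a rough sketch in plain Python rather than pybrain. It is only an illustration under several assumptions: it uses a simple tanh recurrent layer (Elman style) instead of an LSTM, the weights are random and untrained, and the names `TinyRecurrentNet` and `run_sequence` are hypothetical.

```python
import math
import random


class TinyRecurrentNet:
    """One input node, one output node, and a small tanh hidden layer
    whose activations are fed back in at the next time step."""

    def __init__(self, n_hidden=3, seed=0):
        rng = random.Random(seed)
        self.n_hidden = n_hidden
        # Weights: input -> hidden, hidden -> hidden (recurrent), hidden -> output.
        self.w_in = [rng.uniform(-1, 1) for _ in range(n_hidden)]
        self.w_rec = [[rng.uniform(-1, 1) for _ in range(n_hidden)]
                      for _ in range(n_hidden)]
        self.w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
        self.reset()

    def reset(self):
        """Clear the hidden state before starting a new sequence."""
        self.h = [0.0] * self.n_hidden

    def step(self, x):
        """Consume ONE scalar input and return ONE scalar output."""
        new_h = []
        for j in range(self.n_hidden):
            total = self.w_in[j] * x
            total += sum(self.w_rec[j][k] * self.h[k]
                         for k in range(self.n_hidden))
            new_h.append(math.tanh(total))
        self.h = new_h
        return sum(w * h for w, h in zip(self.w_out, self.h))


def run_sequence(net, series):
    """Feed the series one value per time step, starting from a clean state."""
    net.reset()
    return [net.step(x) for x in series]


net = TinyRecurrentNet()
series = [1.0, 2.0, 1.0, 2.0, 1.0]
outputs = run_sequence(net, series)  # one output per time step
```

The point of the sketch is that the same input value can produce a different output at a different time step, because the hidden state carries information about the earlier inputs. Training the weights is not shown here.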

Could you please guide me in constructing the network I described? Thank you very much in advance.