After reading this post (answer on StackOverflow) (at the optimization section), I was wondering why conditional moves are not vulnerable for Branch Prediction Failure. I found on an article on cond moves here (PDF by AMD). Also there, they claim the performance advantage of cond. moves. But why is this? I don't see it. At the moment that that ASM-instruction is evaluated, the result of the preceding CMP instruction is not known yet.

Mis-predicted branches are expensive

A modern processor generally executes between one and three instructions each cycle if things go well (if it does not stall waiting for data dependencies for these instructions to arrive from previous instructions or from memory).

The statement above holds surprisingly well for tight loops, but this shouldn't blind you to one additional dependency that can prevent an instruction to be executed when its cycle comes: for an instruction to be executed, the processor must have started to fetch and decode it 15-20 cycles before.

What should the processor do when it encounters a branch? Fetching and decoding both targets does not scale (if more branches follow, an exponential number of paths would have to be fetched in parallel). So the processor only fetches and decodes one of the two branches, speculatively.

This is why mis-predicted branches are expensive: they cost the 15-20 cycles that are usually invisible because of an efficient instruction pipeline.

Conditional move is never very expensive

Conditional move does not require prediction, so it can never have this penalty. It has data dependencies, same as ordinary instructions. In fact, a conditional move has more data dependencies than ordinary instructions, because the data dependencies include both “condition true” and “condition false” cases. After an instruction that conditionally moves r1 to r2, the contents of r2 seem to depend on both the previous value of r2 and on r1. A well-predicted conditional branch allows the processor to infer more accurate dependencies. But data dependencies typically take one-two cycles to arrive, if they need time to arrive at all.

Note that a conditional move from memory to register would sometimes be a dangerous bet: if the condition is such that the value read from memory is not assigned to the register, you have waited on memory for nothing. But the conditional move instructions offered in instruction sets are typically register to register, preventing this mistake on the part of the programmer.

cmov is typically the same as add-with-carry. It looks the same to the out-of-order scheduler in the CPU: 2 integer regs and FLAGS input, 1 integer reg output. (Only difference is it doesn't write FLAGS). The danger with cmov is creating a data dependency at all, if it couples two things together or creates a loop carried dep chain. gcc optimization flag -O3 makes code slower than -O2 where GCC uses it wrong. See also Is CMOVcc considered a branching instruction? –

Afterworld adc is that cmov can take more than one flag input, hence for separately renamed flags it either needs to force the relevant flags together with a merging uop, or accept 2 flag inputs (like jcc seems to do on recent chips). –

Premiere It is all about the instruction pipeline. Remember, modern CPUs run their instructions in a pipeline, which yields a significant performance boost when the execution flow is predictable by the CPU.

cmov

add eax, ebx

cmp eax, 0x10

cmovne ebx, ecx

add eax, ecx

At the moment that that ASM-instruction is evaluated, the result of the preceding CMP instruction is not known yet.

Perhaps, but the CPU still knows that the instruction following the cmov will be executed right after, regardless of the result from the cmp and cmov instruction. The next instruction may thus safely be fetched/decoded ahead of time, which is not the case with branches.

The next instruction could even execute before the cmov does (in my example this would be safe)

branch

add eax, ebx

cmp eax, 0x10

je .skip

mov ebx, ecx

.skip:

add eax, ecx

In this case, when the CPU's decoder sees je .skip it will have to choose whether to continue prefetching/decoding instructions either 1) from the next instruction, or 2) from the jump target. The CPU will guess that this forward conditional branch won't happen, so the next instruction mov ebx, ecx will go into the pipeline.

A couple of cycles later, the je .skip is executed and the branch is taken. Oh crap! Our pipeline now holds some random junk that should never be executed. The CPU has to flush all its cached instructions and start fresh from .skip:.

That is the performance penalty of mispredicted branches, which can never happen with cmov since it doesn't alter the execution flow.

Indeed the result may not yet be known, but if other circumstances permit (in particular, the dependency chain) the cpu can reorder and execute instructions following the cmov. Since there is no branching involved, those instructions need to be evaluated in any case.

Consider this example:

cmoveq edx, eax

add ecx, ebx

mov eax, [ecx]

The two instructions following the cmov do not depend on the result of the cmov, so they can be executed even while the cmov itself is pending (this is called out of order execution). Even if they can't be executed, they can still be fetched and decoded.

A branching version could be:

jne skip

mov edx, eax

skip:

add ecx, ebx

mov eax, [ecx]

The problem here is that control flow is changing and the cpu isn't clever enough to see that it could just "insert" the skipped mov instruction if the branch was mispredicted as taken - instead it throws away everything it did after the branch, and restarts from scratch. This is where the penalty comes from.

You should read these. With Fog+Intel, just search for CMOV.

Linus Torvald's critique of CMOV circa 2007

Agner Fog's comparison of microarchitectures

Intel® 64 and IA-32 Architectures Optimization Reference Manual

Short answer, correct predictions are 'free' while conditional branch mispredicts can cost 14-20 cycles on Haswell. However, CMOV is never free. Still I think CMOV is a LOT better now than when Torvalds ranted. There is no single one correct for all time on all processors ever answer.

cmov is still a data dependency, so can create loop-carried dependency chains that branch-prediction would have hidden. Intel Broadwell/Skylake decode it to a single uop instead of 2 (Haswell and earlier), so it is a bit less expensive now. Sandybridge and later's uop cache means the decode-throughput penalty for multi-uop instructions is usually not a factor, too. Still, it doesn't change the fundamental difference between a data and a control dependency. Also, x86 cmov still doesn't have a form with an immediate operand, so x = x<3 ? x : 3 is still clunky to implement. –

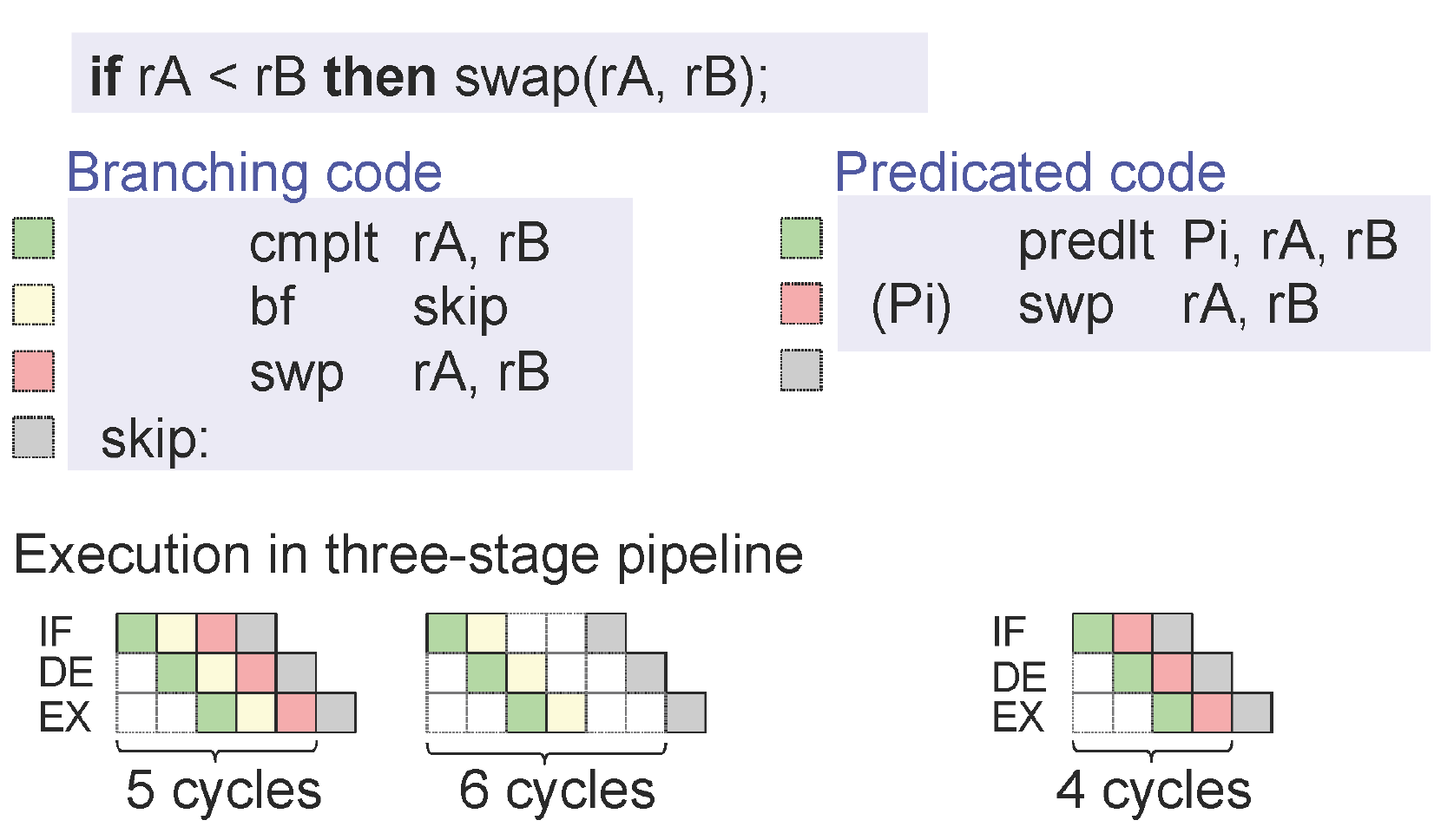

Afterworld I have this illustration from [Peter Puschner et al.] slide which explains how it transforms into single path code, and speedup the execution.

cmp / swplt, if it had a swap / exchange instruction.) Anyway, modern CPUs don't generally have bubbles from taken branches, they have bubbles from mispredicts: #11228309. In high-throughput code, correctly-predicted taken branches can reduce decode / front-end bandwidth some, though. –

Afterworld © 2022 - 2024 — McMap. All rights reserved.