This is from personal experience, however please have a look at this blog entry it explains very well different types of the engines:

Mongo Blog v3

![Wired Tiger vs MMAPv1]()

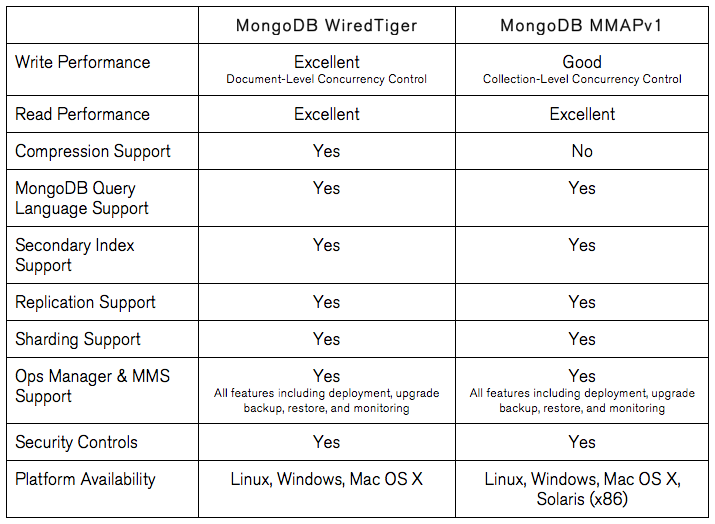

Comparing the MongoDB WiredTiger and MMAPv1 storage engines.Higher Performance & Efficiency Between 7x and 10x Greater Write Performance

MongoDB 3.0 provides more granular document-level concurrency control, delivering between 7x and 10x greater throughput for most write-intensive applications, while maintaining predictable low latency.

For me choice was very simple, I needed document level locks which makes WiredTiger ideal choice, we don't have Enterprise version of mongo hence in memory engine is not available. MMAPv1 Btree is very basic technique to map memory to hard drive and not very efficient.

The MMAP storage engine uses a process called “record allocation” to grab disk space for document storage. All records are contiguously located on disk, and when a document becomes larger than the allocated record, it must allocate a new record. New allocations require moving a document and updating all indexes that refer to the document, which takes more time than in-place updates and leads to storage fragmentation. Furthermore, MMAPv1 in it’s current iterations usually leads to high space utilization on your filesystem due to over-allocation of record space and it’s lack of support for compression.

As mentioned previously, a storage engine’s locking scheme is one of the most important factors in overall database performance. MMAPv1 has collection-level locking – meaning only one insert, update or delete operation can use a collection at a time. This type of locking scheme creates a very common scenario in concurrent workloads, where update/delete/insert operations are always waiting for the operation(s) in front of them to complete. Furthermore, oftentimes those operations are flowing in more quickly than they can be completed in serial fashion by the storage engine. To put it in context, imagine a giant supermarket on Sunday afternoon that only has one checkout line open: plenty of customers, but low throughput!

Everyone has different requirements, but for most cases WiredTiger would be ideal choice the fact that it makes atomic operations on document level and not collection level has a great advantage, you simply can't beat that.

More reads and not a lot of writes

If reading is your main concern here is one way to address that.

You can tweak Mongo Driver Read Preference Modes in the following way:

- Setup Replica Set, say 1 master and 3 secondaries.

- Set write concern to majority this would make

write a bit slower (trade off).

- Set read preference to secondary.

This setup will perform very well when you have a lot of reads, but as a tradeoff write would be slower. However throughput of read data would be great.

I hope this helps if you have additional questions add them as a comment and I will try to address it in this answer.

Also you can check MMAPv1 vs WiredTiger review and notice how he changed his mind from MMAPv1 to WiredTiger. The seller is document locking that performance you just can't beat.

For new projects, I use WiredTiger now. Since a migration from a compressed to an uncompressed WiredTiger storage is rather easy, I tend to start with compression to enhance the CPU utilization ("get more bang for the buck"). Should the compression have a noticeable impact on performance or UX, I migrate to uncompressed WiredTiger.

MongoDB database profiler

Best way of determining your database needs is to setup test cluster and run application on it with MongoDB profiler

Like most database profilers, the MongoDB profiler can be configured to only write profile information about queries that took longer than a given threshold. So once you know slow queries you can figure out if it reads vs writes or cpu vs ram and go from there.