A correctly formatted UTF8 file can have a Byte Order Mark as its first three octets. These are the hex values 0xEF, 0xBB, 0xBF. These octets serve to mark the file as UTF8 (since they are not relevant as "byte order" information).1 If this BOM does not exist, the consumer/reader is left to infer the encoding type of the text. Readers that are not UTF8 capable will read the bytes as some other encoding such as Windows-1252 and display the characters  at the start of the file.

There is a known bug where Excel, upon opening UTF8 CSV files via file association, assumes that they are in a single-byte encoding, disregarding the presence of the UTF8 BOM. This can not be fixed by any system default codepage or language setting. The BOM will not clue in Excel - it just won't work. (A minority report claims that the BOM sometimes triggers the "Import Text" wizard.) This bug appears to exist in Excel 2003 and earlier. Most reports (amidst the answers here) say that this is fixed in Excel 2007 and newer.

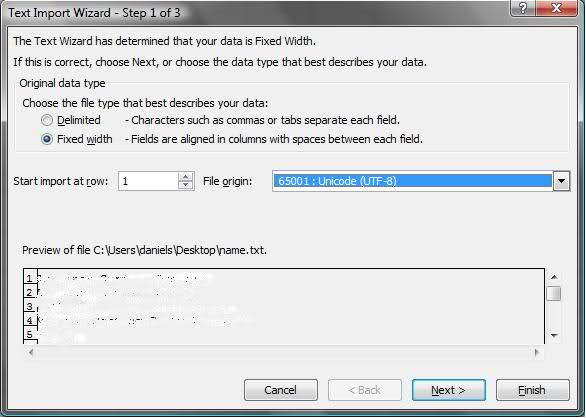

Note that you can always* correctly open UTF8 CSV files in Excel using the "Import Text" wizard, which allows you to specify the encoding of the file you're opening. Of course this is much less convenient.

Readers of this answer are most likely in a situation where they don't particularly support Excel < 2007, but are sending raw UTF8 text to Excel, which is misinterpreting it and sprinkling your text with à and other similar Windows-1252 characters. Adding the UTF8 BOM is probably your best and quickest fix.

If you are stuck with users on older Excels, and Excel is the only consumer of your CSVs, you can work around this by exporting UTF16 instead of UTF8. Excel 2000 and 2003 will double-click-open these correctly. (Some other text editors can have issues with UTF16, so you may have to weigh your options carefully.)

* Except when you can't, (at least) Excel 2011 for Mac's Import Wizard does not actually always work with all encodings, regardless of what you tell it. </anecdotal-evidence> :)