After reading the AWS documentation, I am still not clear about the CloudWatch metric statistics Average and Maximum, specifically for ECS CPUUtilization.

I have an AWS ECS cluster on Fargate with a service that has a minimum count of 2 healthy tasks. I have enabled autoscaling using the AWS/ECS CPUUtilization metric for my ClusterName and ServiceName. A CloudWatch alarm is configured to trigger when the average CPU utilization is more than 75% over a one-minute period for 3 data points.
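For reference, the alarm described above corresponds roughly to the following parameters. This is only a sketch: the cluster name, service name, and alarm name are placeholders, and I am assuming "3 data points" means 3 out of 3 evaluation periods. The keys follow the keyword arguments of boto3's `put_metric_alarm`.

```python
def build_cpu_alarm_params(cluster_name: str, service_name: str) -> dict:
    """Build keyword arguments for CloudWatch's put_metric_alarm call."""
    return {
        "AlarmName": f"{service_name}-cpu-high",  # hypothetical alarm name
        "Namespace": "AWS/ECS",
        "MetricName": "CPUUtilization",
        "Dimensions": [
            {"Name": "ClusterName", "Value": cluster_name},
            {"Name": "ServiceName", "Value": service_name},
        ],
        "Statistic": "Average",      # the alarm evaluates the Average statistic
        "Period": 60,                # one-minute period, in seconds
        "EvaluationPeriods": 3,      # 3 data points
        "DatapointsToAlarm": 3,      # assumption: all 3 must breach
        "Threshold": 75.0,           # percent
        "ComparisonOperator": "GreaterThanThreshold",  # "more than 75%"
    }


# Placeholder names; substitute the real cluster and service.
params = build_cpu_alarm_params("my-cluster", "my-service")

# With boto3 installed and credentials configured, this could be applied as:
#   import boto3
#   boto3.client("cloudwatch").put_metric_alarm(**params)
```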

I also have a health check set up with a frequency of 30 seconds and a timeout of 5 minutes.

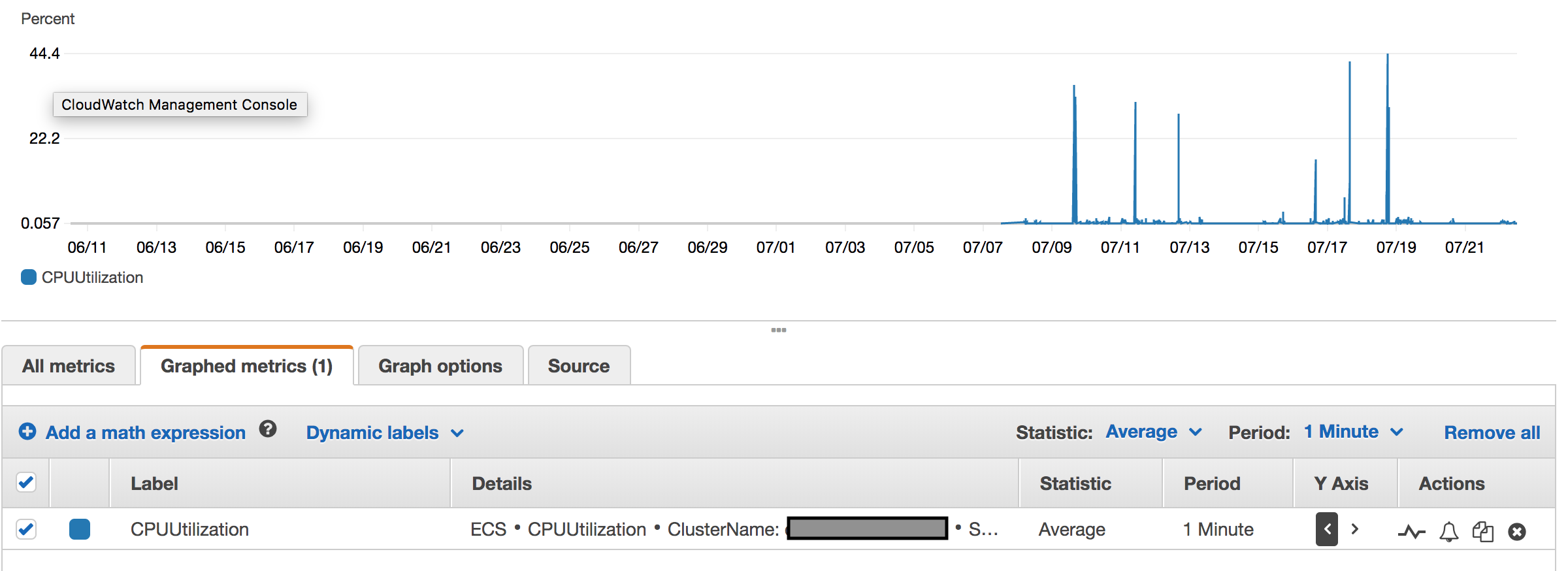

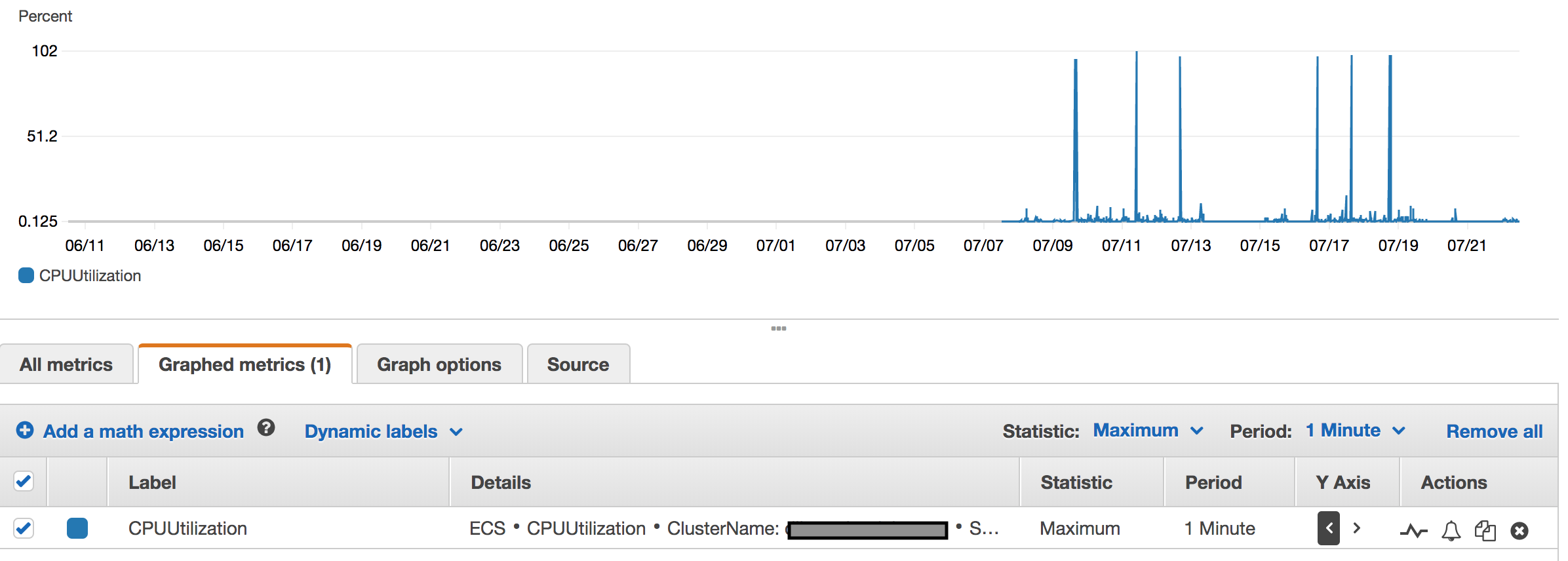

I ran a performance script to test the autoscaling behavior, but I am noticing that the service gets marked as unhealthy and new tasks get created. When I check the CPUUtilization metric, the Average statistic shows around 44% utilization, but the Maximum statistic shows more than 100% (screenshots attached).

So what do Average and Maximum mean here? Does Average mean the average CPU utilization across both of my tasks, and does Maximum mean that one of my tasks had CPU utilization above 100%?
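To make the question concrete, this is the interpretation I am asking about, sketched with made-up numbers. It assumes each task reports its own samples and that CloudWatch computes Average as Sum / SampleCount and Maximum as the largest sample in the period. The task names and values are hypothetical, and whether ECS actually aggregates this way is exactly what I am unsure of.

```python
# Hypothetical per-task CPU samples (percent) within one period; not real data.
samples_by_task = {
    "task-a": [110.0, 30.0],
    "task-b": [20.0, 16.0],
}

# Flatten all samples reported for the service during the period.
all_samples = [value for samples in samples_by_task.values() for value in samples]

# Average = Sum / SampleCount; Maximum = highest single sample.
average = sum(all_samples) / len(all_samples)
maximum = max(all_samples)

print(f"Average: {average}%  Maximum: {maximum}%")
```

Under this reading, a single busy sample could push Maximum above 100% while Average stays near 44% and never crosses the 75% alarm threshold.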