I've been messing with Keras, and like it so far. There's one big issue I have been having, when working with fairly deep networks: When calling model.train_on_batch, or model.fit etc., Keras allocates significantly more GPU memory than what the model itself should need. This is not caused by trying to train on some really large images, it's the network model itself that seems to require a lot of GPU memory. I have created this toy example to show what I mean. Here's essentially what's going on:

I first create a fairly deep network, and use model.summary() to get the total number of parameters needed for the network (in this case 206538153, which corresponds to about 826 MB). I then use nvidia-smi to see how much GPU memory Keras has allocated, and I can see that it makes perfect sense (849 MB).

I then compile the network, and can confirm that this does not increase GPU memory usage. And as we can see in this case, I have almost 1 GB of VRAM available at this point.

Then I try to feed a simple 16x16 image and a 1x1 ground truth to the network, and then everything blows up, because Keras starts allocating lots of memory again, for no reason that is obvious to me. Something about training the network seems to require a lot more memory than just having the model, which doesn't make sense to me. I have trained significantly deeper networks on this GPU in other frameworks, so that makes me think that I'm using Keras wrong (or there's something wrong in my setup, or in Keras, but of course that's hard to know for sure).

Here's the code:

from scipy import misc

import numpy as np

from keras.models import Sequential

from keras.layers import Dense, Activation, Convolution2D, MaxPooling2D, Reshape, Flatten, ZeroPadding2D, Dropout

import os

model = Sequential()

model.add(Convolution2D(256, 3, 3, border_mode='same', input_shape=(16,16,1)))

model.add(MaxPooling2D(pool_size=(2,2), strides=(2,2)))

model.add(Convolution2D(512, 3, 3, border_mode='same'))

model.add(MaxPooling2D(pool_size=(2,2), strides=(2,2)))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(Convolution2D(1024, 3, 3, border_mode='same'))

model.add(MaxPooling2D(pool_size=(2,2), strides=(2,2)))

model.add(Convolution2D(256, 3, 3, border_mode='same'))

model.add(Convolution2D(32, 3, 3, border_mode='same'))

model.add(MaxPooling2D(pool_size=(2,2)))

model.add(Flatten())

model.add(Dense(4))

model.add(Dense(1))

model.summary()

os.system("nvidia-smi")

raw_input("Press Enter to continue...")

model.compile(optimizer='sgd',

loss='mse',

metrics=['accuracy'])

os.system("nvidia-smi")

raw_input("Compiled model. Press Enter to continue...")

n_batches = 1

batch_size = 1

for ibatch in range(n_batches):

x = np.random.rand(batch_size, 16,16,1)

y = np.random.rand(batch_size, 1)

os.system("nvidia-smi")

raw_input("About to train one iteration. Press Enter to continue...")

model.train_on_batch(x, y)

print("Trained one iteration")

Which gives the following output for me:

Using Theano backend.

Using gpu device 0: GeForce GTX 960 (CNMeM is disabled, cuDNN 5103)

/usr/local/lib/python2.7/dist-packages/theano/sandbox/cuda/__init__.py:600: UserWarning: Your cuDNN version is more recent than the one Theano officially supports. If you see any problems, try updating Theano or downgrading cuDNN to version 5.

warnings.warn(warn)

____________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

====================================================================================================

convolution2d_1 (Convolution2D) (None, 16, 16, 256) 2560 convolution2d_input_1[0][0]

____________________________________________________________________________________________________

maxpooling2d_1 (MaxPooling2D) (None, 8, 8, 256) 0 convolution2d_1[0][0]

____________________________________________________________________________________________________

convolution2d_2 (Convolution2D) (None, 8, 8, 512) 1180160 maxpooling2d_1[0][0]

____________________________________________________________________________________________________

maxpooling2d_2 (MaxPooling2D) (None, 4, 4, 512) 0 convolution2d_2[0][0]

____________________________________________________________________________________________________

convolution2d_3 (Convolution2D) (None, 4, 4, 1024) 4719616 maxpooling2d_2[0][0]

____________________________________________________________________________________________________

convolution2d_4 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_3[0][0]

____________________________________________________________________________________________________

convolution2d_5 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_4[0][0]

____________________________________________________________________________________________________

convolution2d_6 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_5[0][0]

____________________________________________________________________________________________________

convolution2d_7 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_6[0][0]

____________________________________________________________________________________________________

convolution2d_8 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_7[0][0]

____________________________________________________________________________________________________

convolution2d_9 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_8[0][0]

____________________________________________________________________________________________________

convolution2d_10 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_9[0][0]

____________________________________________________________________________________________________

convolution2d_11 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_10[0][0]

____________________________________________________________________________________________________

convolution2d_12 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_11[0][0]

____________________________________________________________________________________________________

convolution2d_13 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_12[0][0]

____________________________________________________________________________________________________

convolution2d_14 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_13[0][0]

____________________________________________________________________________________________________

convolution2d_15 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_14[0][0]

____________________________________________________________________________________________________

convolution2d_16 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_15[0][0]

____________________________________________________________________________________________________

convolution2d_17 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_16[0][0]

____________________________________________________________________________________________________

convolution2d_18 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_17[0][0]

____________________________________________________________________________________________________

convolution2d_19 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_18[0][0]

____________________________________________________________________________________________________

convolution2d_20 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_19[0][0]

____________________________________________________________________________________________________

convolution2d_21 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_20[0][0]

____________________________________________________________________________________________________

convolution2d_22 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_21[0][0]

____________________________________________________________________________________________________

convolution2d_23 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_22[0][0]

____________________________________________________________________________________________________

convolution2d_24 (Convolution2D) (None, 4, 4, 1024) 9438208 convolution2d_23[0][0]

____________________________________________________________________________________________________

maxpooling2d_3 (MaxPooling2D) (None, 2, 2, 1024) 0 convolution2d_24[0][0]

____________________________________________________________________________________________________

convolution2d_25 (Convolution2D) (None, 2, 2, 256) 2359552 maxpooling2d_3[0][0]

____________________________________________________________________________________________________

convolution2d_26 (Convolution2D) (None, 2, 2, 32) 73760 convolution2d_25[0][0]

____________________________________________________________________________________________________

maxpooling2d_4 (MaxPooling2D) (None, 1, 1, 32) 0 convolution2d_26[0][0]

____________________________________________________________________________________________________

flatten_1 (Flatten) (None, 32) 0 maxpooling2d_4[0][0]

____________________________________________________________________________________________________

dense_1 (Dense) (None, 4) 132 flatten_1[0][0]

____________________________________________________________________________________________________

dense_2 (Dense) (None, 1) 5 dense_1[0][0]

====================================================================================================

Total params: 206538153

____________________________________________________________________________________________________

None

Thu Oct 6 09:05:42 2016

+------------------------------------------------------+

| NVIDIA-SMI 352.63 Driver Version: 352.63 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

|===============================+======================+======================|

| 0 GeForce GTX 960 Off | 0000:01:00.0 On | N/A |

| 30% 37C P2 28W / 120W | 1082MiB / 2044MiB | 9% Default |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: GPU Memory |

| GPU PID Type Process name Usage |

|=============================================================================|

| 0 1796 G /usr/bin/X 155MiB |

| 0 2597 G compiz 65MiB |

| 0 5966 C python 849MiB |

+-----------------------------------------------------------------------------+

Press Enter to continue...

Thu Oct 6 09:05:44 2016

+------------------------------------------------------+

| NVIDIA-SMI 352.63 Driver Version: 352.63 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

|===============================+======================+======================|

| 0 GeForce GTX 960 Off | 0000:01:00.0 On | N/A |

| 30% 38C P2 28W / 120W | 1082MiB / 2044MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: GPU Memory |

| GPU PID Type Process name Usage |

|=============================================================================|

| 0 1796 G /usr/bin/X 155MiB |

| 0 2597 G compiz 65MiB |

| 0 5966 C python 849MiB |

+-----------------------------------------------------------------------------+

Compiled model. Press Enter to continue...

Thu Oct 6 09:05:44 2016

+------------------------------------------------------+

| NVIDIA-SMI 352.63 Driver Version: 352.63 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

|===============================+======================+======================|

| 0 GeForce GTX 960 Off | 0000:01:00.0 On | N/A |

| 30% 38C P2 28W / 120W | 1082MiB / 2044MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: GPU Memory |

| GPU PID Type Process name Usage |

|=============================================================================|

| 0 1796 G /usr/bin/X 155MiB |

| 0 2597 G compiz 65MiB |

| 0 5966 C python 849MiB |

+-----------------------------------------------------------------------------+

About to train one iteration. Press Enter to continue...

Error allocating 37748736 bytes of device memory (out of memory). Driver report 34205696 bytes free and 2144010240 bytes total

Traceback (most recent call last):

File "memtest.py", line 65, in <module>

model.train_on_batch(x, y)

File "/usr/local/lib/python2.7/dist-packages/keras/models.py", line 712, in train_on_batch

class_weight=class_weight)

File "/usr/local/lib/python2.7/dist-packages/keras/engine/training.py", line 1221, in train_on_batch

outputs = self.train_function(ins)

File "/usr/local/lib/python2.7/dist-packages/keras/backend/theano_backend.py", line 717, in __call__

return self.function(*inputs)

File "/usr/local/lib/python2.7/dist-packages/theano/compile/function_module.py", line 871, in __call__

storage_map=getattr(self.fn, 'storage_map', None))

File "/usr/local/lib/python2.7/dist-packages/theano/gof/link.py", line 314, in raise_with_op

reraise(exc_type, exc_value, exc_trace)

File "/usr/local/lib/python2.7/dist-packages/theano/compile/function_module.py", line 859, in __call__

outputs = self.fn()

MemoryError: Error allocating 37748736 bytes of device memory (out of memory).

Apply node that caused the error: GpuContiguous(GpuDimShuffle{3,2,0,1}.0)

Toposort index: 338

Inputs types: [CudaNdarrayType(float32, 4D)]

Inputs shapes: [(1024, 1024, 3, 3)]

Inputs strides: [(1, 1024, 3145728, 1048576)]

Inputs values: ['not shown']

Outputs clients: [[GpuDnnConv{algo='small', inplace=True}(GpuContiguous.0, GpuContiguous.0, GpuAllocEmpty.0, GpuDnnConvDesc{border_mode='half', subsample=(1, 1), conv_mode='conv', precision='float32'}.0, Constant{1.0}, Constant{0.0}), GpuDnnConvGradI{algo='none', inplace=True}(GpuContiguous.0, GpuContiguous.0, GpuAllocEmpty.0, GpuDnnConvDesc{border_mode='half', subsample=(1, 1), conv_mode='conv', precision='float32'}.0, Constant{1.0}, Constant{0.0})]]

HINT: Re-running with most Theano optimization disabled could give you a back-trace of when this node was created. This can be done with by setting the Theano flag 'optimizer=fast_compile'. If that does not work, Theano optimizations can be disabled with 'optimizer=None'.

HINT: Use the Theano flag 'exception_verbosity=high' for a debugprint and storage map footprint of this apply node.

A few things to note:

- I have tried both Theano and TensorFlow backends. Both have the same problems, and run out of memory at the same line. In TensorFlow, it seems that Keras preallocates a lot of memory (about 1.5 GB) so nvidia-smi doesn't help us track what's going on there, but I get the same out-of-memory exceptions. Again, this points towards an error in (my usage of) Keras (although it's hard to be certain about such things, it could be something with my setup).

- I tried using CNMEM in Theano, which behaves like TensorFlow: It preallocates a large amount of memory (about 1.5 GB) yet crashes in the same place.

- There are some warnings about the CudNN-version. I tried running the Theano backend with CUDA but not CudNN and I got the same errors, so that is not the source of the problem.

- If you want to test this on your own GPU, you might want to make the network deeper/shallower depending on how much GPU memory you have to test this.

- My configuration is as follows: Ubuntu 14.04, GeForce GTX 960, CUDA 7.5.18, CudNN 5.1.3, Python 2.7, Keras 1.1.0 (installed via pip)

- I've tried changing the compilation of the model to use different optimizers and losses, but that doesn't seem to change anything.

- I've tried changing the train_on_batch function to use fit instead, but it has the same problem.

- I saw one similar question here on StackOverflow - Why does this Keras model require over 6GB of memory? - but as far as I can tell, I don't have those issues in my configuration. I've never had multiple versions of CUDA installed, and I've double checked my PATH, LD_LIBRARY_PATH and CUDA_ROOT variables more times than I can count.

- Julius suggested that the activation parameters themselves take up GPU memory. If this is true, can somebody explain it a bit more clearly? I have tried changing the activation function of my convolution layers to functions that are clearly hard-coded with no learnable parameters as far as I can tell, and that doesn't change anything. Also, it seems unlikely that these parameters would take up almost as much memory as the rest of the network itself.

- After thorough testing, the largest network I can train is about 453 MB of parameters, out of my ~2 GB of GPU RAM. Is this normal?

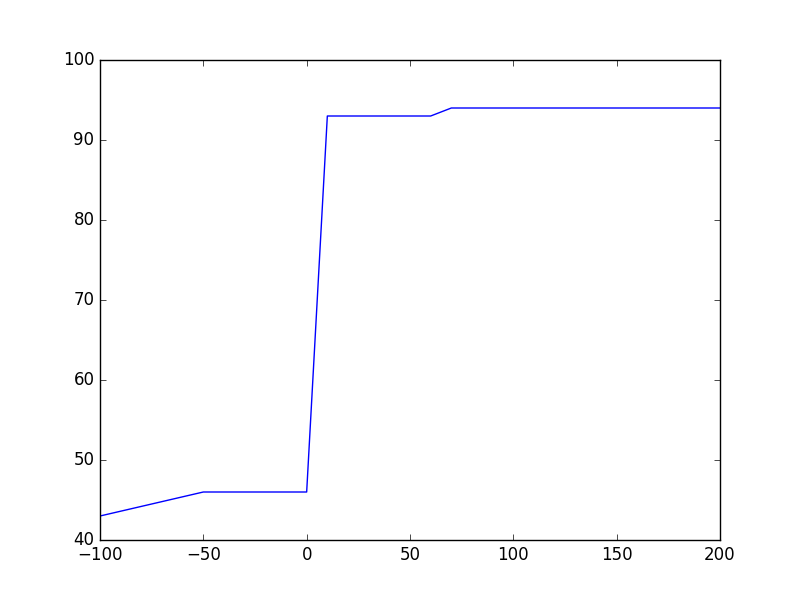

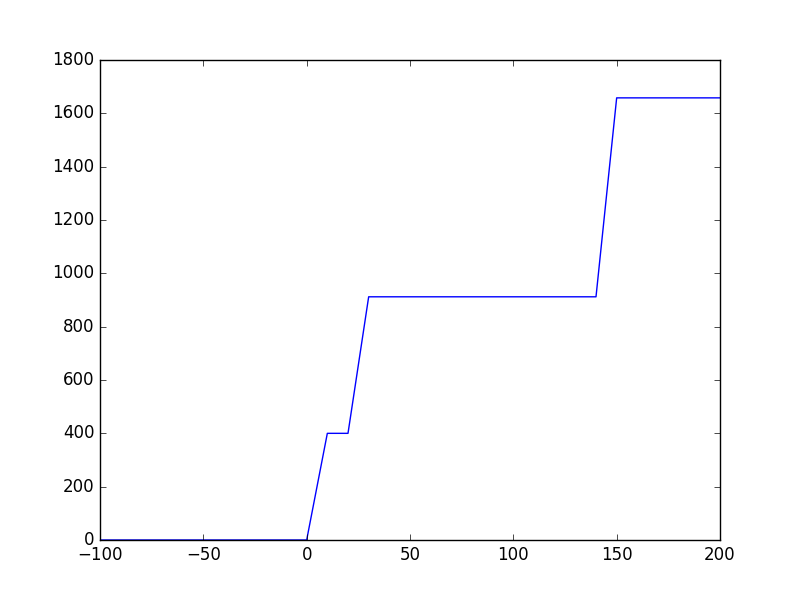

- After testing Keras on some smaller CNNs that do fit in my GPU, I can see that there are very sudden spikes in GPU RAM usage. If I run a network with about 100 MB of parameters, 99% of the time during training it'll be using less than 200 MB of GPU RAM. But every once in a while, memory usage spikes to about 1.3 GB. It seems safe to assume that it's these spikes that are causing my problems. I've never seen these spikes in other frameworks, but they might be there for a good reason? If anybody knows what causes them, and if there's a way to avoid them, please chime in!