If you want to keep the memory usage constant as you get more and more data, then you're going to have to resample that data somehow. That implies that you must apply some sort of rebinning scheme. You can wait until you acquire a certain amount of raw inputs before beginning the rebinning, but you cannot avoid it entirely.

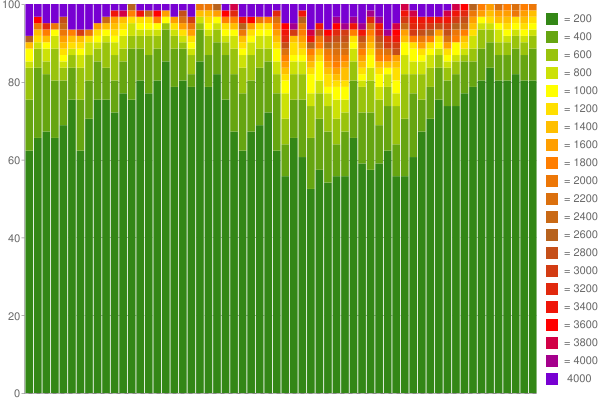

So your question is really asking "what's the best way of dynamically binning my data"? There are lots of approaches, but if you want to minimise your assumptions about the range or distribution of values you may receive, then a simple approach is to average over buckets of fixed size k, with logarithmically distributed widths. For example, lets say you want to hold 1000 values in memory at any one time. Pick a size for k, say 100. Pick your minimum resolution, say 1ms. Then

- The first bucket deals with values between 0-1ms (width=1ms)

- Second bucket: 1-3ms (w=2ms)

- Third bucket: 3-7ms (w=4ms)

- Fourth bucket: 7-15ms (w=8ms)

- ...

- Tenth bucket: 511-1023ms (w=512ms)

This type of log-scaled approach is similar to the chunking systems used in hash table algorithms, used by some filesystems and memory allocation algorithms. It works well when your data has a large dynamic range.

As new values come in, you can choose how you want to resample, depending on your requirements. For example, you could track a moving average, use a first-in-first-out, or some other more sophisticated method. See the Kademlia algorithm for one approach (used by Bittorrent).

Ultimately, rebinning must lose you some information. Your choices regarding the binning will determine the specifics of what information is lost. Another way of saying this is that the constant size memory store implies a trade-off between dynamic range and the sampling fidelity; how you make that trade-off is up to you, but like any sampling problem, there's no getting around this basic fact.

If you're really interested in the pros and cons, then no answer on this forum can hope to be sufficient. You should look into sampling theory. There's a huge amount of research on this topic available.

For what it's worth, I suspect that your server times will have a relatively small dynamic range, so a more relaxed scaling to allow higher sampling of common values may provide more accurate results.

Edit: To answer your comment, here's an example of a simple binning algorithm.

- You store 1000 values, in 10 bins. Each bin therefore holds 100 values. Assume each bin is implemented as a dynamic array (a 'list', in Perl or Python terms).

When a new value comes in:

- Determine which bin it should be stored in, based on the bin limits you've chosen.

- If the bin is not full, append the value to the bin list.

- If the bin is full, remove the value at the top of the bin list, and append the new value to the bottom of the bin list. This means old values are thrown away over time.

To find the 90th percentile, sort bin 10. The 90th percentile is the first value in the sorted list (element 900/1000).

If you don't like throwing away old values, then you can implement some alternative scheme to use instead. For example, when a bin becomes full (reaches 100 values, in my example), you could take the average of the oldest 50 elements (i.e. the first 50 in the list), discard those elements, and then append the new average element to the bin, leaving you with a bin of 51 elements that now has space to hold 49 new values. This is a simple example of rebinning.

Another example of rebinning is downsampling; throwing away every 5th value in a sorted list, for example.

I hope this concrete example helps. The key point to take away is that there are lots of ways of achieving a constant memory aging algorithm; only you can decide what is satisfactory given your requirements.