I have a Lambda function that runs a DynamoDB transaction similar to this:

    try {
        const reservationId = genId();
        await transactionFn();
        return {
            statusCode: 200,
            body: JSON.stringify({id: reservationId})
        };

        // Writes the reservation and decrements availability in one transaction.
        async function transactionFn() {
            try {
                await docClient.transactWrite({
                    TransactItems: [
                        {
                            Put: {
                                TableName: ReservationTable,
                                Item: {
                                    reservationId,
                                    userId,
                                    retryCount: Number(retryCount),
                                }
                            }
                        },
                        {
                            Update: {
                                TableName: EventDetailsTable,
                                Key: {eventId},
                                ConditionExpression: 'available >= :minValue',
                                UpdateExpression: `set available = available - :val, attendees = attendees + :val, lastUpdatedDate = :updatedAt`,
                                ExpressionAttributeValues: {
                                    ":val": 1,
                                    ":updatedAt": currentTime,
                                    ":minValue": 1
                                }
                            }
                        }
                    ]
                }).promise();
                return true
            } catch (e) {
                const transactionConflictError = e.message.search("TransactionConflict") !== -1;
                // const throttlingException = e.code === 'ThrottlingException';
                console.log("transactionFn:transactionConflictError:", transactionConflictError);
                if (transactionConflictError) {
                    // Retry the whole transaction on a conflict.
                    retryCount += 1;
                    await transactionFn();
                    return;
                }
                // if(throttlingException){
                //
                // }
                console.log("transactionFn:e.code:", JSON.stringify(e));
                throw e
            }
        }
    } catch (e) {
        // Handler-level error handling is not shown in this snippet.
        throw e
    }

It just updates two tables on each API call. If it encounters a transaction conflict error, it simply retries the transaction by calling the function recursively.
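For comparison, a bounded version of that retry could look roughly like the sketch below. It is not what my Lambda currently does: `withRetry`, `isTransactionConflict`, `sleep`, and the option names are hypothetical, and it assumes a conflict can be recognised the same way as in my snippet, by finding "TransactionConflict" in the error message. It caps the number of attempts and waits a random, exponentially growing delay between them instead of recursing immediately.

```javascript
// Hypothetical helper, not part of the original Lambda.
// Retries `fn` only on transaction conflicts, with a capped number of
// attempts and exponential backoff with full jitter between attempts.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Assumption: conflicts are detected as in the snippet above, by looking
// for "TransactionConflict" in the error message.
function isTransactionConflict(err) {
  return Boolean(
    err && typeof err.message === 'string' &&
    err.message.includes('TransactionConflict')
  );
}

async function withRetry(fn, { maxAttempts = 5, baseDelayMs = 50, maxDelayMs = 1000 } = {}) {
  for (let attempt = 1; ; attempt += 1) {
    try {
      // Pass the attempt number so the caller can record it if needed.
      return await fn(attempt);
    } catch (err) {
      // Give up on non-conflict errors or once the attempt budget is spent.
      if (!isTransactionConflict(err) || attempt >= maxAttempts) throw err;
      // Delay ceiling doubles each attempt, capped at maxDelayMs.
      const ceiling = Math.min(maxDelayMs, baseDelayMs * 2 ** (attempt - 1));
      await sleep(Math.random() * ceiling);
    }
  }
}
```

With this in place, the transaction call would be wrapped as `await withRetry(() => docClient.transactWrite(params).promise())`, where `params` stands for the same TransactItems object as above.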

The eventDetails table is receiving too many DB updates (I checked this with AWS Contributor Insights), so I raised its provisioned capacity to a higher value than before.

The reservationTable uses on-demand capacity.

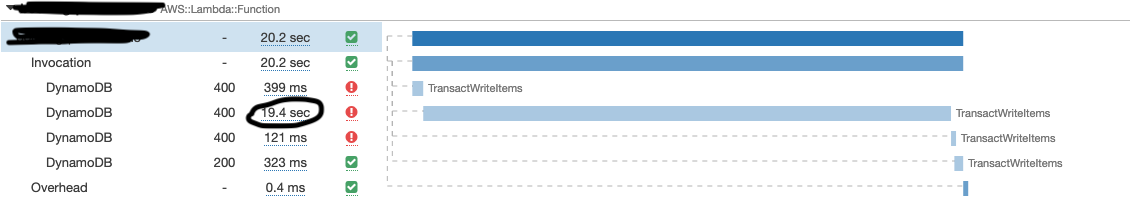

When I load test this API with 400 or more users using JMeter (master/slave configuration), some API calls fail with a throttling error and some take more than 20 seconds to respond. When I checked X-Ray for the slower calls, I found that DynamoDB is taking too long to complete this transaction.

Even with a much higher fixed provisioned capacity (I also tried on-demand mode), I still get a throttling exception for some API calls:

ProvisionedThroughputExceededException: The level of configured provisioned throughput for the table was exceeded. Consider increasing your provisioning level with the UpdateTable API.

UPDATE

One more thing: during the load test I always use the same eventId, which means every API request updates the same row. I found this article, which says that a single partition can handle at most 1000 WCU. Since I am always updating the same row in the eventDetails table during load testing, is that what is causing this issue?
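To put a rough number on that limit, here is a back-of-the-envelope sketch. It assumes the 1000 WCU per-partition ceiling from the article, and that a transactional write costs 2 WCU per 1 KB of item size, as the DynamoDB documentation describes. The function name and the item sizes are hypothetical; I have not measured my actual item size.

```javascript
// Rough estimate only; the item sizes used below are assumptions.
// Transactional writes consume 2 WCU per 1 KB of item size (rounded up),
// and a single partition is assumed to be capped at 1000 WCU.
function maxTransactionalWritesPerSecond(itemSizeBytes, partitionWcuLimit = 1000) {
  // Item size is billed in 1 KB steps, rounded up, with a minimum of one step.
  const sizeUnits = Math.max(1, Math.ceil(itemSizeBytes / 1024));
  // A transactional write costs twice a standard write.
  const wcuPerWrite = 2 * sizeUnits;
  return Math.floor(partitionWcuLimit / wcuPerWrite);
}

console.log(maxTransactionalWritesPerSecond(500));  // 500
console.log(maxTransactionalWritesPerSecond(1500)); // 250
```

If this estimate is right, a single eventId row could absorb at most about 500 transactional updates per second for an item under 1 KB, no matter how much capacity is provisioned for the table as a whole.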