Edit, 2016 — Why not both?

If you're interested in Postgres vs. Lucene, why not both? Check out the ZomboDB extension for Postgres, which integrates Elasticsearch as a first-class index type. It's still a fairly early project, but it looks really promising to me.

(Technically not available on Heroku, but still worth looking at.)

Disclosure: I'm a cofounder of the Websolr and Bonsai Heroku add-ons, so my perspective is a bit biased toward Lucene.

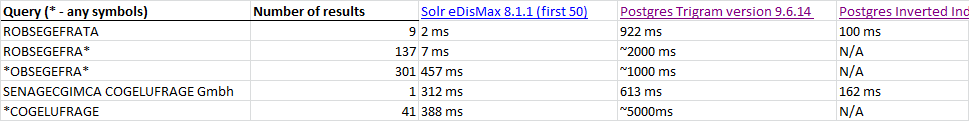

My read on Postgres full-text search is that it is pretty solid for straightforward use cases, but there are a number of reasons why Lucene (and thus Solr and Elasticsearch) is superior in both performance and functionality.

For starters, jpountz provides a truly excellent technical answer to the question "Why is Solr so much faster than Postgres?" It's worth reading through a couple of times to really digest.

I also commented on a recent RailsCast episode comparing relative advantages and disadvantages of Postgres full-text search versus Solr. Let me recap that here:

Pragmatic advantages to Postgres

- Reuse an existing service that you're already running instead of setting up and maintaining (or paying for) something else.

- Far superior to the fantastically slow SQL LIKE operator.

- Less hassle keeping data in sync since it's all in the same database — no application-level integration with some external data service API.
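To make the LIKE comparison concrete, here is a minimal Python sketch of the general idea behind a full-text index. It is not how Postgres implements it, and the sample rows and function names are made up for illustration. A LIKE-style substring match has to inspect every row, while an inverted index maps each word straight to the rows that contain it.

```python
from collections import defaultdict

# Hypothetical sample rows (id -> text), invented for this illustration.
DOCS = {
    1: "Postgres full-text search is pretty solid",
    2: "Lucene powers Solr and Elasticsearch",
    3: "The LIKE operator scans every row",
}

def like_scan(docs, needle):
    """Mimic a case-insensitive `LIKE '%needle%'`: inspect every row."""
    needle = needle.lower()
    return sorted(doc_id for doc_id, text in docs.items()
                  if needle in text.lower())

def build_inverted_index(docs):
    """Map each lowercased word to the set of row ids containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for word in text.lower().split():
            index[word].add(doc_id)
    return index

def index_lookup(index, term):
    """Look the term up directly instead of scanning rows."""
    return sorted(index.get(term.lower(), set()))

INDEX = build_inverted_index(DOCS)
```

With these rows, `like_scan(DOCS, "search")` returns `[1, 2]` because the substring also matches inside "Elasticsearch", whereas `index_lookup(INDEX, "search")` returns `[1]`. The lookup works on whole words and does not need to touch rows that cannot match.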

Advantages to Solr (or Elasticsearch)

Off the top of my head, in no particular order…

- Scale your indexing and search load separately from your regular database load.

- More flexible term analysis for things like accent normalizing, linguistic stemming, N-grams, markup removal… Other cool features like spellcheck, "rich content" (e.g., PDF and Word) extraction…

- Solr/Lucene can do everything on the Postgres full-text search TODO list just fine.

- Much better and faster term relevancy ranking, efficiently customizable at search time.

- Probably faster search performance for common terms or complicated queries.

- Probably more efficient indexing performance than Postgres.

- Better tolerance for change in your data model by decoupling indexing from your primary data store.
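For a rough feel of what "term analysis" means in the list above, here is a toy Python sketch of two of the steps mentioned: accent normalizing and N-grams. It uses only the standard library and is not Lucene's or Solr's actual analyzer API. The function names are hypothetical, and real analysis chains do far more (stemming, stop words, markup removal).

```python
import unicodedata

def fold_accents(text):
    """Strip diacritics: decompose characters, then drop combining marks."""
    decomposed = unicodedata.normalize("NFKD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))

def char_ngrams(token, n=3):
    """Return the character n-grams of a token, in order."""
    if len(token) < n:
        return [token]  # too short to split; keep the token whole
    return [token[i:i + n] for i in range(len(token) - n + 1)]

def analyze(text, n=3):
    """Lowercase, fold accents, split on whitespace, emit n-grams per token."""
    tokens = fold_accents(text.lower()).split()
    return {token: char_ngrams(token, n) for token in tokens}
```

For example, `analyze("Café Solr")` yields `{"cafe": ["caf", "afe"], "solr": ["sol", "olr"]}`, so a query for "cafe" can match text containing "Café", and partial-word matching becomes possible through the shared n-grams.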

Clearly I think a dedicated search engine based on Lucene is the better option here. Basically, you can think of Lucene as the de facto open source repository of search expertise.

But if your only other option is the LIKE operator, then Postgres full-text search is a definite win.