Updated: June 11, 2024.

TL;DR

ARAnchor (iOS)

ARAnchor is an invisible trackable object that holds a 3D model at the anchor's position. Think of ARAnchor as a parent transform node of your model that you can translate, rotate and scale like any other node in SceneKit or RealityKit. Every 3D model has a pivot point, so in an AR app this pivot point must coincide with the location of an ARAnchor.

If you don't use anchors in an ARKit or ARCore app, your 3D models may drift from where they were placed, which noticeably hurts the app's realism and user experience. (In RealityKit for iOS, however, it's impossible not to use anchors, because they are an integral part of a scene.) Hence, anchors are crucial elements of any AR scene.


According to ARKit 2017 documentation:

ARAnchor is a real-world position and orientation that can be used for placing objects in AR Scene. Adding an anchor to the session helps ARKit to optimize world-tracking accuracy in the area around that anchor, so that virtual objects appear to stay in place relative to the real world. If a virtual object moves, remove the corresponding anchor from the old position and add one at the new position.
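The last sentence of that quote (remove the old anchor, add a new one) can be sketched as follows. This is a minimal illustration, not an official recipe: the `moveAnchor` helper and the fallback name "movedAnchor" are hypothetical, while `ARAnchor(name:transform:)`, `add(anchor:)` and `remove(anchor:)` are regular ARKit calls.

```swift
import ARKit

/// Hypothetical helper: "moves" an anchor by replacing it,
/// because an ARAnchor's transform cannot be changed after creation.
func moveAnchor(_ oldAnchor: ARAnchor,
                to newTransform: simd_float4x4,
                in session: ARSession) -> ARAnchor {

    // Keep the old name if there was one (the fallback name is an assumption)
    let newAnchor = ARAnchor(name: oldAnchor.name ?? "movedAnchor",
                             transform: newTransform)

    session.remove(anchor: oldAnchor)   // drop the anchor at the old position
    session.add(anchor: newAnchor)      // let ARKit optimize tracking around the new one
    return newAnchor
}
```

Any content attached to the old anchor (an SCNNode or an AnchorEntity) has to be re-attached to the returned anchor by the caller.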

ARAnchor is the parent class of 10 other anchor types in ARKit, so all those subclasses inherit from ARAnchor. Usually you do not use ARAnchor directly. Note also that ARAnchor and Feature Points have nothing in common: Feature Points are visual elements used for tracking and debugging.

ARAnchor doesn't automatically track a real-world target. When you need automation, use the renderer() or session() delegate methods, which you can implement once you conform to the ARSCNViewDelegate or ARSessionDelegate protocol, respectively.

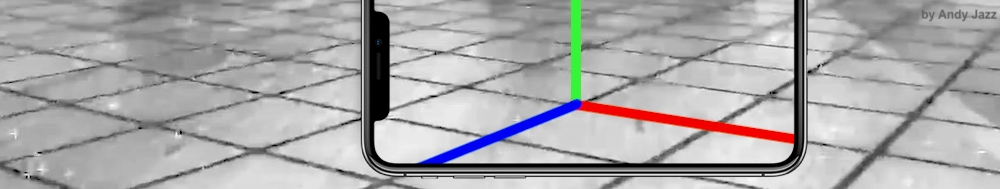

Here's an image with a visual representation of a plane anchor. Keep in mind that, by default, you can see neither a detected plane nor its corresponding ARPlaneAnchor. So, if you want to see the anchor in a scene, you must "visualize" it, for example with three thin SCNCylinder primitives. The color of each cylinder represents a particular axis: RGB maps to XYZ.

![enter image description here]()

In ARKit, ARAnchors can be added to your scene automatically in several scenarios:

ARPlaneAnchor

- If the horizontal and/or vertical planeDetection instance property is ON, ARKit is able to add ARPlaneAnchors to the anchors collection of the running session. An activated planeDetection sometimes considerably increases the time required for the scene understanding stage.

ARImageAnchor (conforms to ARTrackable protocol)

- This type of anchor contains information about the transform of a detected image (the anchor is placed at the image's center) in a world-tracking or image-tracking config. To activate image tracking, use the detectionImages instance property (or trackingImages in ARImageTrackingConfiguration). In ARKit 2.0 you can detect up to 25 images in total, and in ARKit 3.0 / 4.0 up to 100 images. In both cases, however, no more than 4 images can be tracked simultaneously. It was promised that ARKit 5.0 / 6.0 would detect and track up to 100 images at a time, but this is still not implemented.

ARBodyAnchor (conforms to ARTrackable protocol)

- You can turn on body tracking by running a session based on ARBodyTrackingConfiguration(). You'll get an ARBodyAnchor at the root joint of a real performer's skeleton or, in other words, at the pelvis position of the tracked character.

ARFaceAnchor (conforms to ARTrackable protocol)

- Face Anchor stores information about the head's topology, pose and facial expression. You can track ARFaceAnchor with the help of the front TrueDepth camera. When a face is detected, the Face Anchor is attached slightly behind the nose, in the center of the face. In ARKit 2.0 you can track just one face; in ARKit 3.0 and higher, up to 3 faces simultaneously. However, the number of tracked faces depends on the presence of a TrueDepth sensor and on the processor version: devices with a TrueDepth camera can track up to 3 faces, and devices with an A12+ chipset but without a TrueDepth camera can also track up to 3 faces.

ARObjectAnchor

- This anchor type stores the 6 Degrees of Freedom (position and orientation) of a real-world 3D object detected in a world-tracking session. Remember that you need to assign ARReferenceObject instances to the detectionObjects property of the session config.

AREnvironmentProbeAnchor

- Probe Anchor provides environmental lighting information for a specific area of space in a world-tracking session. ARKit's Artificial Intelligence uses it to supply reflective shaders with environmental reflections.

ARParticipantAnchor

- This is an indispensable anchor type for multiuser AR experiences. To employ it, set the isCollaborationEnabled property of ARWorldTrackingConfiguration to true, then import the MultipeerConnectivity framework.

ARMeshAnchor

- ARKit and LiDAR subdivide the reconstructed real-world scene surrounding the user into mesh anchors with corresponding polygonal geometry. Mesh anchors constantly update their data as ARKit refines its understanding of the real world. Although ARKit updates a mesh to reflect a change in the physical environment, the mesh's subsequent change is not intended to reflect in real time. Sometimes your reconstructed scene can have 30-40 anchors or even more, because each classified object (wall, chair, door or table) has its own anchor. Each ARMeshAnchor stores data about its vertices, its faces, the vertices' normals and one of eight classification cases.

ARGeoAnchor (conforms to ARTrackable protocol)

- In ARKit 4.0+ there's a geo anchor (a.k.a. location anchor) that tracks a geographic location using GPS, Apple Maps and additional environment data coming from Apple servers. This type of anchor identifies a specific area in the world that the app can refer to. When a user moves around the scene, the session updates the location anchor's transform based on the geo anchor's coordinates and the device's compass heading. Look at the list of supported cities.

ARAppClipCodeAnchor (conforms to ARTrackable protocol)

- This anchor tracks the position and orientation of an App Clip Code in the physical environment in ARKit 4.0+. You can use App Clip Codes to let users discover your App Clip in the real world. There are NFC-integrated App Clip Codes and scan-only App Clip Codes.
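Several of the properties mentioned in the list above can be gathered into one world-tracking configuration. The sketch below is only an illustration under assumptions: the function name and the asset catalog group names "AR Resources" and "AR Objects" are hypothetical, and a real app would rarely enable every feature at once because of the processing cost.

```swift
import ARKit

/// Hypothetical helper that enables several "automatic" anchor types.
func makeWorldTrackingConfiguration() -> ARWorldTrackingConfiguration {
    let config = ARWorldTrackingConfiguration()

    // ARPlaneAnchor
    config.planeDetection = [.horizontal, .vertical]

    // ARImageAnchor ("AR Resources" is an assumed asset catalog group)
    if let images = ARReferenceImage.referenceImages(inGroupNamed: "AR Resources",
                                                     bundle: nil) {
        config.detectionImages = images
        config.maximumNumberOfTrackedImages = 4
    }

    // ARObjectAnchor ("AR Objects" is an assumed asset catalog group)
    if let objects = ARReferenceObject.referenceObjects(inGroupNamed: "AR Objects",
                                                        bundle: nil) {
        config.detectionObjects = objects
    }

    // AREnvironmentProbeAnchor
    config.environmentTexturing = .automatic

    // ARParticipantAnchor
    config.isCollaborationEnabled = true

    // ARMeshAnchor (only on devices with a LiDAR scanner)
    if ARWorldTrackingConfiguration.supportsSceneReconstruction(.meshWithClassification) {
        config.sceneReconstruction = .meshWithClassification
    }
    return config
}
```

Usage, assuming `sceneView` is your ARSCNView: `sceneView.session.run(makeWorldTrackingConfiguration())`. Body, face and geo anchors need their own configuration classes, so they are not covered by this sketch.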


There are also other regular approaches to create anchors in AR session:
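One such approach is ray-casting from a screen point and creating an anchor at the hit location. The following is a minimal sketch, assuming a RealityKit `ARView`; the function name and the anchor name "tapAnchor" are hypothetical.

```swift
import ARKit
import RealityKit

/// Hypothetical helper: creates an ARAnchor where a screen-space ray
/// hits an estimated plane and adds it to the running session.
func addAnchor(at point: CGPoint, in arView: ARView) -> ARAnchor? {

    guard let result = arView.raycast(from: point,
                                  allowing: .estimatedPlane,
                                 alignment: .any).first
    else { return nil }   // nothing was hit

    let anchor = ARAnchor(name: "tapAnchor", transform: result.worldTransform)
    arView.session.add(anchor: anchor)
    return anchor
}
```

A typical call site would be a tap gesture handler that passes the tap location in the view's coordinates.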

This code snippet shows how to use an ARPlaneAnchor in the renderer(_:didAdd:for:) delegate method:

    func renderer(_ renderer: SCNSceneRenderer,
                 didAdd node: SCNNode,
                  for anchor: ARAnchor) {

        // React only to plane anchors
        guard let planeAnchor = anchor as? ARPlaneAnchor
        else { return }

        let grid = Grid(anchor: planeAnchor)
        node.addChildNode(grid)
    }
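The `Grid` type used above is not defined in this post. A minimal sketch of what such a class might look like is given below; everything in it (the geometry, the color, the use of `extent`) is an assumption made for illustration only.

```swift
import ARKit
import SceneKit
import UIKit

/// Hypothetical helper: a flat, semi-transparent plane sized like an ARPlaneAnchor.
final class Grid: SCNNode {

    init(anchor: ARPlaneAnchor) {
        super.init()

        // `extent` is deprecated since iOS 16 in favor of `planeExtent`,
        // but it is used here because it exists on earlier versions too
        let plane = SCNPlane(width: CGFloat(anchor.extent.x),
                            height: CGFloat(anchor.extent.z))
        plane.firstMaterial?.diffuse.contents = UIColor.cyan.withAlphaComponent(0.4)
        plane.firstMaterial?.isDoubleSided = true

        let planeNode = SCNNode(geometry: plane)
        // SCNPlane is vertical by default, so lay it down in the anchor's XZ plane
        planeNode.eulerAngles.x = -.pi / 2
        planeNode.position = SCNVector3(anchor.center.x, 0, anchor.center.z)
        addChildNode(planeNode)
    }

    required init?(coder: NSCoder) {
        fatalError("init(coder:) has not been implemented")
    }
}
```

This sketch does not follow later changes of the plane; for that you would also resize the geometry in renderer(_:didUpdate:for:).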

Anchor (visionOS)

The ARKit 2023-2024 API has been significantly modified for use on visionOS. The main innovation is the creation of three protocols (composition over inheritance, remember?): Anchor, its descendant TrackableAnchor, and DataProvider. Here are ten types of visionOS ARKit anchors, which are now structs, not classes. Each anchor type has a corresponding provider object: for example, ImageAnchor has an ImageTrackingProvider, HandAnchor has a HandTrackingProvider, and so on.

    @available(visionOS 1.0, *)
    public struct WorldAnchor : TrackableAnchor, @unchecked Sendable

    // The position and orientation of Apple Vision Pro headset.
    @available(visionOS 1.0, *)
    public struct DeviceAnchor : TrackableAnchor, @unchecked Sendable

    @available(visionOS 1.0, *)
    public struct PlaneAnchor : Anchor, @unchecked Sendable

    @available(visionOS 1.0, *)
    public struct HandAnchor : TrackableAnchor, @unchecked Sendable

    @available(visionOS 1.0, *)
    public struct MeshAnchor : Anchor, @unchecked Sendable

    @available(visionOS 1.0, *)
    public struct ImageAnchor : TrackableAnchor, @unchecked Sendable

    // Represents a tracked room.
    @available(visionOS 2.0, *)
    public struct RoomAnchor : Anchor, @unchecked Sendable, Equatable

    // Represents a detected barcode or QR-code.
    @available(visionOS 2.0, *)
    public struct BarcodeAnchor : Anchor, @unchecked Sendable

    // Represents a tracked reference object (like ARObjectAnchor in iOS).
    @available(visionOS 2.0, *)
    public struct ObjectAnchor : TrackableAnchor, @unchecked Sendable, Equatable

    // Represents an environment probe in the world.
    @available(visionOS 2.0, *)
    public struct EnvironmentProbeAnchor : Anchor, @unchecked Sendable, Equatable

Other ARKit innovations on visionOS worth noting are the ARKitSession object, which is capable of running several providers, and the Pose structure (XYZ position and XYZ rotation), an analogue of which has long been at the disposal of ARCore developers. The visionOS ARKit API also has a new level of automation: WorldAnchors now include an auto-persistence feature.
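To give a feel for the session/provider pattern, here is a minimal sketch that runs a WorldTrackingProvider and adds one WorldAnchor. It assumes the code runs inside an immersive space on visionOS; the function name and the one-meter offset are illustrative choices, not part of the API.

```swift
import ARKit   // visionOS flavor of ARKit

@available(visionOS 1.0, *)
func runWorldTracking() async {
    let session = ARKitSession()
    let worldTracking = WorldTrackingProvider()

    do {
        // One session can run several providers; here only one is used
        try await session.run([worldTracking])

        // Place an anchor one meter in front of the origin (arbitrary choice)
        var transform = matrix_identity_float4x4
        transform.columns.3.z = -1.0
        let anchor = WorldAnchor(originFromAnchorTransform: transform)
        try await worldTracking.addAnchor(anchor)
    } catch {
        print("World tracking failed: \(error)")
        return
    }

    // Anchor changes arrive as an asynchronous sequence
    for await update in worldTracking.anchorUpdates {
        print(update.event, update.anchor.id)
    }
}
```

Because world anchors are persisted automatically, previously added anchors may show up in `anchorUpdates` on the next launch.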

AnchorEntity (iOS and visionOS)

AnchorEntity is the alpha and omega of RealityKit. According to the 2019 RealityKit documentation:

AnchorEntity is an anchor that tethers virtual content to a real-world object in an AR session.

The RealityKit framework and the Reality Composer app were announced at WWDC'19. They introduced a new class named AnchorEntity. You can use an AnchorEntity as the root of any entity hierarchy, and you must add it to the scene's anchors collection. AnchorEntity automatically tracks a real-world target. In RealityKit and Reality Composer, AnchorEntity is at the top of the hierarchy. A single anchor is able to hold a hundred models, and in that case it's more stable than using 100 separate anchors, one per model.

Let's see how it looks in code:

    func makeUIView(context: Context) -> ARView {

        let arView = ARView(frame: .zero)

        // Experience is the type generated from a Reality Composer project
        let modelAnchor = try! Experience.loadModel()
        arView.scene.anchors.append(modelAnchor)
        return arView
    }

AnchorEntity has three components: Anchoring, Transform and Synchronization.

To find out the difference between ARAnchor and AnchorEntity look at THIS POST.

Here are the AnchorEntity cases and initializers available in RealityKit for iOS:

    // Fixed position in the AR scene
    AnchorEntity(.world(transform: mtx))

    // For body tracking (a.k.a. Motion Capture)
    AnchorEntity(.body)

    // Pinned to the tracking camera
    AnchorEntity(.camera)

    // For face tracking (Selfie Camera config)
    AnchorEntity(.face)

    // For image tracking config
    AnchorEntity(.image(group: "GroupName", name: "forModel"))

    // For object tracking config
    AnchorEntity(.object(group: "GroupName", name: "forObject"))

    // For plane detection with surface classification
    AnchorEntity(.plane([.any], classification: [.seat], minimumBounds: [1, 1]))

    // When you use ray-casting
    AnchorEntity(raycastResult: myRaycastResult)

    // When you use ARAnchor with a given identifier
    AnchorEntity(.anchor(identifier: uuid))

    // Creates anchor entity on a basis of ARAnchor
    AnchorEntity(anchor: arAnchor)

And here are the only two AnchorEntity cases available in RealityKit for macOS:

    // Fixed world position in VR scene
    AnchorEntity(.world(transform: mtx))

    // Camera transform
    AnchorEntity(.camera)

👓 In addition to the above, visionOS allows you to use three more anchors: 👓

    // Camera Position anchor
    AnchorEntity(.head)

    // User's hand anchor, taking into account a chirality
    AnchorEntity(.hand(.right, location: .thumbTip))

    // Real-world's Object anchor
    AnchorEntity(.referenceObject(from: .init(name: "...")))

Usually, the term chirality means a lack of symmetry between the left and right sides. In RealityKit, however, chirality is expressed by three cases: .either, .left and .right. There are also five cases describing the anchor's location: .wrist, .palm, .thumbTip, .indexFingerTip and .aboveHand.

If you want access to all 27 joints of the left or right hand's skeletal structure, use visionOS ARKit's trackable HandAnchor.
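Reading a single joint from a HandAnchor might look like the sketch below. It assumes an immersive space on visionOS and that the user has granted hand-tracking permission; the function name and the choice of the index finger tip are illustrative.

```swift
import ARKit   // visionOS flavor of ARKit

@available(visionOS 1.0, *)
func trackIndexFingerTips() async {
    let session = ARKitSession()
    let handTracking = HandTrackingProvider()

    do {
        try await session.run([handTracking])
    } catch {
        print("Could not start hand tracking: \(error)")
        return
    }

    for await update in handTracking.anchorUpdates {
        let hand = update.anchor
        guard hand.isTracked, let skeleton = hand.handSkeleton else { continue }

        // Joint transforms are expressed relative to the hand anchor,
        // so multiply by the anchor's transform to get world space
        let tip = skeleton.joint(.indexFingerTip)
        let originFromTip = hand.originFromAnchorTransform * tip.anchorFromJointTransform

        print(hand.chirality, originFromTip.columns.3)
    }
}
```

Unlike AnchorEntity(.hand(...)), this route gives you raw transforms that you have to apply to your entities yourself.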

You can use any ARAnchor subclass for AnchorEntity needs:

    var anchor = AnchorEntity()

    func session(_ session: ARSession, didAdd anchors: [ARAnchor]) {

        guard let faceAnchor = anchors.first as? ARFaceAnchor
        else { return }

        arView.session.add(anchor: faceAnchor)      // ARKit Session

        self.anchor = AnchorEntity(anchor: faceAnchor)
        anchor.addChild(model)
        arView.scene.anchors.append(self.anchor)    // RealityKit Scene
    }

RealityKit gives you the ability to reanchor your model. Imagine a scenario where you start your scene with image or body tracking but need to continue with world tracking.
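A minimal sketch of such a switch, assuming `anchor` is an AnchorEntity that currently follows an image or a body (the function name is hypothetical):

```swift
import RealityKit

/// Hypothetical helper: detaches an anchor entity from its tracked target
/// and pins it to its current location in world space.
func continueInWorldSpace(_ anchor: AnchorEntity) {

    // Current world-space pose of the anchor entity
    let worldMatrix = anchor.transformMatrix(relativeTo: nil)

    // Switch the anchoring target; children keep their place in the scene
    anchor.reanchor(.world(transform: worldMatrix),
                    preservingWorldTransform: true)
}
```

After this call the content no longer follows the image or the body and stays where it was at the moment of reanchoring.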

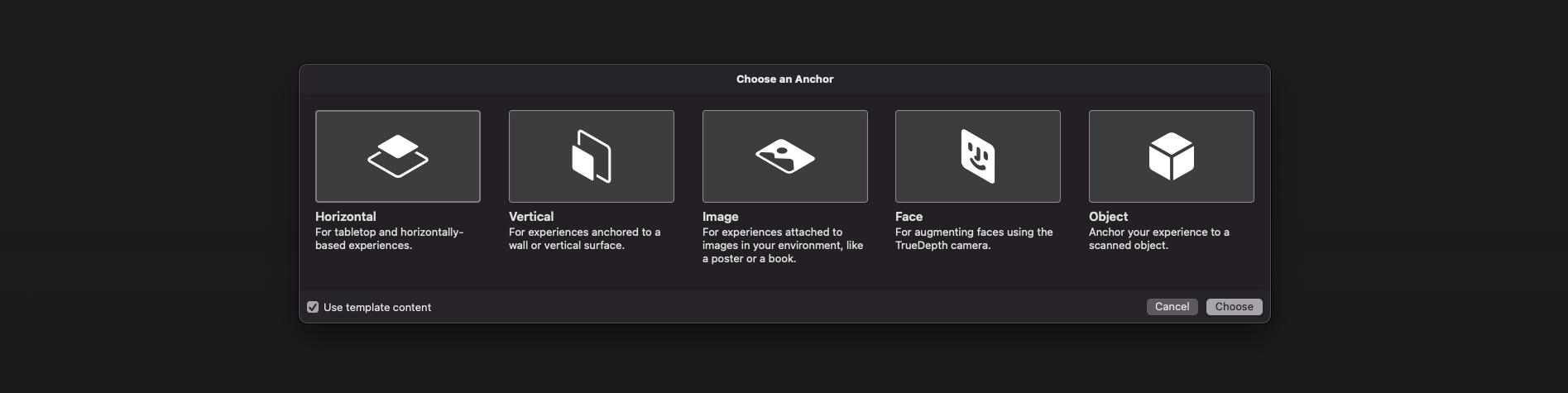

iOS Reality Composer 1.5 anchors

At the moment (June 2024) iOS Reality Composer has just 4 types of AnchorEntities:


    // 1a
    AnchorEntity(plane: .horizontal)

    // 1b
    AnchorEntity(plane: .vertical)

    // 2
    AnchorEntity(.image(group: "GroupName", name: "forModel"))

    // 3
    AnchorEntity(.face)

    // 4
    AnchorEntity(.object(group: "GroupName", name: "forObject"))

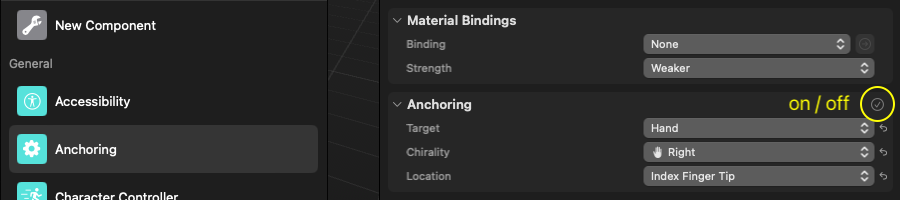

visionOS Reality Composer Pro 2.0 anchors

The paradox of using anchors in RealityKit for visionOS is that anchors are optional there, not a required element of the scene as they are in iOS. This works the same way as in an ARKit + SceneKit powered app. So, there is no rule without an exception.

At the moment (June 2024) visionOS Reality Composer Pro has just 5 types of AnchorEntities:


    // 1
    AnchorEntity(.world(transform: .init()))

    // 2a
    AnchorEntity(.plane(.horizontal, classification: .floor, minimumBounds: [0.1,0.1]))

    // 2b
    AnchorEntity(.plane(.vertical, classification: .wall, minimumBounds: [0.1,0.1]))

    // 3
    AnchorEntity(.head)

    // 4a
    AnchorEntity(.hand(.left, location: .palm))

    // 4b
    AnchorEntity(.hand(.right, location: .thumbTip))

    // 5
    AnchorEntity(.referenceObject(from: .init(name: "string")))

AR USD Schemas

And of course, I should say a few words about preliminary anchors. There are 3 preliminary anchoring types (as of July 2022) for those who prefer Python scripting for USDZ models: plane, image and face preliminary anchors. Look at this code snippet to see how such a schema is declared in a USD file.

    def Cube "ImageAnchoredBox" (prepend apiSchemas = ["Preliminary_AnchoringAPI"])
    {
        uniform token preliminary:anchoring:type = "image"
        rel preliminary:imageAnchoring:referenceImage = <ImageReference>

        def Preliminary_ReferenceImage "ImageReference"
        {
            uniform asset image = @somePicture.jpg@
            uniform double physicalWidth = 45
        }
    }

If you want to know more about AR USD Schemas, read this story on Medium.

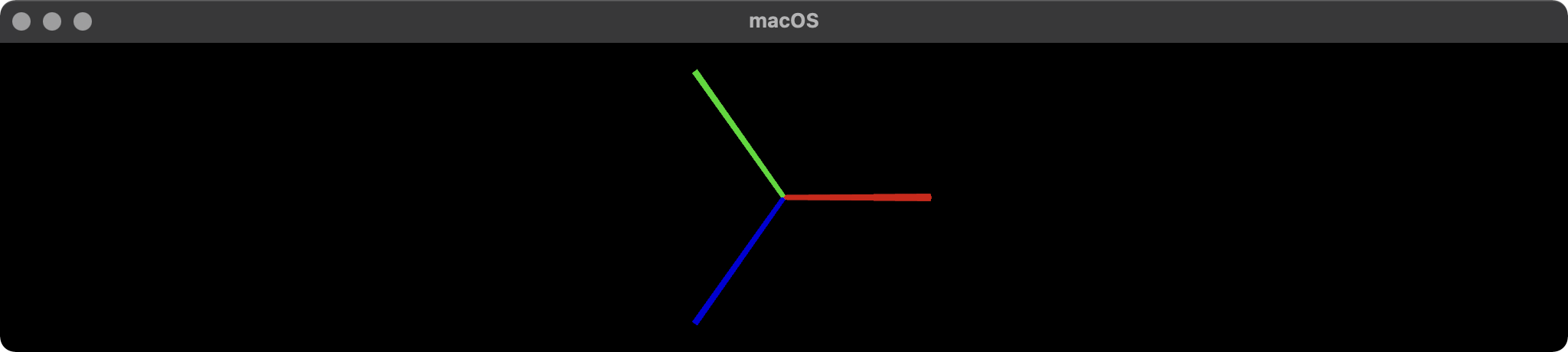

Visualizing AnchorEntity

Here's an example of how to visualize anchors in RealityKit (mac version).

    import AppKit
    import RealityKit

    class ViewController: NSViewController {

        @IBOutlet var arView: ARView!
        var model = Entity()
        let anchor = AnchorEntity()

        // Builds three thin boxes (red, green, blue) for the X, Y and Z axes
        fileprivate func visualAnchor() -> Entity {

            let colors: [SimpleMaterial.Color] = [.red, .green, .blue]

            for index in 0...2 {

                let box: MeshResource = .generateBox(size: [0.20, 0.005, 0.005])
                let material = UnlitMaterial(color: colors[index])
                let entity = ModelEntity(mesh: box, materials: [material])

                if index == 0 {
                    entity.position.x += 0.1

                } else if index == 1 {
                    entity.transform = Transform(pitch: 0, yaw: 0, roll: .pi/2)
                    entity.position.y += 0.1

                } else if index == 2 {
                    entity.transform = Transform(pitch: 0, yaw: -.pi/2, roll: 0)
                    entity.position.z += 0.1
                }
                self.model.addChild(entity)
            }
            // Scale the whole gizmo once, after the loop,
            // so the factor is not applied three times
            model.scale *= 1.5
            return self.model
        }

        override func awakeFromNib() {
            anchor.addChild(self.visualAnchor())
            arView.scene.addAnchor(anchor)
        }
    }


About ArAnchors in ARCore

At the end of my post, I would like to talk about the five types of anchors used in ARCore 1.40+. Google's official documentation says the following about anchors: "ArAnchor describes a fixed location and orientation in the real world". ARCore anchors work similarly to ARKit anchors in many respects.

Let's take a look at the ArAnchor types:

Local anchors

- are stored with the app locally, and are valid only for that instance of the app. The user must be physically at the location where they are placing the anchor. An anchor can be attached to a Trackable or to the ARCore Session.

Cloud Anchors

- are stored in Google Cloud and may be shared between app instances. The user must be physically at the location where they are placing the anchor. Thanks to the Persistent Cloud Anchors API, you can create a cloud anchor that can be resolved for 1 to 365 days after creation. Cloud Anchors can be resolved by multiple users to establish a common frame of reference across users and their devices.

Geospatial anchors

- are based on geodetic latitude, longitude, and altitude, plus Google's Visual Positioning System data, to provide a precise location almost anywhere in the world. These anchors may be shared between app instances. The user may place an anchor from a remote location as long as the app is connected to the internet and able to use the VPS.

Terrain anchors

- are a subtype of Geospatial anchors that let you place AR objects using just latitude and longitude, leveraging information from Google Maps to automatically find the precise altitude above the ground.

Rooftop anchors

- are part of the Streetscape Geometry API and Scene Semantics API, which provide the geometry of buildings and terrain in a scene. The geometry can be used for occlusion, rendering, or placing AR content via hit-tests (up to 100 meters). Streetscape Geometry data is obtained through Google Street View imagery. As the name suggests, Rooftop anchors are placed on rooftops.

These Kotlin code snippets show how to use Geospatial anchors and Rooftop anchors.

Geospatial anchors

    fun configureSession(session: Session) {
        session.configure(
            session.config.apply {
                geospatialMode = Config.GeospatialMode.ENABLED
            }
        )
    }

    val earth = session?.earth ?: return
    if (earth.trackingState != TrackingState.TRACKING) { return }

    // Drop the previous anchor before creating a new one
    earthAnchor?.detach()

    val altitude = earth.cameraGeospatialPose.altitude - 1
    val qx = 0f; val qy = 0f; val qz = 0f; val qw = 1f

    earthAnchor = earth.createAnchor(latLng.latitude,
                                     latLng.longitude,
                                     altitude,
                                     qx, qy, qz, qw)

Rooftop anchors

    streetscapeGeometryMode = Config.StreetscapeGeometryMode.ENABLED

    val streetscapeGeo = session.getAllTrackables(StreetscapeGeometry::class.java)
    streetscapeGeometryRenderer.render(render, streetscapeGeo)

    val centerHits = frame.hitTest(centerCoords[0], centerCoords[1])

    // Take the first hit that lands on a building
    val hit = centerHits.firstOrNull {
        val trackable = it.trackable
        trackable is StreetscapeGeometry &&
            trackable.type == StreetscapeGeometry.Type.BUILDING
    } ?: return

    val transformedPose = ObjectPlacementHelper.createStarPose(hit.hitPose)
    val anchor = hit.trackable.createAnchor(transformedPose)
    starAnchors.add(anchor)

    val earth = session?.earth ?: return
    val geospatialPose = earth.getGeospatialPose(transformedPose)

    earth.resolveAnchorOnRooftopAsync(geospatialPose.latitude,
                                      geospatialPose.longitude,
                                      0.0,
                                      transformedPose.qx(),
                                      transformedPose.qy(),
                                      transformedPose.qz(),
                                      transformedPose.qw() ) { anchor, state ->
        if (!state.isError) {
            balloonAnchors.add(anchor)
        }
    }