This seems like a very important issue for this library, and so far I haven't seen a decisive answer, although for the most part the answer seems to be 'No.'

Right now, any method that uses the transformer API in sklearn returns a NumPy array as its result. Usually this is fine, but if you're chaining together a multi-step process that expands or reduces the number of columns, the lack of a clean way to track how the output columns relate to the original column labels makes it hard to use this part of the library to its fullest.

As an example, here's a snippet I used recently, where the inability to map the new columns back to the ones originally in the dataset was a big drawback:

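```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# `train` and `test` are pandas DataFrames loaded earlier (not shown).
numeric_columns = train.select_dtypes(include=np.number).columns.tolist()
# `np.object` was removed in NumPy 1.24; the string "object" selects the same dtype.
cat_columns = train.select_dtypes(include="object").columns.tolist()

# Numeric columns: fill gaps with the median, then standardize.
numeric_pipeline = make_pipeline(SimpleImputer(strategy='median'), StandardScaler())
# Categorical columns: fill gaps with the most frequent value, then one-hot encode.
cat_pipeline = make_pipeline(SimpleImputer(strategy='most_frequent'), OneHotEncoder())

transformers = [
    ('num', numeric_pipeline, numeric_columns),
    ('cat', cat_pipeline, cat_columns),
]

combined_pipe = ColumnTransformer(transformers)

# Both results are bare NumPy arrays (or a sparse matrix): the column labels are gone.
train_clean = combined_pipe.fit_transform(train)
test_clean = combined_pipe.transform(test)
```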

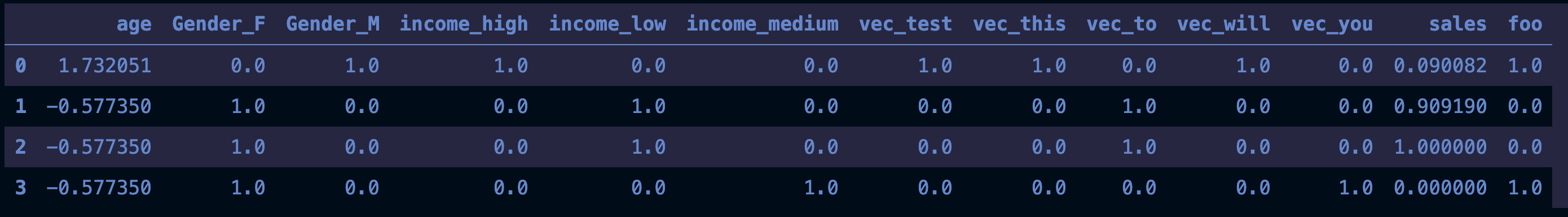

In this example I split up my dataset with the ColumnTransformer and then added columns with the OneHotEncoder, so the arrangement of my columns is no longer the same as what I started with.

I could just as easily end up with a different arrangement if I used other modules that share the same API, such as OrdinalEncoder, SelectKBest, etc.

If you're doing multi-step transformations, is there a way to consistently see how your new columns relate to your original dataset?

There's an extensive discussion about it here, but I don't think anything has been finalized yet.
