This is a very useful feature when your network has more than one output. Here's a completely made-up example: imagine you want to build some random convolutional network that you can ask two questions of: does the input image contain a cat, and does it contain a car?

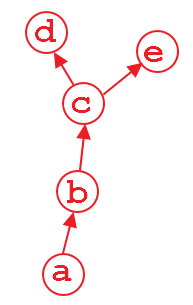

One way of doing this is to have a network that shares the convolutional layers but has two parallel classification branches following them (forgive my terrible ASCII graph, but this is supposed to be three conv layers, followed by two parallel branches of three fully connected layers each, one for cats and one for cars):

```
                    |-- FC - FC - FC - cat?
Conv - Conv - Conv -|
                    |-- FC - FC - FC - car?
```

When training the network on a picture that we want to run through both branches, we can proceed in several ways. First (and this is probably the best option here, which shows how bad the example is), we simply compute a loss for each assessment, sum the two losses, and then backpropagate once.
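A minimal sketch of the summed-loss approach, assuming a simplified stand-in for the diagram: one shared conv layer instead of three, one linear layer per head instead of three, and 8x8 RGB inputs. The names `trunk`, `cat_head`, `car_head`, `is_cat` and `is_car` are hypothetical and only serve the illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical, simplified two-headed network: a shared conv "trunk"
# and one linear layer per head (assumes 8x8 RGB inputs).
trunk = nn.Sequential(nn.Conv2d(3, 4, kernel_size=3, padding=1), nn.ReLU(), nn.Flatten())
cat_head = nn.Linear(4 * 8 * 8, 1)
car_head = nn.Linear(4 * 8 * 8, 1)
criterion = nn.BCEWithLogitsLoss()

x = torch.randn(2, 3, 8, 8)              # dummy batch of 2 images
is_cat = torch.tensor([[1.0], [0.0]])    # dummy labels
is_car = torch.tensor([[0.0], [1.0]])

features = trunk(x)                      # shared computation, run once
loss = criterion(cat_head(features), is_cat) + criterion(car_head(features), is_car)
loss.backward()                          # a single backward pass; the graph is freed afterwards

# Both heads and the shared trunk have received gradients.
print(cat_head.weight.grad is not None, car_head.weight.grad is not None, trunk[0].weight.grad is not None)
```

Because both losses share one graph and one backward call, no graph ever needs to be retained here.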

However, there's another scenario, in which we want to do this sequentially: first we backprop through one branch, and then through the other (I have had this use case before, so it is not completely made up). In that case, calling `.backward()` on the first branch's loss frees the intermediate buffers that autograd saved for every part of the graph it traversed, including the shared convolutional layers. When we then try to backprop through the second branch, the shared part of its graph (the convolutional computations are the only ones the two branches have in common) no longer has those buffers, and PyTorch raises an error along the lines of "Trying to backward through the graph a second time".
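The failure can be reproduced with a small sketch. It assumes the same hypothetical, simplified setup as a summed-loss version would use (one shared conv layer, one linear layer per head, 8x8 RGB inputs); `trunk`, `cat_head`, `car_head` and the label tensors are illustrative names, not anything from PyTorch itself.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical two-headed setup: shared conv trunk, one linear layer per head.
trunk = nn.Sequential(nn.Conv2d(3, 4, kernel_size=3, padding=1), nn.ReLU(), nn.Flatten())
cat_head = nn.Linear(4 * 8 * 8, 1)
car_head = nn.Linear(4 * 8 * 8, 1)
criterion = nn.BCEWithLogitsLoss()
x = torch.randn(2, 3, 8, 8)
is_cat = torch.tensor([[1.0], [0.0]])
is_car = torch.tensor([[0.0], [1.0]])

features = trunk(x)
loss_cat = criterion(cat_head(features), is_cat)
loss_car = criterion(car_head(features), is_car)

loss_cat.backward()          # frees the saved buffers on the cat path, including the trunk's

try:
    loss_car.backward()      # needs the trunk's (already freed) buffers again
    second_pass_failed = False
except RuntimeError as err:
    second_pass_failed = True
    print("RuntimeError:", err)
```

The car head's own layer was never traversed by the first pass, so the error only surfaces once the second pass reaches the shared trunk.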

In these cases, we can solve the problem by simply retaining the graph on the first backward pass, i.e. passing `retain_graph=True`. The graph is then not freed by that pass; it will only be freed by the first subsequent backward pass that does not ask to retain it.
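A sketch of the sequential version with `retain_graph=True`, again assuming a hypothetical, simplified two-headed model (one shared conv layer, one linear layer per head, 8x8 RGB inputs). It also shows that once a pass runs without `retain_graph=True`, the shared buffers are gone again.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical two-headed setup: shared conv trunk, one linear layer per head.
trunk = nn.Sequential(nn.Conv2d(3, 4, kernel_size=3, padding=1), nn.ReLU(), nn.Flatten())
cat_head = nn.Linear(4 * 8 * 8, 1)
car_head = nn.Linear(4 * 8 * 8, 1)
criterion = nn.BCEWithLogitsLoss()
x = torch.randn(2, 3, 8, 8)
is_cat = torch.tensor([[1.0], [0.0]])
is_car = torch.tensor([[0.0], [1.0]])

features = trunk(x)
loss_cat = criterion(cat_head(features), is_cat)
loss_car = criterion(car_head(features), is_car)

loss_cat.backward(retain_graph=True)  # keep the saved buffers alive for the next pass
loss_car.backward()                   # last pass: no retain, so the buffers are freed now

conv = trunk[0]
print(conv.weight.grad.shape)         # torch.Size([4, 3, 3, 3])

# The non-retaining pass freed the shared trunk's buffers, so going
# back through the trunk a third time fails.
try:
    loss_cat.backward()
    third_pass_failed = False
except RuntimeError:
    third_pass_failed = True
```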

EDIT: If you retain the graph on every backward pass, no backward pass will ever free the saved buffers of the implicit graph attached to the output variables; they stay in memory for as long as those outputs are referenced. There might be a use case for this as well, but I cannot think of one. So, in general, you should make sure that the last backward pass frees the memory by not retaining the graph.

As for what happens with multiple backward passes: as you guessed, PyTorch accumulates gradients by adding them in place to each variable's/parameter's `.grad` attribute.
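A tiny scalar sketch of that accumulation, assuming a made-up "shared" computation `w * w` feeding two hypothetical branches `loss_a` and `loss_b`; the numbers are chosen so the gradients can be checked by hand.

```python
import torch

# Hypothetical scalar example: one shared computation feeding two "branches".
w = torch.tensor(2.0, requires_grad=True)
shared = w * w            # shared part: d(shared)/dw = 2 * w = 4
loss_a = 3 * shared       # branch A: d(loss_a)/dw = 3 * 4 = 12
loss_b = 5 * shared       # branch B: d(loss_b)/dw = 5 * 4 = 20

loss_a.backward(retain_graph=True)   # keep the shared buffers for the next pass
first_grad = w.grad.item()
print(first_grad)         # 12.0

loss_b.backward()                    # adds into w.grad instead of overwriting it
second_grad = w.grad.item()
print(second_grad)        # 32.0 (12 + 20)

# Nothing resets .grad automatically; optimizers do it via zero_grad().
w.grad = None
```

This is also why the sequential two-branch update ends up with the same gradients as summing the losses first.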

This can be very useful: it means that looping over a batch, processing one sample at a time and accumulating the gradients before taking a single optimizer step, yields the same update as a full batched update (which also just sums up the per-sample gradients), provided each per-sample loss is scaled the way the batched loss is (for example, divided by the batch size when the batched loss is a mean). While a fully batched update can be parallelized more, and is thus generally preferable, there are cases where batched computation is either very, very difficult to implement or simply not possible. Using this accumulation, however, we can still rely on some of the nice stabilizing properties that batching brings, if not on the performance gain.
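A minimal sketch checking that per-sample accumulation matches a batched pass, assuming a hypothetical tiny `nn.Linear` model, random data, and `nn.MSELoss` with its default mean reduction (hence the division by the batch size).

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

model = nn.Linear(5, 1)                  # hypothetical tiny model
criterion = nn.MSELoss()                 # reduction='mean' (the default)
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
x = torch.randn(8, 5)                    # dummy batch of 8 samples
y = torch.randn(8, 1)

# 1) Full batched backward pass over all 8 samples at once.
optimizer.zero_grad()
criterion(model(x), y).backward()
batched_grad = model.weight.grad.clone()

# 2) One sample at a time; each loss is divided by the batch size so the
#    accumulated sum of gradients equals the gradient of the batch mean.
optimizer.zero_grad()
n = x.shape[0]
for i in range(n):
    loss_i = criterion(model(x[i:i + 1]), y[i:i + 1]) / n
    loss_i.backward()                    # adds into model.weight.grad
accumulated_grad = model.weight.grad.clone()

print(torch.allclose(batched_grad, accumulated_grad, atol=1e-6))  # True
optimizer.step()                         # one update using the accumulated gradients
```

The equivalence only holds for layers that treat samples independently; a layer such as batch normalization, which computes statistics across the batch, behaves differently when fed one sample at a time.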

Regarding `retain_graph=True`: you could just do `loss = loss1 + loss2`, then `loss.backward()`. – Bacolod