I'm two years late in answering, so please consider this despite only a few up-votes.

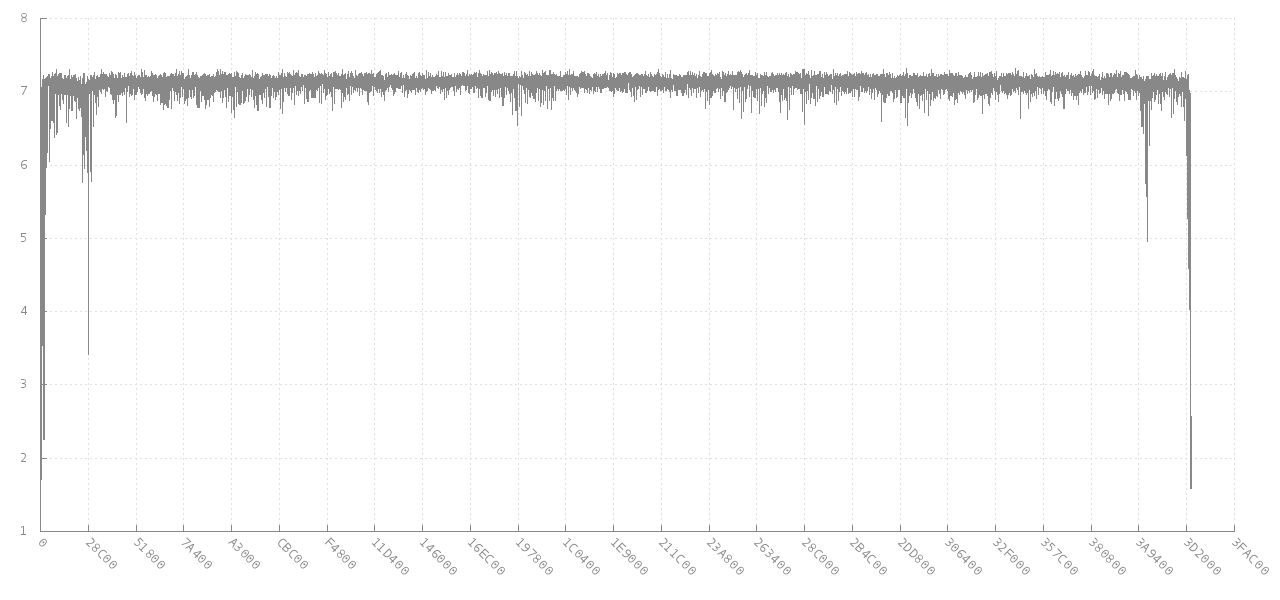

Short answer: use my 1st and 3rd bold equations below to get what most people mean when they say "entropy" of a file in bits. Use just the 1st equation if you want Shannon's H, which is actually entropy per symbol, as he stated 13 times in his paper (something most people are not aware of). Some online entropy calculators use this one, but Shannon's H is "specific entropy", not "total entropy", and that has caused a lot of confusion. Use the 1st and 2nd equations if you want an answer between 0 and 1, which is normalized entropy per symbol (it's not bits/symbol, but a true statistical measure of the "entropic nature" of the data, obtained by letting the data choose its own log base instead of arbitrarily assigning 2, e, or 10).

There are 4 types of entropy for a file (data) that is N symbols long with n unique types of symbols. But keep in mind that by knowing the contents of a file, you know the state it is in and therefore S=0. To be precise, if you have a source that generates a lot of data that you have access to, then you can calculate the expected future entropy/character of that source. If you use the following on a single file, it is more accurate to say you are estimating the expected entropy of other files from that source.

- Shannon (specific) entropy H = -1*sum(count_i / N * log(count_i / N))

where count_i is the number of times symbol i occurred in N.

Units are bits/symbol if log is base 2, nats/symbol if natural log.

- Normalized specific entropy: H / log(n)

Units are entropy/symbol. Ranges from 0 to 1. 1 means each symbol occurred equally often and near 0 is where all symbols except 1 occurred only once, and the rest of a very long file was the other symbol. The log is in the same base as the H.

- Absolute entropy S = N * H

Units are bits if log is base 2, nats if natural log.

- Normalized absolute entropy S = N * H / log(n)

Unit is "entropy", varies from 0 to N. The log is in the same base as the H.
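The four quantities above can be computed in a few lines. This is only a minimal sketch: the function name `entropies` and the dictionary keys are my own choices for illustration, and the symbols are taken to be whatever items you iterate over (bytes here).

```python
import math
from collections import Counter

def entropies(data, base=2):
    """Return the four entropies described above for a sequence of symbols."""
    counts = Counter(data)
    N = len(data)      # total number of symbols
    n = len(counts)    # number of distinct symbols that actually occur
    # Shannon (specific) entropy, per symbol
    H = -sum(c / N * math.log(c / N, base) for c in counts.values())
    # Normalized specific entropy; taken as 0 when there are fewer than 2 symbol types
    H_norm = H / math.log(n, base) if n > 1 else 0.0
    return {"H": H, "H_norm": H_norm, "S": N * H, "S_norm": N * H_norm}

result = entropies(b"aabbbbcc")
print(result)
```

For `b"aabbbbcc"` the frequencies are 1/4, 1/2, 1/4, so H works out to 1.5 bits/symbol and S to 12 bits.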

Although the last one is the truest "entropy", the first one (Shannon entropy H) is what all books call "entropy" without (the needed IMHO) qualification. Most do not clarify (like Shannon did) that it is bits/symbol or entropy per symbol. Calling H "entropy" is speaking too loosely.

For files of bits with equal frequency of each symbol (and log base 2): H = 1, so S = N * H = N. This is the case for most large files of bits. The entropy calculation does not do any compression on the data and is therefore completely ignorant of any patterns, so 000000111111 has the same H and S as 010111101000 (6 1's and 6 0's in both cases).
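A quick check of that last claim, as a sketch (the helper name `shannon_h` is just for illustration): both strings have six 0's and six 1's, so the symbol counts, and therefore H and S, are identical even though one is obviously more "ordered".

```python
import math
from collections import Counter

def shannon_h(symbols):
    """Shannon entropy in bits/symbol, based only on symbol counts."""
    N = len(symbols)
    return -sum(c / N * math.log2(c / N) for c in Counter(symbols).values())

ordered = "000000111111"
shuffled = "010111101000"

h_ordered = shannon_h(ordered)
h_shuffled = shannon_h(shuffled)
s_ordered = len(ordered) * h_ordered      # S = N * H
s_shuffled = len(shuffled) * h_shuffled

print(h_ordered, h_shuffled, s_ordered, s_shuffled)
```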

Like others have said, using a standard compression routine like gzip and dividing the compressed size by the original size will give a better measure of the amount of pre-existing "order" in the file, but that measure is biased toward data that happens to fit the compression scheme better. There's no general purpose perfectly optimized compressor that we can use to define an absolute "order".
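As a rough sketch of the compression-ratio idea: I'm assuming Python's `zlib` module here as a stand-in for gzip (both use DEFLATE), and the helper name `compression_ratio` and the test data are made up for illustration.

```python
import random
import zlib

def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (smaller = more 'order' found)."""
    return len(zlib.compress(data, 9)) / len(data)

rng = random.Random(0)  # fixed seed so the run is repeatable
patterned = b"01" * 5000                                   # highly repetitive
noisy = bytes(rng.getrandbits(8) for _ in range(10_000))   # pseudo-random bytes

ratio_patterned = compression_ratio(patterned)
ratio_noisy = compression_ratio(noisy)

print(ratio_patterned, ratio_noisy)
```

The repetitive input shrinks to a small fraction of its size, while the pseudo-random input barely shrinks at all (it can even grow slightly because of container overhead). The exact numbers depend on the compressor, which is the bias mentioned above.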

Another thing to consider: H changes if you change how you express the data. H will be different if you select different groupings of bits (bits, nibbles, bytes, or hex). So you divide by log(n) where n is the number of unique symbols in the data (2 for binary, 256 for bytes) and H will range from 0 to 1 (this is normalized intensive Shannon entropy in units of entropy per symbol). But technically if only 100 of the 256 types of bytes occur, then n=100, not 256.
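To see how the grouping changes H, here is a small sketch that reads the same data as bytes, nibbles, and bits. The helper names (`shannon_h`, `as_nibbles`, `as_bits`) and the sample data are assumptions chosen to make the effect obvious.

```python
import math
from collections import Counter

def shannon_h(symbols):
    """Shannon entropy in bits/symbol for any sequence of hashable symbols."""
    N = len(symbols)
    return -sum(c / N * math.log2(c / N) for c in Counter(symbols).values())

def as_nibbles(data: bytes):
    """Split each byte into its high and low 4-bit halves."""
    return [x for b in data for x in (b >> 4, b & 0x0F)]

def as_bits(data: bytes):
    """Split each byte into 8 bits, most significant first."""
    return [(b >> i) & 1 for b in data for i in range(7, -1, -1)]

data = b"\x0f" * 100  # the same byte repeated

h_bytes = shannon_h(list(data))          # only one byte value occurs
h_nibbles = shannon_h(as_nibbles(data))  # nibbles 0x0 and 0xF, equally often
h_bits = shannon_h(as_bits(data))        # 0's and 1's, equally often

print(h_bytes, h_nibbles, h_bits)
```

Read as bytes, only one symbol occurs, so H is 0. Read as nibbles or bits, two symbols occur equally often, so H is 1 bit/symbol.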

H is an "intensive" entropy, i.e. it is per symbol, which is analogous to specific entropy in physics (entropy per kg or per mole). The regular "extensive" entropy of a file, analogous to physics' S, is S=N*H where N is the number of symbols in the file. H would be exactly analogous to a portion of an ideal gas volume. Information entropy can't simply be made exactly equal to physical entropy in a deeper sense because physical entropy allows for "ordered" as well as disordered arrangements: physical entropy comes out larger than a completely random entropy (such as that of a compressed file). One aspect of the difference: for an ideal gas there is an additional 5/2 factor to account for this: S = k * N * (H+5/2) where H = possible quantum states per molecule = (xp)^3/hbar * 2 * sigma^2, where x=width of the box, p=total non-directional momentum in the system (calculated from kinetic energy and mass per molecule), and sigma=0.341 in keeping with the uncertainty principle, giving only the number of possible states within 1 std dev.

A little math gives a shorter form of normalized extensive entropy for a file:

S=N * H / log(n) = sum(count_i*log(N/count_i))/log(n)
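The "little math" is just substituting the definition of H and cancelling N. Written out, with c_i standing for count_i and the same log base throughout:

```latex
\begin{aligned}
S &= \frac{N \, H}{\log n}
   = -\frac{N}{\log n} \sum_i \frac{c_i}{N} \log\frac{c_i}{N} \\
  &= -\frac{1}{\log n} \sum_i c_i \log\frac{c_i}{N}
   = \frac{1}{\log n} \sum_i c_i \log\frac{N}{c_i}
\end{aligned}
```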

Units of this are "entropy" (which is not really a unit). It is normalized to be a better universal measure than the "entropy" units of N * H. But it also should not be called "entropy" without clarification, because the normal historical convention is to mistakenly call H "entropy" (which is contrary to the clarifications made in Shannon's text).

Note: I need the whole thing to make assumptions on the file's contents: (plaintext, markup, compressed or some binary, ...)... You just asked for godlike magic, good luck with developing provably optimal data compression. – Catarinacatarrh