I am using Apache Oozie 4.3.0 with Hadoop 2.7.3.

I have developed a very simple Oozie workflow containing a single Sqoop action that exports system events to a MySQL table.

<workflow-app name="WorkflowWithSqoopAction" xmlns="uri:oozie:workflow:0.1">

<start to="sqoopAction"/>

<action name="sqoopAction">

<sqoop xmlns="uri:oozie:sqoop-action:0.2">

<job-tracker>${jobTracker}</job-tracker>

<name-node>${nameNode}</name-node>

<command>export --connect jdbc:mysql://localhost/airawat --username devUser --password myPwd --table eventsgranularreport --direct --enclosed-by '\"' --export-dir /user/hive/warehouse/eventsgranularreport </command>

</sqoop>

<ok to="end"/>

<error to="killJob"/>

</action>

<kill name="killJob">

<message>"Killed job due to error: ${wf:errorMessage(wf:lastErrorNode())}"</message>

</kill>

<end name="end" />

</workflow-app>

I have the application deployed in HDFS as follows:

hdfs dfs -ls -R /oozieProject | awk '{ print $8 }'

/oozieProject/workflowSqoopAction

/oozieProject/workflowSqoopAction/README.md

/oozieProject/workflowSqoopAction/job.properties

/oozieProject/workflowSqoopAction/workflow.xml

hdfs dfs -ls -d /oozieProject

drwxr-xr-x - sergio supergroup 0 2017-04-15 14:08 /oozieProject

I have included the following configuration in the job.properties:

#*****************************

# job.properties

#*****************************

nameNode=hdfs://localhost:9000

jobTracker=localhost:8032

queueName=default

mapreduce.job.user.name=sergio

user.name=sergio

oozie.libpath=${nameNode}/oozieProject/share/lib

oozie.use.system.libpath=true

oozie.wf.rerun.failnodes=true

oozieProjectRoot=${nameNode}/oozieProject

appPath=${oozieProjectRoot}/workflowSqoopAction

oozie.wf.application.path=${appPath}

I then send the job to the Oozie server and start executing it:

oozie job -oozie http://localhost:11000/oozie -config /home/sergio/git/hadoop_samples/hadoop_examples/src/main/java/org/sanchez/sergio/hadoop_examples/oozie/workflowSqoopAction/job.properties -submit

oozie job -oozie http://localhost:11000/oozie -start 0000001-170415112256550-oozie-serg-W
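The job's state and log can also be read from the command line rather than the web console. A minimal sketch, assuming the same server URL and workflow ID as above; the `oozie` CLI uses the `OOZIE_URL` environment variable as the default for `-oozie`, and the `JOB_ID` variable is only an illustrative name:

```shell
# Default server URL for the oozie CLI, so -oozie can be omitted.
export OOZIE_URL="http://localhost:11000/oozie"

# Workflow ID returned by the -submit call above (illustrative variable name).
JOB_ID="0000001-170415112256550-oozie-serg-W"

if command -v oozie >/dev/null 2>&1; then
  # Status of the workflow and of each action, including error codes such as JA017.
  oozie job -info "$JOB_ID"
  # Server-side log for this workflow.
  oozie job -log "$JOB_ID"
else
  echo "oozie CLI not found on PATH" >&2
fi
```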

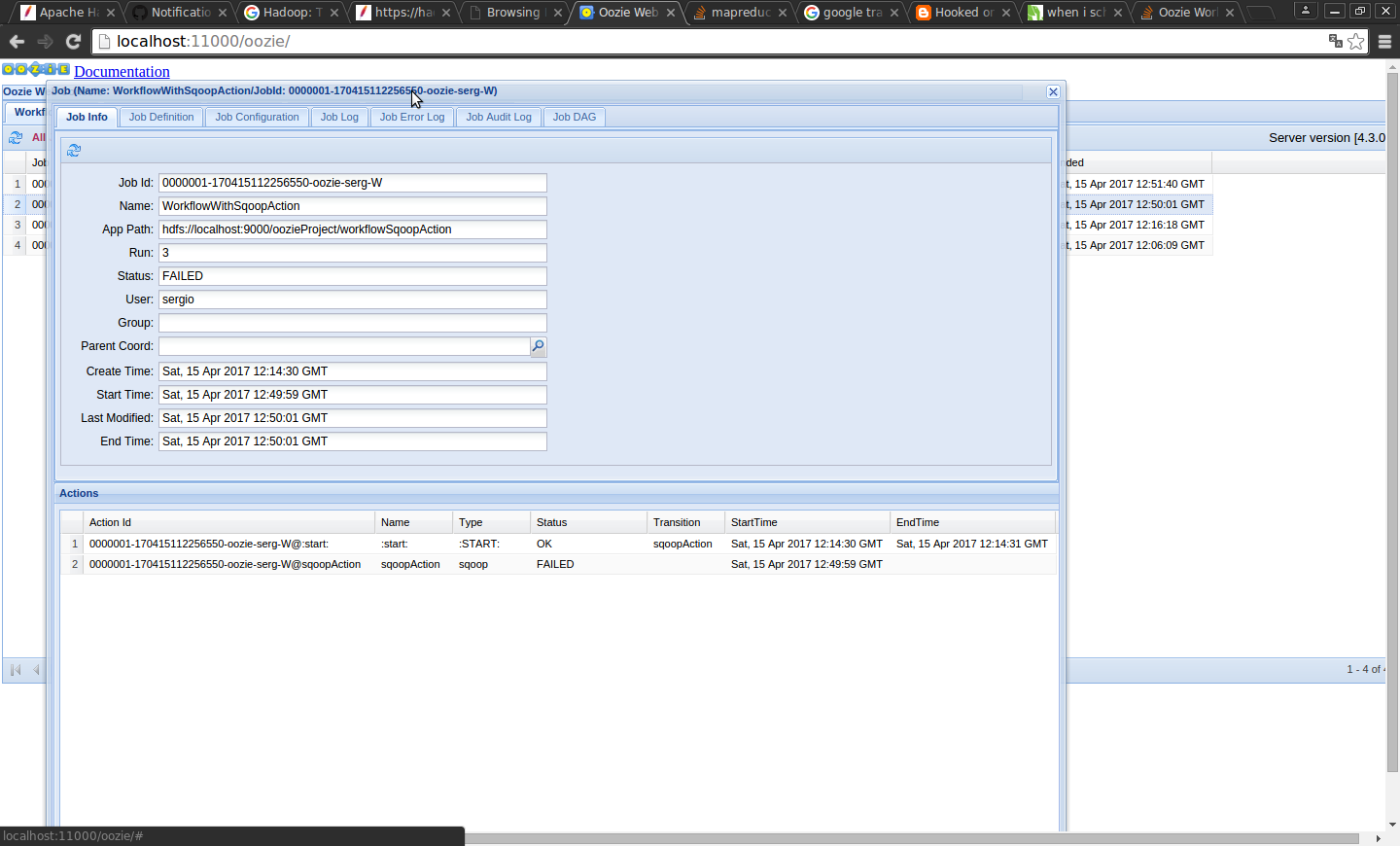

Soon after in the web console of Oozie it is seen that work failed:

The following error message appears in the sqoopAction:

JA017: Could not lookup launched hadoop Job ID [job_local245204272_0008] which was associated with action [0000001-170415112256550-oozie-serg-W@sqoopAction]. Failing this action!
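One detail in the message is the `job_local` prefix of the Hadoop job ID. As far as I know, IDs of that shape are generated by Hadoop's LocalJobRunner, whereas jobs submitted to a YARN cluster get IDs of the form `job_<clusterTimestamp>_<sequence>`. A minimal sketch of that check (the function name `classify_job_id` is hypothetical and for illustration only):

```shell
# Classify a Hadoop job ID by its prefix.
# Assumption: LocalJobRunner IDs start with "job_local";
# cluster IDs look like job_<clusterTimestamp>_<sequence>.
classify_job_id() {
  case "$1" in
    job_local*)        echo "local" ;;
    job_[0-9]*_[0-9]*) echo "cluster" ;;
    *)                 echo "unknown" ;;
  esac
}

classify_job_id "job_local245204272_0008"   # prints: local
```

If the ID is classified as local, the launcher job may never have been submitted to the ResourceManager at all, which would be consistent with Oozie being unable to look it up afterwards.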

Can anyone help me understand what causes this error?

Running daemons:

jps

2576

6130 ResourceManager

3267 DataNode

10102 JobHistoryServer

3129 NameNode

24650 Jps

6270 NodeManager

3470 SecondaryNameNode

4190 Bootstrap

application_local245204272_0008 (yes, the "job" prefix is a legacy of Hadoop 1; it is still used by the legacy HistoryServer and by Oozie, but YARN uses the "application" prefix). – Incumber