I have Hadoop installed in the following location:

/usr/local/hadoop$

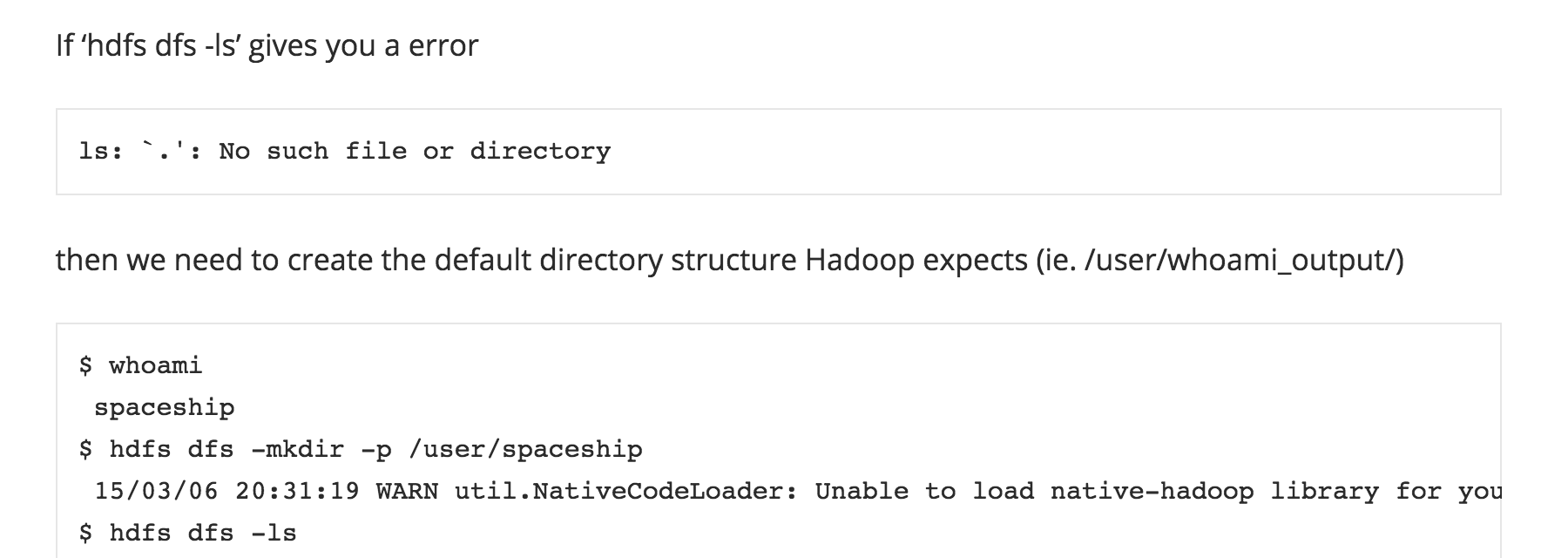

Now I want to list the files inside the DFS. The command I used is:

hduser@ubuntu:/usr/local/hadoop$ bin/hadoop dfs -ls

This listed the files in the DFS:

Found 3 items

drwxr-xr-x - hduser supergroup 0 2014-03-20 03:53 /user/hduser/gutenberg

drwxr-xr-x - hduser supergroup 0 2014-03-24 22:34 /user/hduser/mytext-output

-rw-r--r-- 1 hduser supergroup 126 2014-03-24 22:30 /user/hduser/text.txt

Next, I ran the same command in a different way, without the bin/ prefix:

hduser@ubuntu:/usr/local/hadoop$ hadoop dfs -ls

It also gave me the same result.

Could someone please explain why both work, even though the first runs hadoop from the bin folder and the second does not? What is the difference between these two commands:

hduser@ubuntu:/usr/local/hadoop$ bin/hadoop dfs -ls

hduser@ubuntu:/usr/local/hadoop$ hadoop dfs -ls
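
In case it helps anyone answering, here is a rough sketch of how I could check what each form resolves to. It assumes a POSIX-style shell; `resolve_cmd` is just a hypothetical helper name I made up, not anything that ships with Hadoop. My understanding is that a name containing a slash is taken as a path relative to the current directory, while a bare name is searched for in the directories listed in `$PATH` (or may be an alias or shell function), so the two commands could well end up running the same script.

```shell
# resolve_cmd is a hypothetical helper: it reports how the shell would
# interpret a command name, without actually running the command.
resolve_cmd() {
    case "$1" in
        # A slash in the name means "use this path as given"; no $PATH search.
        */*) printf 'path: %s\n' "$1" ;;
        # A bare name is looked up by the shell; command -v prints what it found
        # (a full path for an executable, or the alias/function definition).
        *)   command -v "$1" || printf 'not found on PATH: %s\n' "$1" ;;
    esac
}

resolve_cmd bin/hadoop
resolve_cmd hadoop
```

If the second call prints something like a full path ending in `bin/hadoop`, that would suggest the Hadoop `bin` directory was added to `PATH` (for example in `~/.bashrc`) during installation, but I have not confirmed that on my machine.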