I've been working on how to make a SPA crawlable by Google, based on Google's instructions. There are quite a few general explanations, but I couldn't find a thorough step-by-step tutorial with actual examples anywhere. Having finished this, I would like to share my solution so that others can use it and possibly improve it further.

I am using MVC with Web API controllers and PhantomJS on the server side, and Durandal on the client side with push-state enabled. I also use Breezejs for client-server data interaction. I strongly recommend all of these, but I'll try to give a general enough explanation that will also help people using other platforms.

Before starting, please make sure you understand what Google requires, particularly the use of pretty and ugly URLs. Now let's see the implementation:

Client Side

On the client side you only have a single HTML page which interacts with the server dynamically via AJAX calls; that's what a SPA is about. All the a tags on the client side are created dynamically in my application; we'll see later how to make these links visible to Google's bot on the server. Each such a tag needs a pretty URL in its href attribute so that Google's bot will crawl it. You don't want the href to be followed when the user clicks the link (even though you do want the server to be able to parse it, as we'll see later), because we may not want a new page to load, only to make an AJAX call that gets some data to display in part of the page and to change the URL via JavaScript (e.g. using HTML5 pushState or with Durandal). So we have both an href attribute for Google and an onclick handler which does the job when the user clicks on the link. Now, since I use push-state I don't want any # in the URL shown in the browser; the #! appears only in the href, in the pretty-URL form Google expects. A typical a tag may look like this:

    <a href="http://www.xyz.com/#!/category/subCategory/product111" onClick="loadProduct('category','subCategory','product111')">see product111...</a>

'category' and 'subCategory' would probably be other phrases, such as 'communication' and 'phones' or 'computers' and 'laptops' for an electrical appliances store. Obviously there would be many different categories and sub categories. As you can see, the link points directly to the category, sub category and product, rather than passing them as extra parameters to a specific 'store' page such as http://www.xyz.com/store/category/subCategory/product111. This is because I prefer shorter and simpler links. It implies that there must not be a category with the same name as one of my 'pages', e.g. 'about'.
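To make the two URL shapes concrete, here is a minimal sketch of helpers that build both the crawlable href and the push-state path for a product. The function names and the xyz.com base URL are illustrative assumptions, not part of the original application.

```javascript
// Hypothetical helpers; names and base URL are illustrative only.

// Path shown in the browser after a click (push-state, no hash).
function productPath(category, subCategory, product) {
  return '/' + [category, subCategory, product].map(encodeURIComponent).join('/');
}

// href placed in the a tag for the crawler: the same path behind "#!".
function crawlableHref(base, category, subCategory, product) {
  return base.replace(/\/+$/, '') + '/#!' + productPath(category, subCategory, product);
}

console.log(crawlableHref('http://www.xyz.com', 'computers', 'laptops', 'product111'));
// prints "http://www.xyz.com/#!/computers/laptops/product111"
```

Keeping both forms behind one pair of functions makes it harder for the href and the onclick arguments to drift apart.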

I will not go into how to load the data via AJAX (the onclick part); search for it on Google, there are many good explanations. The only important thing I do want to mention is that when the user clicks on this link, I want the URL in the browser to look like this:

http://www.xyz.com/category/subCategory/product111

And this URL is not sent to the server! Remember, this is a SPA where all the interaction between the client and the server is done via AJAX, with no links at all. All 'pages' are implemented on the client side, and the different URL does not make a call to the server (the server does need to know how to handle these URLs in case they are used as external links from another site to your site; we'll see that later in the server side part). Now, this is handled wonderfully by Durandal. I strongly recommend it, but you can also skip this part if you prefer other technologies. If you do choose it, and you're also using MS Visual Studio Express 2012 for Web like me, you can install the Durandal Starter Kit, and there, in shell.js, use something like this:

    define(['plugins/router', 'durandal/app'], function (router, app) {
        return {
            router: router,
            activate: function () {
                router.map([
                    { route: '', title: 'Store', moduleId: 'viewmodels/store', nav: true },
                    { route: 'about', moduleId: 'viewmodels/about', nav: true }
                ])
                    .buildNavigationModel()
                    .mapUnknownRoutes(function (instruction) {
                        instruction.config.moduleId = 'viewmodels/store';
                        // for pretty-URLs, '#' already removed because of push-state, only ! remains
                        instruction.fragment = instruction.fragment.replace("!/", "");
                        return instruction;
                    });
                return router.activate({ pushState: true });
            }
        };
    });

There are a few important things to notice here:

- The first route (with route:'') is for the URL which has no extra data in it, i.e. http://www.xyz.com. In this page you load general data using AJAX. There may actually be no a tags at all in this page. You will want to add the following tag so that Google's bot will know what to do with it: <meta name="fragment" content="!">. This tag will make Google's bot transform the URL to www.xyz.com?_escaped_fragment_= which we'll see later.

- The 'about' route is just an example of a link to other 'pages' you may want in your web application.

- Now, the tricky part is that there is no 'category' route, and there may be many different categories, none of which have a predefined route. This is where mapUnknownRoutes comes in. It maps these unknown routes to the 'store' route and also removes any '!' from the URL in case it's a pretty URL generated by Google's search engine. The 'store' route takes the info in the 'fragment' property and makes the AJAX call to get the data, display it, and change the URL locally. In my application, I don't load a different page for every such call; I only change the part of the page where this data is relevant and also change the URL locally.

- Notice the pushState:true which instructs Durandal to use push state URLs.
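As an illustration of what the 'store' view model might do with the fragment it receives, here is a small sketch. `normalizeFragment` mirrors the replace() call in mapUnknownRoutes; `parseStoreFragment` and its return shape are hypothetical and only show one way to recover the three parts.

```javascript
// Mirrors the replace() in mapUnknownRoutes: drop the "!/" left over from a pretty URL.
function normalizeFragment(fragment) {
  return fragment.replace('!/', '');
}

// Hypothetical: split the cleaned fragment into the parts needed for the AJAX call.
function parseStoreFragment(fragment) {
  const [category, subCategory, product] = normalizeFragment(fragment).split('/');
  return { category, subCategory, product };
}

console.log(parseStoreFragment('!/computers/laptops/product111'));
console.log(parseStoreFragment('computers/laptops/product111'));
// both calls yield category "computers", subCategory "laptops", product "product111"
```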

This is all we need on the client side. It can also be implemented with hashed URLs (in Durandal you simply remove the pushState:true for that). The more complex part (at least for me...) was the server part:

Server Side

I'm using MVC 4.5 on the server side with WebAPI controllers. The server actually needs to handle 3 types of URLs: the ones generated by Google, both pretty and ugly, and also a 'simple' URL with the same format as the one that appears in the client's browser. Let's look at how to do this:

Pretty URLs and 'simple' ones are first interpreted by the server as if trying to reference a non-existent controller. The server sees something like http://www.xyz.com/category/subCategory/product111 and looks for a controller named 'category'. So in web.config I add the following line to redirect these to a specific error handling controller:

    <customErrors mode="On" defaultRedirect="Error">
      <error statusCode="404" redirect="Error" />
    </customErrors>

Now, this transforms the URL to something like: http://www.xyz.com/Error?aspxerrorpath=/category/subCategory/product111. I want the URL to be sent to the client that will load the data via AJAX, so the trick here is to call the default 'index' controller as if not referencing any controller; I do that by adding a hash to the URL before all the 'category' and 'subCategory' parameters; the hashed URL does not require any special controller except the default 'index' controller and the data is sent to the client which then removes the hash and uses the info after the hash to load the data via AJAX. Here is the error handler controller code:

    using System;
    using System.Collections.Generic;
    using System.Linq;
    using System.Net;
    using System.Net.Http;
    using System.Web.Http;
    using System.Web.Routing;

    namespace eShop.Controllers
    {
        public class ErrorController : ApiController
        {
            [HttpGet, HttpPost, HttpPut, HttpDelete, HttpHead, HttpOptions, AcceptVerbs("PATCH"), AllowAnonymous]
            public HttpResponseMessage Handle404()
            {
                // Split "http://www.xyz.com/Error?aspxerrorpath=/category/..." at the '?'
                string[] parts = Request.RequestUri.OriginalString.Split(new[] { '?' }, StringSplitOptions.RemoveEmptyEntries);
                // Keep only the original path that caused the 404
                string parameters = parts[1].Replace("aspxerrorpath=", "");
                // Redirect to the site root with the original path behind a '#'
                var response = Request.CreateResponse(HttpStatusCode.Redirect);
                response.Headers.Location = new Uri(parts[0].Replace("Error", "") + string.Format("#{0}", parameters));
                return response;
            }
        }
    }
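To make the redirect concrete, here is the same string transformation as a JavaScript sketch. It is only an illustration of what the C# handler computes; `toHashedUrl` is a hypothetical name, and the guard for a URL without a query string is an addition that the controller above does not have.

```javascript
// Illustrative port of the Handle404 string logic (hypothetical function name).
function toHashedUrl(errorUrl) {
  const parts = errorUrl.split('?').filter(Boolean);
  const root = parts[0].replace('Error', '');
  if (parts.length < 2) {
    return root; // added guard: no aspxerrorpath to carry over
  }
  const parameters = parts[1].replace('aspxerrorpath=', '');
  return root + '#' + parameters;
}

console.log(toHashedUrl('http://www.xyz.com/Error?aspxerrorpath=/category/subCategory/product111'));
// prints "http://www.xyz.com/#/category/subCategory/product111"
```

Note that the JavaScript string replace() only replaces the first occurrence, whereas the C# Replace replaces all of them; for URLs of the shape shown here the result is the same.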

But what about the ugly URLs? These are created by Google's bot and should return plain HTML that contains all the data the user sees in the browser. For this I use PhantomJS. PhantomJS is a headless browser doing what the browser does on the client side, but on the server side. In other words, PhantomJS knows (among other things) how to get a web page via a URL, parse it including running all the JavaScript code in it (as well as getting data via AJAX calls), and give you back the HTML that reflects the DOM. If you're using MS Visual Studio Express you may want to install PhantomJS via this link.

But first, when an ugly URL is sent to the server, we must catch it. For this, I added the following file to the 'App_Start' folder:

    using System;
    using System.Collections.Generic;
    using System.Diagnostics;
    using System.IO;
    using System.Linq;
    using System.Reflection;
    using System.Web;
    using System.Web.Mvc;
    using System.Web.Routing;

    namespace eShop.App_Start
    {
        public class AjaxCrawlableAttribute : ActionFilterAttribute
        {
            private const string Fragment = "_escaped_fragment_";

            public override void OnActionExecuting(ActionExecutingContext filterContext)
            {
                var request = filterContext.RequestContext.HttpContext.Request;

                // Only ugly URLs carry the _escaped_fragment_ query parameter
                if (request.QueryString[Fragment] != null)
                {
                    // Turn the ugly URL back into a hashed URL that the client can load
                    var url = request.Url.ToString().Replace("?" + Fragment + "=", "#");

                    filterContext.Result = new RedirectToRouteResult(
                        new RouteValueDictionary { { "controller", "HtmlSnapshot" }, { "action", "returnHTML" }, { "url", url } });
                }
            }
        }
    }
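The URL forms involved can be sketched as two small string functions. `prettyToUgly` shows what the crawler does to a #! URL under Google's AJAX crawling scheme, and `uglyToHashed` mirrors the Replace call in the filter above. Both names are hypothetical, and the character escaping the scheme applies to the fragment is deliberately left out of this sketch.

```javascript
const FRAGMENT_KEY = '_escaped_fragment_';

// What the crawler requests for a pretty (#!) URL; fragment escaping is omitted here.
function prettyToUgly(url) {
  const i = url.indexOf('#!');
  if (i === -1) return url;
  const base = url.slice(0, i);
  const separator = base.includes('?') ? '&' : '?';
  return base + separator + FRAGMENT_KEY + '=' + url.slice(i + 2);
}

// Mirrors the server-side filter: swap the query marker for a plain '#'.
function uglyToHashed(url) {
  return url.replace('?' + FRAGMENT_KEY + '=', '#');
}

const ugly = prettyToUgly('http://www.xyz.com/#!/category/subCategory/product111');
console.log(ugly);
// prints "http://www.xyz.com/?_escaped_fragment_=/category/subCategory/product111"
console.log(uglyToHashed(ugly));
// prints "http://www.xyz.com/#/category/subCategory/product111"
```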

This is called from 'FilterConfig.cs', also in 'App_Start':

    using System.Web.Mvc;
    using eShop.App_Start;

    namespace eShop
    {
        public class FilterConfig
        {
            public static void RegisterGlobalFilters(GlobalFilterCollection filters)
            {
                filters.Add(new HandleErrorAttribute());
                filters.Add(new AjaxCrawlableAttribute());
            }
        }
    }

As you can see, 'AjaxCrawlableAttribute' routes ugly URLs to a controller named 'HtmlSnapshot', and here is this controller:

    using System;
    using System.Collections.Generic;
    using System.Diagnostics;
    using System.IO;
    using System.Linq;
    using System.Web;
    using System.Web.Mvc;

    namespace eShop.Controllers
    {
        public class HtmlSnapshotController : Controller
        {
            public ActionResult returnHTML(string url)
            {
                string appRoot = Path.GetDirectoryName(AppDomain.CurrentDomain.BaseDirectory);

                // Run: phantomjs.exe seo\createSnapshot.js <url>
                var startInfo = new ProcessStartInfo
                {
                    Arguments = String.Format("{0} {1}", Path.Combine(appRoot, "seo\\createSnapshot.js"), url),
                    FileName = Path.Combine(appRoot, "bin\\phantomjs.exe"),
                    UseShellExecute = false,
                    CreateNoWindow = true,
                    RedirectStandardOutput = true,
                    RedirectStandardError = true,
                    RedirectStandardInput = true,
                    StandardOutputEncoding = System.Text.Encoding.UTF8
                };

                // Dispose the process handle once the snapshot has been read
                using (var p = new Process { StartInfo = startInfo })
                {
                    p.Start();
                    // The script prints the rendered HTML to standard output
                    string output = p.StandardOutput.ReadToEnd();
                    p.WaitForExit();
                    ViewData["result"] = output;
                }

                return View();
            }
        }
    }

The associated view is very simple, just one line of code:

    @Html.Raw( ViewBag.result )

As you can see in the controller, phantom loads a javascript file named createSnapshot.js under a folder I created called seo. Here is this javascript file:

    var page = require('webpage').create();
    var system = require('system');

    var lastReceived = new Date().getTime();
    var requestCount = 0;
    var responseCount = 0;
    var requestIds = [];
    var startTime = new Date().getTime();

    // Count each response once and remember when the last one arrived
    page.onResourceReceived = function (response) {
        if (requestIds.indexOf(response.id) !== -1) {
            lastReceived = new Date().getTime();
            responseCount++;
            requestIds[requestIds.indexOf(response.id)] = null;
        }
    };

    // Count each outgoing request once
    page.onResourceRequested = function (request) {
        if (requestIds.indexOf(request.id) === -1) {
            requestIds.push(request.id);
            requestCount++;
        }
    };

    // True once the page has added the 'compositionComplete' marker element
    function checkLoaded() {
        return page.evaluate(function () {
            return document.all["compositionComplete"];
        }) != null;
    }

    // Open the page
    page.open(system.args[1], function () { });

    var checkComplete = function () {
        // We don't allow it to take longer than 10 seconds but
        // don't return until all requests are finished
        if ((new Date().getTime() - lastReceived > 300 && requestCount === responseCount) || new Date().getTime() - startTime > 10000 || checkLoaded()) {
            clearInterval(checkCompleteInterval);
            var result = page.content;
            //result = result.substring(0, 10000);
            console.log(result);
            //console.log(results);
            phantom.exit();
        }
    };

    // Let us check to see if the page is finished rendering
    var checkCompleteInterval = setInterval(checkComplete, 300);
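The exit condition in the script above can be isolated into a pure function, which makes the three ways of finishing easier to see and to test outside PhantomJS. This is only a sketch: `isSnapshotReady` and the shape of its `state` argument are hypothetical, while the 300 ms and 10000 ms thresholds are the ones used in the script.

```javascript
// Hypothetical restatement of the checkComplete condition.
function isSnapshotReady(state, now) {
  // 1. Network idle: every request answered and nothing received for 300 ms.
  const idle = now - state.lastReceived > 300 &&
    state.requestCount === state.responseCount;
  // 2. Hard timeout: give up after 10 seconds.
  const timedOut = now - state.startTime > 10000;
  // 3. The page signalled completion with its marker element.
  return idle || timedOut || state.markerPresent;
}

const state = { startTime: 0, lastReceived: 1000, requestCount: 5, responseCount: 4, markerPresent: false };
console.log(isSnapshotReady(state, 2000));  // false: one request is still pending
console.log(isSnapshotReady(state, 10001)); // true: the timeout has been reached
```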

I first want to thank Thomas Davis for the page where I got the basic code from :-).

You will notice something odd here: PhantomJS keeps polling the page until the checkLoaded() function returns true (or one of the fallback conditions is met). Why is that? My specific SPA makes several AJAX calls to get all the data and place it in the DOM on my page, and PhantomJS cannot know when all the calls have completed before giving me back the HTML reflection of the DOM. What I did here is add a <span id='compositionComplete'></span> after the final AJAX call, so that if this tag exists I know the DOM is complete. I do this in response to Durandal's compositionComplete event, see here for more. If this does not happen within 10 seconds I give up (it should take only a second or so at most). The HTML returned contains all the links that the user sees in the browser. The scripts will not work properly because the <script> tags that exist in the HTML snapshot do not reference the right URL. This can be changed too in the PhantomJS JavaScript file, but I don't think it is necessary, because the HTML snapshot is only used by Google to get the a links and not to run JavaScript. These links do reference a pretty URL, and in fact, if you try to view the HTML snapshot in a browser, you will get JavaScript errors but all the links will work properly and direct you to the server once again with a pretty URL, this time getting the fully working page.
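A minimal sketch of the client-side half of this handshake, assuming the marker is added from a view model's compositionComplete callback. `markCompositionComplete` is a hypothetical helper; it takes the document as a parameter so it can be exercised without a browser.

```javascript
// Hypothetical helper: add the marker element once, so the snapshot script can detect it.
function markCompositionComplete(doc) {
  if (doc.getElementById('compositionComplete')) {
    return false; // already marked
  }
  const marker = doc.createElement('span');
  marker.id = 'compositionComplete';
  doc.body.appendChild(marker);
  return true;
}

// Possible use inside a Durandal view model (assumed wiring, not from the original post):
//   compositionComplete: function () { markCompositionComplete(document); }
```

If the final AJAX call can finish after composition, the helper would need to be called from that call's completion handler instead.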

This is it. Now the server knows how to handle both pretty and ugly URLs, with push-state enabled on both server and client. All ugly URLs are treated the same way using PhantomJS, so there's no need to create a separate controller for each type of call.

One thing you might prefer to change is not to make a general 'category/subCategory/product' call but to add a 'store' so that the link will look something like: http://www.xyz.com/store/category/subCategory/product111. This will avoid the problem in my solution that all invalid URLs are treated as if they are actually calls to the 'index' controller, and I suppose that these can be handled then within the 'store' controller without the addition to the web.config I showed above.

Google is now able to render SPA pages: Deprecating our AJAX crawling scheme

<a href=...> links in the DOM rather than relying on click events for all navigation. 3. Ensure that the correct page renders when visiting a URL.

Here is a link to a screencast recording from my Ember.js Training class I hosted in London on August 14th. It outlines a strategy for both your client-side application and your server-side application, and gives a live demonstration of how implementing these features will provide your JavaScript single-page app with graceful degradation even for users with JavaScript turned off.

It uses PhantomJS to aid in crawling your website.

In short, the steps required are:

- Have a hosted version of the web application you want to crawl; this site needs to have ALL of the data you have in production

- Write a JavaScript application (PhantomJS Script) to load your website

- Add index.html ( or “/“ ) to the list of URLs to crawl

- Pop the first URL added to the crawl-list

- Load page and render its DOM

- Find any links on the loaded page that link to your own site (URL filtering)

- Add each such link to a list of "crawlable" URLs, if it's not already crawled

- Store the rendered DOM to a file on the file system, but strip away ALL script-tags first

- At the end, create a Sitemap.xml file with the crawled URLs
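The steps above can be sketched as a small breadth-first crawl. This is an illustration under stated assumptions, not the code from the screencast: `loadPage(url)` is a hypothetical stand-in for PhantomJS rendering a URL and returning its DOM as an HTML string (or null on failure), links are found with a simple regular expression rather than a DOM query, and snapshots are kept in a Map instead of being written to files.

```javascript
// Remove every script element from a rendered page.
function stripScripts(html) {
  return html.replace(/<script\b[^>]*>[\s\S]*?<\/script>/gi, '');
}

// Collect absolute URLs of links that stay on the same origin as the page.
function extractOwnLinks(html, pageUrl) {
  const origin = new URL(pageUrl).origin;
  const links = [];
  const pattern = /<a\b[^>]*\bhref="([^"]+)"/gi;
  let match;
  while ((match = pattern.exec(html)) !== null) {
    let target;
    try {
      target = new URL(match[1], pageUrl);
    } catch (e) {
      continue; // skip hrefs that cannot be parsed
    }
    if (target.origin === origin) links.push(target.href);
  }
  return links;
}

// Crawl from startUrl; returns a Map of URL -> script-free HTML snapshot.
function crawl(startUrl, loadPage) {
  const queue = [startUrl];
  const seen = new Set(queue);
  const snapshots = new Map();
  while (queue.length > 0) {
    const url = queue.shift(); // pop the first URL added to the crawl list
    const html = loadPage(url);
    if (html == null) continue;
    for (const link of extractOwnLinks(html, url)) {
      if (!seen.has(link)) {
        seen.add(link);
        queue.push(link);
      }
    }
    snapshots.set(url, stripScripts(html));
  }
  return snapshots;
}

// Build a sitemap document from the crawled URLs.
function buildSitemap(urls) {
  const escapeXml = function (s) {
    return s.replace(/&/g, '&amp;').replace(/</g, '&lt;').replace(/>/g, '&gt;');
  };
  const entries = urls.map(function (u) {
    return '  <url><loc>' + escapeXml(u) + '</loc></url>';
  }).join('\n');
  return '<?xml version="1.0" encoding="UTF-8"?>\n' +
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n' +
    entries + '\n</urlset>';
}
```

A call such as `buildSitemap([...crawl('http://example.test/', loadPage).keys()])` would then produce the sitemap text for every page that was reached.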

Once this step is done, it's up to your backend to serve the static version of your HTML as part of the noscript tag on that page. This will allow Google and other search engines to crawl every single page on your website, even though your app originally is a single-page app.

Link to the screencast with the full details:

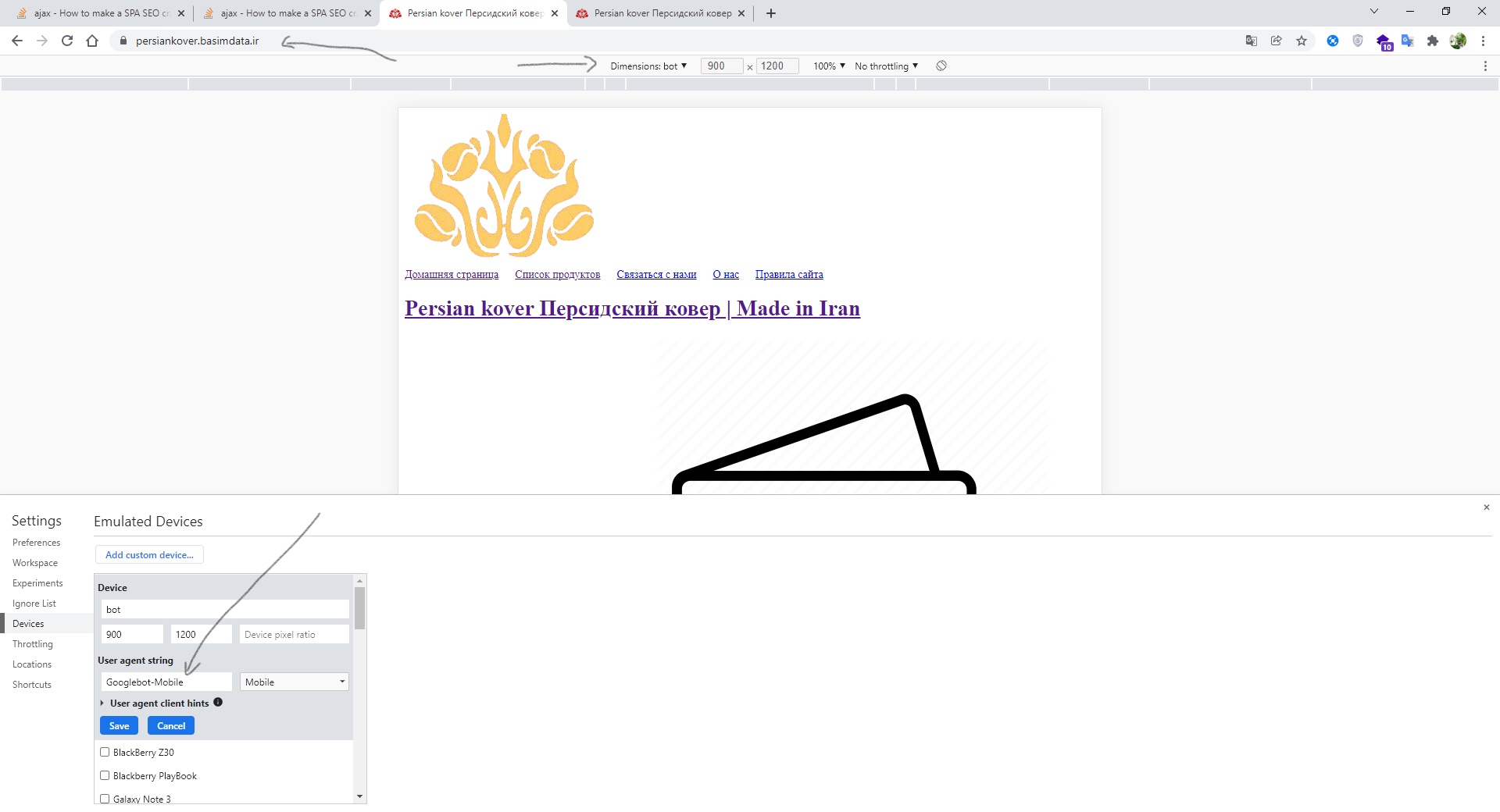

I used Rendertron to solve the SEO problem with ASP.NET Core on the server and Angular on the client side. It is a middleware that differentiates requests based on whether they come from a crawler or a regular client, so when the request comes from a crawler the response is rendered quickly on the fly.

In Startup.cs

Configure rendertron services:

    public void ConfigureServices(IServiceCollection services)
    {
        // Add rendertron services
        services.AddRendertron(options =>
        {
            // rendertron service url
            options.RendertronUrl = "http://rendertron:3000/render/";

            // proxy url for application
            options.AppProxyUrl = "http://webapplication";

            // prerender for firefox
            //options.UserAgents.Add("firefox");

            // inject shady dom
            options.InjectShadyDom = true;

            // use http compression
            options.AcceptCompression = true;
        });
    }
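The decision such a middleware makes can be sketched in a few lines. This is not the code of the ASP.NET Core package: the bot pattern is a small illustrative list, `isCrawler` and `renderTarget` are hypothetical names, and joining the render endpoint, the proxy URL and the request path by plain concatenation is an assumption about how the two option values above are combined.

```javascript
// Illustrative (incomplete) list of crawler user-agent fragments.
const BOT_PATTERN = /googlebot|bingbot|yandex|baiduspider|duckduckbot/i;

// True when the User-Agent header looks like a known crawler.
function isCrawler(userAgent) {
  return BOT_PATTERN.test(userAgent || '');
}

// Assumed composition: render endpoint + address of the app as seen by the renderer + path.
function renderTarget(rendertronUrl, appProxyUrl, path) {
  return rendertronUrl + appProxyUrl + path;
}

const userAgent = 'Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)';
if (isCrawler(userAgent)) {
  console.log(renderTarget('http://rendertron:3000/render/', 'http://webapplication', '/products/1'));
  // prints "http://rendertron:3000/render/http://webapplication/products/1"
}
```

Regular browsers fall through to the normal SPA response, so only crawler traffic pays the cost of server-side rendering.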

It is true that this method is a little different and requires a little code to produce content specific to the crawler, but it is useful for small projects such as a CMS or a portal site.

This method can be used with most programming languages and server-side frameworks, such as ASP.NET Core, Python (Django), Express.js, and Firebase.

To view the source and more details: https://github.com/GoogleChrome/rendertron

Year 2021 Update

A SPA should use the History API in order to be SEO friendly.

Transitions between SPA pages are typically effected via a history.pushState(path) call. What happens next is framework dependent. In case React is used, a component called React Router monitors history and displays/renders the React component configured for the path used.

Achieving SEO for a simple SPA is straightforward.
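As a framework-free illustration of this flow, here is a minimal sketch of a router built on the History API. `createRouter`, the route table and the `render` callback are hypothetical; in a browser `historyLike` would be `window.history`, whose pushState method takes a state object, an unused title argument and the new URL.

```javascript
// Hypothetical mini-router: maps a path to a view and records the transition.
function createRouter(historyLike, routes, render) {
  function show(path) {
    const view = routes[path] || routes['*']; // fall back to a catch-all view
    render(view, path);
  }
  return {
    navigate: function (path) {
      historyLike.pushState({ path: path }, '', path); // change the URL without a page load
      show(path);
    },
    show: show
  };
}

// Possible browser wiring (assumed, shown as comments so the sketch runs anywhere):
//   const router = createRouter(window.history, routes, renderView);
//   window.addEventListener('popstate', function () { router.show(location.pathname); });
```

Because every view has a real path, a crawler that renders JavaScript can reach each page through ordinary a href links.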

Achieving SEO for a more advanced SPA (that uses selective prerendering for better performance) is more involved as shown in the article. I'm the author.

You can use or create your own service to prerender your SPA with the service called Prerender. You can check it out on its website, prerender.io, and in its GitHub project (it uses PhantomJS and renders your website for you).

It's very easy to start with. You only have to redirect crawler requests to the service and they will receive the rendered HTML.
