In Python, how big can a list get? I need a list of about 12000 elements. Will I still be able to run list methods such as sorting, etc?

According to the source code, the maximum size of a list is PY_SSIZE_T_MAX/sizeof(PyObject*).

PY_SSIZE_T_MAX is defined in pyport.h to be ((size_t) -1)>>1

On a regular 32-bit system, this is (4294967295 >> 1) / 4, or 536870911 with integer division.

Therefore the maximum size of a Python list on a 32-bit system is 536,870,911 elements.

As long as the number of elements you have is equal to or below this, all list functions should operate correctly.
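The arithmetic above can be reproduced from Python itself. This is only a minimal sketch, assuming CPython: `max_list_length` is a hypothetical helper name, `sys.maxsize` stands in for PY_SSIZE_T_MAX, and `struct.calcsize("P")` stands in for sizeof(PyObject*). With integer division, the 32-bit case comes out as 536,870,911.

```python
import struct
import sys


def max_list_length(ssize_t_max=sys.maxsize, pointer_size=struct.calcsize("P")):
    """Theoretical element limit: PY_SSIZE_T_MAX / sizeof(PyObject*)."""
    return ssize_t_max // pointer_size


# Typical 32-bit build: PY_SSIZE_T_MAX = 2**31 - 1, 4-byte pointers.
print(max_list_length(2**31 - 1, 4))  # 536870911

# Typical 64-bit build: PY_SSIZE_T_MAX = 2**63 - 1, 8-byte pointers.
print(max_list_length(2**63 - 1, 8))  # 1152921504606846975

# Whatever interpreter is running this script.
print(max_list_length())
```

In practice you run out of RAM long before reaching either figure, so a list of 12000 elements is nowhere near the limit.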

PyObject * is a so-called pointer (you can recognize one by the asterisk at the end). On a 32-bit system, pointers are 4 bytes long and store the memory address of the allocated object. They are "only" 4 bytes long because 4 bytes are enough to address every byte of memory on such a system; on a 64-bit system they are 8 bytes long. – Inunction

PY_SSIZE_T_MAX can vary greatly. – Avellaneda

As the Python documentation says:

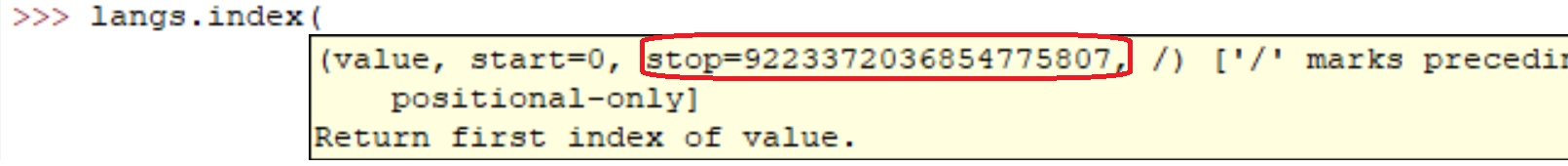

sys.maxsize

The largest positive integer supported by the platform’s Py_ssize_t type, and thus the maximum size lists, strings, dicts, and many other containers can have.

On my computer (Linux x86_64):

>>> import sys

>>> print(sys.maxsize)

9223372036854775807
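To see how that value relates to the platform, here is a small sketch (assuming CPython 3.3 or later, where the `struct` format code "n" maps to the C ssize_t type; the function names are made up for illustration). It checks that sys.maxsize is the largest signed value of that width, and shows that asking for a list longer than sys.maxsize fails before any memory is allocated.

```python
import struct
import sys


def describe_maxsize():
    """Return (bit width of ssize_t, whether sys.maxsize == 2**(bits-1) - 1)."""
    bits = struct.calcsize("n") * 8
    return bits, sys.maxsize == 2 ** (bits - 1) - 1


def try_oversized_list():
    """Try to build a list longer than sys.maxsize; return the error name."""
    try:
        [None] * (sys.maxsize + 1)
    except (OverflowError, MemoryError) as exc:
        return type(exc).__name__
    return None


print(describe_maxsize())
print(try_oversized_list())
```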

sys.maxsize is the answer to the question. Different architectures support different maxima. – Jacquiline

Sure, it is OK. Actually, you can easily see for yourself:

l = list(range(12000))

l = sorted(l, reverse=True)

Running those lines on my machine took:

real 0m0.036s

user 0m0.024s

sys 0m0.004s

But sure, as everyone else said, the larger the array, the slower the operations will be.
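If you would rather measure from inside Python than with the shell's time command, something along these lines should work. It is only a sketch: `time_reverse_sort` is a hypothetical helper, and the elapsed time will differ from machine to machine.

```python
import time


def time_reverse_sort(n=12000):
    """Build a list of n integers, sort it in reverse, and time the sort."""
    data = list(range(n))
    start = time.perf_counter()
    result = sorted(data, reverse=True)
    elapsed = time.perf_counter() - start
    return result, elapsed


result, elapsed = time_reverse_sort()
print("sorted", len(result), "elements in", elapsed, "seconds")
```

This times only the sort itself, so it leaves out interpreter start-up, which makes up part of the real/user/sys figures above.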

In casual code I've created lists with millions of elements. I believe that Python's implementation of lists is only bound by the amount of memory on your system.

In addition, the list methods / functions should continue to work regardless of the size of the list.

If you care about performance, it might be worthwhile to look into a library such as NumPy.

12000 elements is nothing in Python, and in practice the number of elements can keep growing for as long as the Python interpreter has memory available on your system.

Performance characteristics for lists are described on Effbot.

Python lists are actually implemented as a vector for fast random access, so the container will basically hold as many items as there is space for in memory. (You need space for the pointers contained in the list as well as space in memory for the object(s) being pointed to.)
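The pointer storage can be observed with sys.getsizeof, which reports the size of the list object itself and not of the objects it points to. A rough sketch, assuming CPython (`pointer_storage_bytes` is a hypothetical helper name):

```python
import struct
import sys


def pointer_storage_bytes(n):
    """Bytes a list with n slots uses beyond what an empty list uses."""
    return sys.getsizeof([None] * n) - sys.getsizeof([])


n = 12000
print(pointer_storage_bytes(n), "bytes of pointer storage for", n, "slots")
print(struct.calcsize("P"), "bytes per pointer on this build")
```

On a 64-bit build this is on the order of 96 KB for 12000 slots; the objects being pointed to take additional memory on top of that.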

Appending is O(1) (amortized constant complexity); however, inserting into or deleting from the middle of the sequence requires an O(n) (linear complexity) reordering, which gets slower as the number of elements in your list grows.
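The amortized O(1) append comes from the list over-allocating its pointer array, so that most appends do not need to reallocate. One way to observe this, assuming CPython (the exact growth pattern is an implementation detail, and `count_resizes` is a hypothetical helper), is to count how often the reported size of the list changes while appending:

```python
import sys


def count_resizes(n):
    """Append n items and count how many times the list's allocation grows."""
    items = []
    resizes = 0
    last_size = sys.getsizeof(items)
    for i in range(n):
        items.append(i)
        size = sys.getsizeof(items)
        if size != last_size:
            resizes += 1
            last_size = size
    return resizes


print(count_resizes(12000), "reallocations for 12000 appends")
```

The count should be far smaller than the number of appends, which is why the cost per append averages out to a constant.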

Your sorting question is more nuanced, since the comparison operation can take an unbounded amount of time. If you're performing really slow comparisons, it will take a long time, though it's no fault of Python's list data type.
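To make that concrete: the total sort time is roughly the number of comparisons multiplied by the cost of one comparison. The sketch below counts the comparisons with a hypothetical wrapper class (`Counted` and `count_sort_comparisons` are names invented for this example, not part of any library):

```python
import random


class Counted:
    """Wraps a value and counts how many times instances are compared."""

    comparisons = 0

    def __init__(self, value):
        self.value = value

    def __lt__(self, other):
        # sorted() only needs "<", so counting here catches every comparison.
        Counted.comparisons += 1
        return self.value < other.value


def count_sort_comparisons(n, seed=0):
    """Sort n random wrapped values; return (sorted values, comparison count)."""
    rng = random.Random(seed)
    items = [Counted(rng.random()) for _ in range(n)]
    Counted.comparisons = 0
    ordered = sorted(items)
    return [item.value for item in ordered], Counted.comparisons


values, comparisons = count_sort_comparisons(12000)
print(comparisons, "comparisons to sort", len(values), "items")
```

If each call to `__lt__` is slow, that slowness is multiplied by this count, regardless of how the list itself is implemented.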

Reversal just takes the amount of time required to swap all the pointers in the list (necessarily O(n), i.e. linear complexity, since you touch each pointer once).

It varies between systems (in practice it depends on RAM). The easiest way to find out is

>>> import six

>>> six.MAXSIZE

9223372036854775807

This gives the maximum size of a list, and of a dict too, as per the documentation.

sys, not six. – Ronnieronny

I'd say you're only limited by the total amount of RAM available. Obviously, the larger the array, the longer operations on it will take.

I got this from here on a 64-bit system: Python 3.7.0b5 (v3.7.0b5:abb8802389, May 31 2018, 01:54:01) [MSC v.1913 64 bit (AMD64)] on win32

There is no fixed limit on the number of elements in a list. The main cause of your error is running out of RAM, so adding more memory should help.


sizeof(PyObject*) == 4?? What does this represent? – Condescend