I have the following class:

```scala
import scala.util.{Success, Failure, Try}

class MyClass {

  def openFile(fileName: String): Try[String] = {
    Failure(new Exception("some message"))
  }

  def main(args: Array[String]): Unit = {
    openFile(args.head)
  }

}
```

It has the following unit test:

```scala
class MyClassTest extends org.scalatest.FunSuite {

  test("pass inexistent file name") {
    val myClass = new MyClass()
    assert(myClass.openFile("./noFile").failed.get.getMessage == "Invalid file name")
  }

}
```
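A minimal sketch, without ScalaTest, of what that assertion evaluates. `Try.failed` inverts a `Try`: a `Failure(e)` becomes `Success(e)`, so `.get` returns the exception instead of throwing it. The object name `TryFailedDemo` is hypothetical; `openFile` is copied from the stub above.

```scala
import scala.util.{Failure, Success, Try}

object TryFailedDemo {
  // Same stub as MyClass.openFile above: it always returns a Failure.
  def openFile(fileName: String): Try[String] =
    Failure(new Exception("some message"))

  def main(args: Array[String]): Unit = {
    // `failed` turns Failure(e) into Success(e), so `.get` yields e.
    val message = openFile("./noFile").failed.get.getMessage
    println(message)                        // some message
    println(message == "Invalid file name") // false

    // On a Success, `failed` yields a Failure(UnsupportedOperationException),
    // so calling `.get` on that result would throw.
    val onSuccess: Try[Throwable] = Success("contents").failed
    println(onSuccess.isFailure)            // true
  }
}
```

With the stub as written, the exception message is `"some message"`, so the comparison against `"Invalid file name"` evaluates to `false` independently of the runner error below.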

When I run `sbt test`, I get the following error:

```
java.lang.NoSuchMethodError: scala.Predef$.refArrayOps([Ljava/lang/Object;)Lscala/collection/mutable/ArrayOps;
    at org.scalatest.tools.FriendlyParamsTranslator$.translateArguments(FriendlyParamsTranslator.scala:174)
    at org.scalatest.tools.Framework.runner(Framework.scala:918)
    at sbt.Defaults$$anonfun$createTestRunners$1.apply(Defaults.scala:533)
    at sbt.Defaults$$anonfun$createTestRunners$1.apply(Defaults.scala:527)
    at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:244)
    at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:244)
    at scala.collection.immutable.Map$Map1.foreach(Map.scala:109)
    at scala.collection.TraversableLike$class.map(TraversableLike.scala:244)
    at scala.collection.AbstractTraversable.map(Traversable.scala:105)
    at sbt.Defaults$.createTestRunners(Defaults.scala:527)
    at sbt.Defaults$.allTestGroupsTask(Defaults.scala:543)
    at sbt.Defaults$$anonfun$testTasks$4.apply(Defaults.scala:410)
    at sbt.Defaults$$anonfun$testTasks$4.apply(Defaults.scala:410)
    at scala.Function8$$anonfun$tupled$1.apply(Function8.scala:35)
    at scala.Function8$$anonfun$tupled$1.apply(Function8.scala:34)
    at scala.Function1$$anonfun$compose$1.apply(Function1.scala:47)
    at sbt.$tilde$greater$$anonfun$$u2219$1.apply(TypeFunctions.scala:40)
    at sbt.std.Transform$$anon$4.work(System.scala:63)
    at sbt.Execute$$anonfun$submit$1$$anonfun$apply$1.apply(Execute.scala:226)
    at sbt.Execute$$anonfun$submit$1$$anonfun$apply$1.apply(Execute.scala:226)
    at sbt.ErrorHandling$.wideConvert(ErrorHandling.scala:17)
    at sbt.Execute.work(Execute.scala:235)
    at sbt.Execute$$anonfun$submit$1.apply(Execute.scala:226)
    at sbt.Execute$$anonfun$submit$1.apply(Execute.scala:226)
    at sbt.ConcurrentRestrictions$$anon$4$$anonfun$1.apply(ConcurrentRestrictions.scala:159)
    at sbt.CompletionService$$anon$2.call(CompletionService.scala:28)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
    at java.util.concurrent.FutureTask.run(FutureTask.java:266)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)
    at java.lang.Thread.run(Thread.java:745)
[error] (test:executeTests) java.lang.NoSuchMethodError: scala.Predef$.refArrayOps([Ljava/lang/Object;)Lscala/collection/mutable/ArrayOps;
```

Build definitions:

version := "1.0"

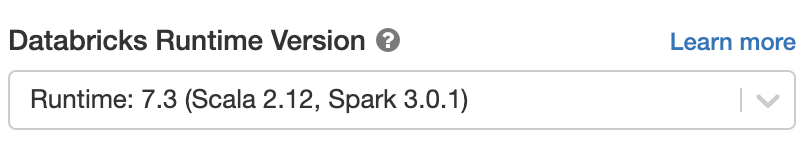

scalaVersion := "2.12.0"

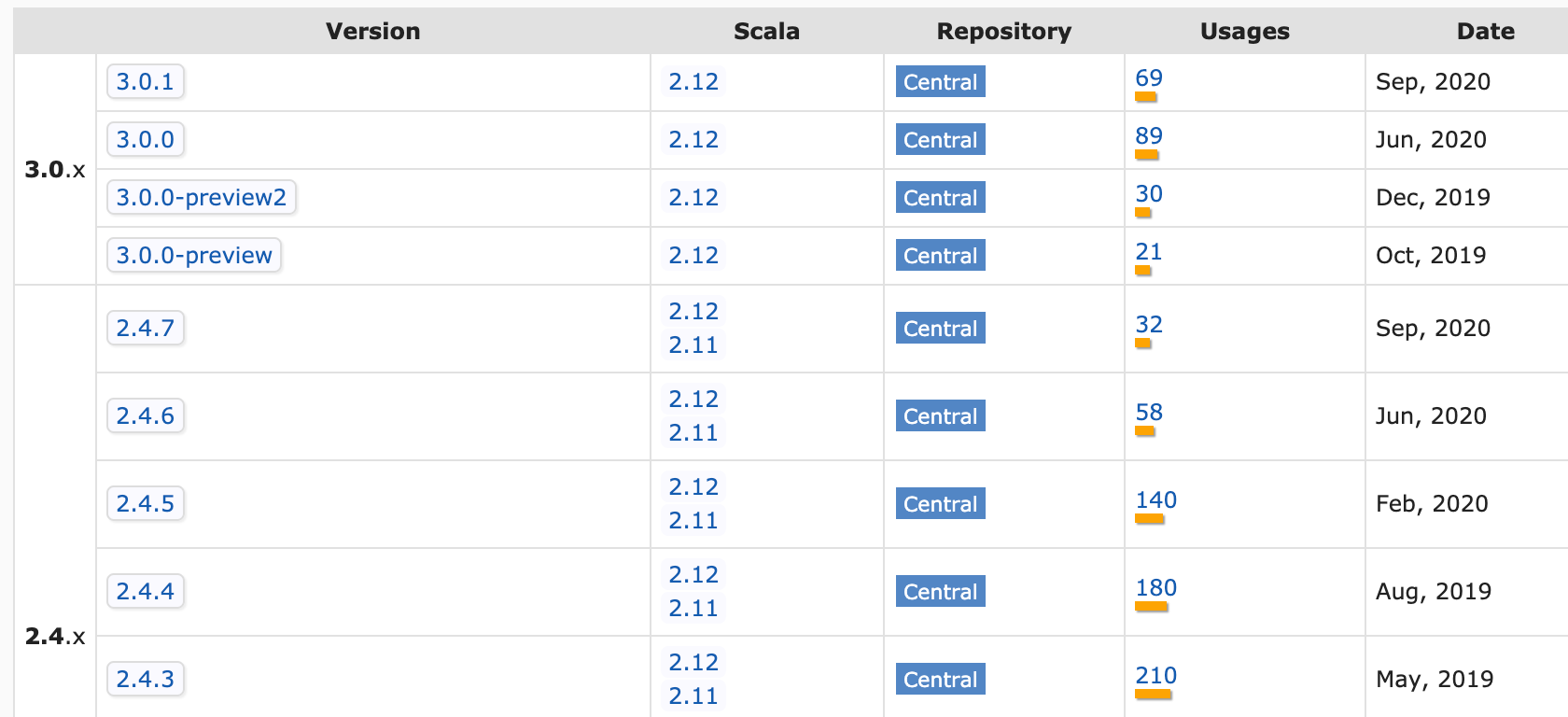

// https://mvnrepository.com/artifact/org.scalatest/scalatest_2.11

libraryDependencies += "org.scalatest" % "scalatest_2.11" % "3.0.0"

I can't figure out what causes this. My class and unit test seem simple enough. Any ideas?

`%%` operator, as indicated in the accepted answer. See my answer to learn more about the sbt `%%` operator and cross-compilation, topics every Scala programmer must understand to avoid headaches. – Phonotypy
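The `%%` operator mentioned in the comment appends the Scala binary version to the artifact name, so with `scalaVersion := "2.12.0"` a dependency written as `"org.scalatest" %% "scalatest" % <version>` resolves to `scalatest_2.12`. The hard-coded `scalatest_2.11`, by contrast, pulls in a build compiled against the Scala 2.11 standard library, which is not binary compatible with 2.12 and can fail at runtime with errors like the `NoSuchMethodError` above. Below is a simplified sketch of that naming rule, assuming final 2.x releases only; `binaryVersion` and `crossName` are hypothetical helpers, not sbt API.

```scala
object CrossVersionDemo {
  // Hypothetical, simplified model of how `%%` names artifacts for final
  // Scala 2.x releases: the binary version is just "major.minor".
  // sbt's real rule has additional cases (e.g. pre-release versions).
  def binaryVersion(scalaVersion: String): String =
    scalaVersion.split('.').take(2).mkString(".")

  // `%%` appends "_<binaryVersion>" to the artifact name; `%` leaves it as written.
  def crossName(artifact: String, scalaVersion: String): String =
    s"${artifact}_${binaryVersion(scalaVersion)}"

  def main(args: Array[String]): Unit = {
    val scalaVersion = "2.12.0" // value from the build definition above
    println(crossName("scalatest", scalaVersion)) // scalatest_2.12
  }
}
```

In the build definition itself this means dropping the `_2.11` suffix and writing `%%`, with a ScalaTest version that is actually published for Scala 2.12.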