I need to create a function which would be the inverse of the np.gradient function.

The inputs would be the Vx, Vy arrays (velocity component vectors), and the output would be an array of antiderivatives (arrival time) at the data points x, y.

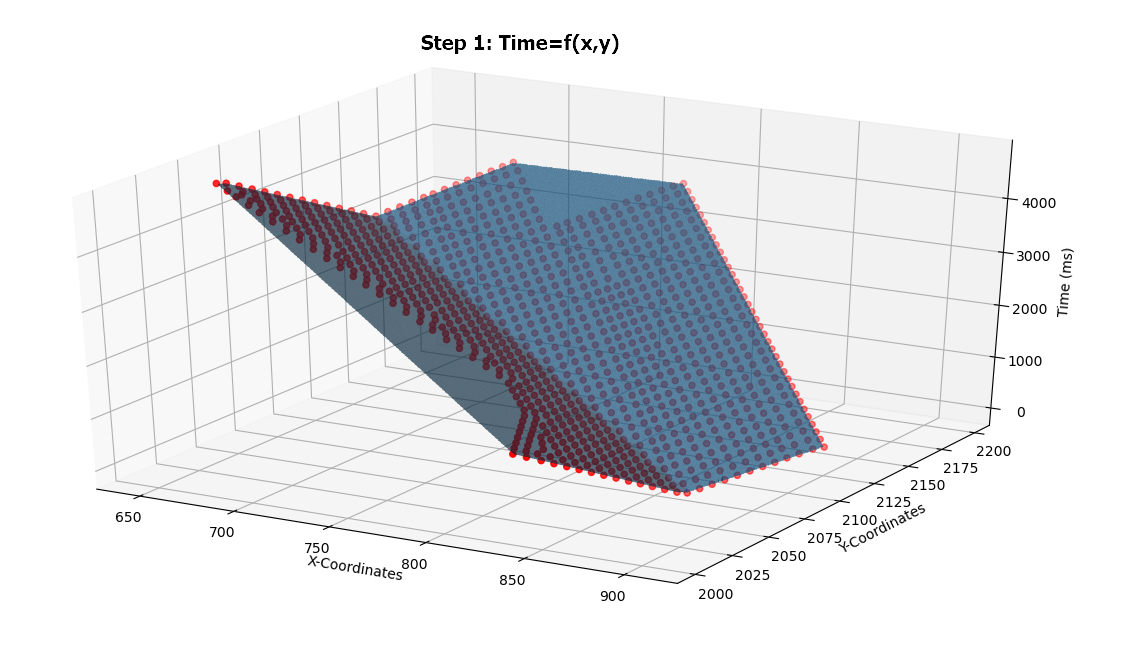

I have data on a (x,y) grid with scalar values (time) at each point.

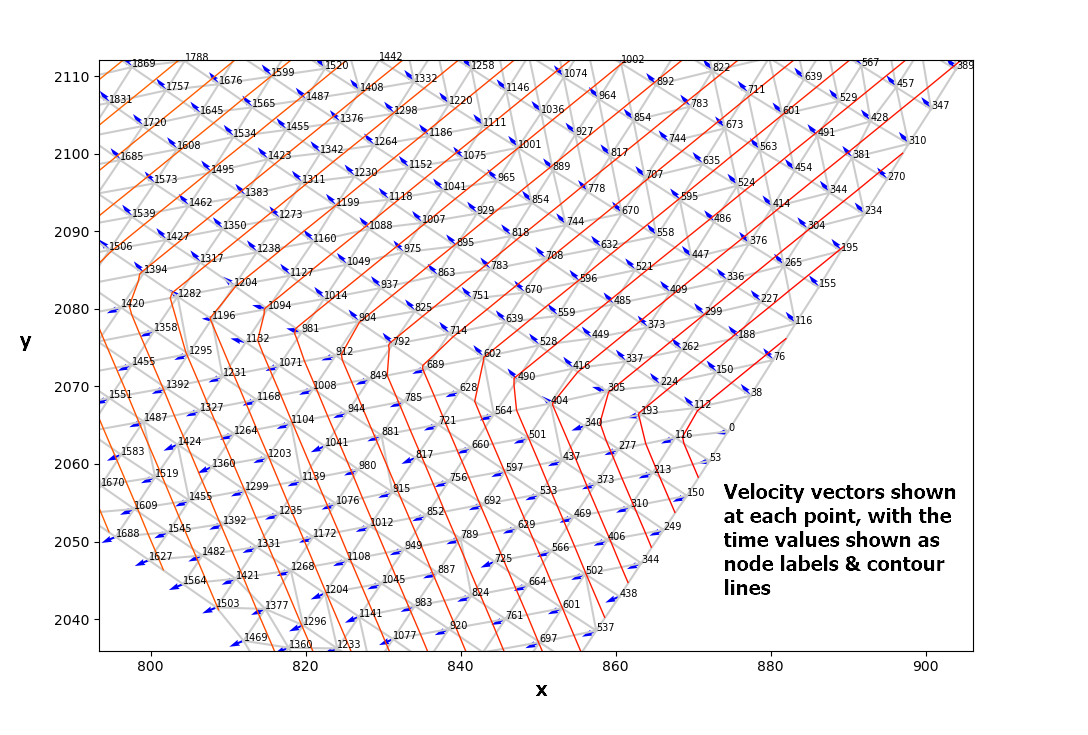

I have used the numpy gradient function and linear interpolation to determine the gradient vector Velocity (Vx,Vy) at each point (See below).

I have achieved this by:

    # LinearTriInterpolator applied to a Delaunay triangular mesh
    LTI = LinearTriInterpolator(masked_triang, time_array)

    # Gradient requested at the mesh nodes
    (Vx, Vy) = LTI.gradient(triang.x, triang.y)

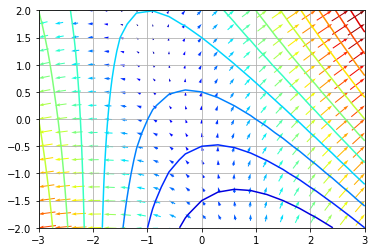

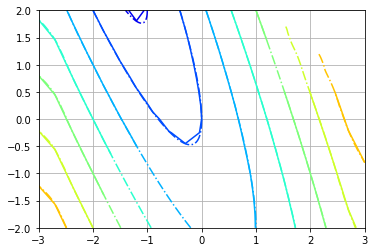

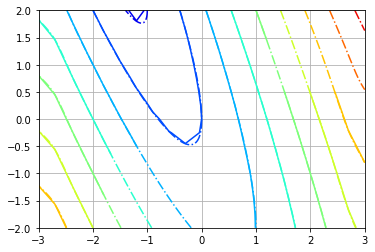

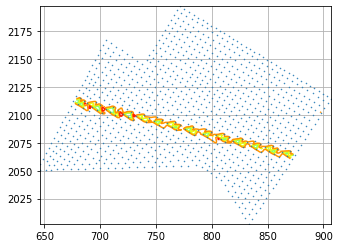

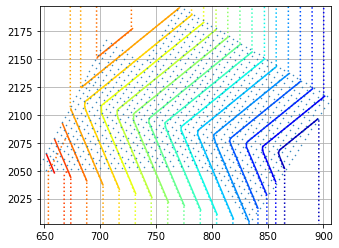

The first image below shows the velocity vectors at each point, and the point labels represent the time value which formed the derivatives (Vx,Vy)

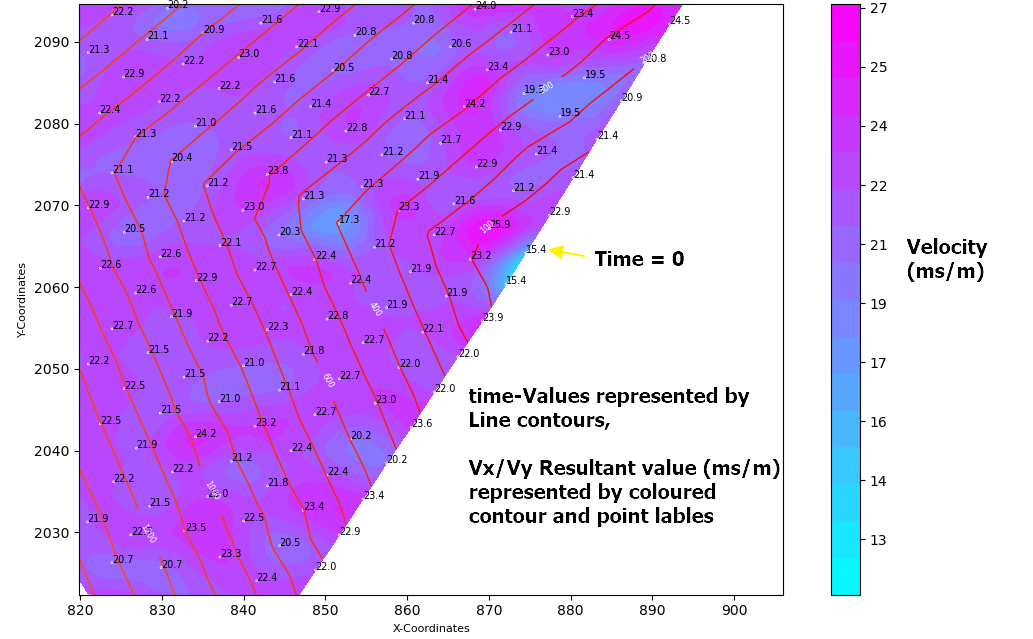

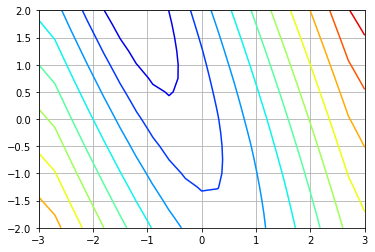

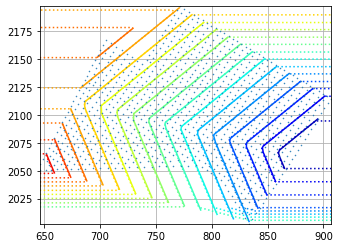

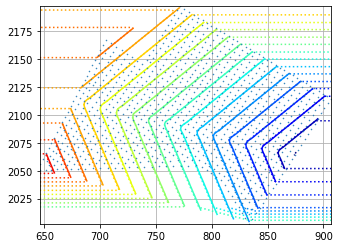

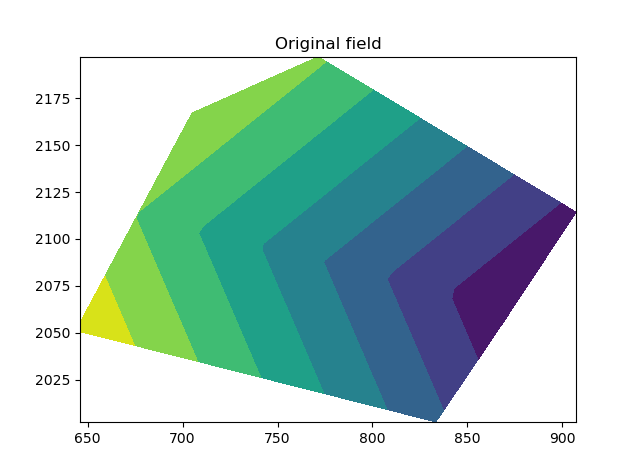

The next image shows the resultant scalar value of the derivatives (Vx,Vy) plotted as a colored contour graph with associated node labels.

So my challenge is:

I need to reverse the process!

Using the gradient vectors (Vx,Vy) or the resultant scalar value to determine the original Time-Value at that point.

Is this possible?

Knowing that the numpy.gradient function is computed using second-order accurate central differences at the interior points and either first- or second-order accurate one-sided (forward or backward) differences at the boundaries, I am sure there is a function which would reverse this process.

I was thinking that taking a line integral from the original point (t=0 at x1,y1) to any point (xi,yi) over the Vx,Vy plane would give me the sum of the velocity components. I could then divide this value by the distance between the two points to get the time taken.

Would this approach work? And if so, which numpy integrate function would be best applied?
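For reference, here is a minimal sketch of that path-integration idea on a regular grid. It is not taken from the data in this question: the surface `t_true` is synthetic, and `scipy.integrate.cumulative_trapezoid` is just one possible choice of integrator. The path runs along the first row in x, then up each column in y, and the result is only defined up to an additive constant (here fixed by the known value at the starting corner).

```python
import numpy as np
from scipy.integrate import cumulative_trapezoid

# Synthetic "arrival time" surface on a regular grid (illustrative only).
xx = np.arange(0.0, 10.0, 0.5)
yy = np.arange(0.0, 8.0, 0.5)
xgrid, ygrid = np.meshgrid(xx, yy)          # shape (len(yy), len(xx))
t_true = 3.0 * xgrid + 0.5 * ygrid**2

# np.gradient returns derivatives in axis order: rows (y) first, then columns (x).
dt_dy, dt_dx = np.gradient(t_true, yy, xx)

# Integrate dt/dx along the first row, then dt/dy up every column.
along_x = cumulative_trapezoid(dt_dx[0, :], xx, initial=0.0)    # shape (nx,)
along_y = cumulative_trapezoid(dt_dy, yy, axis=0, initial=0.0)  # shape (ny, nx)

# The gradient loses the additive constant, so one known value is needed.
t_rec = t_true[0, 0] + along_x[np.newaxis, :] + along_y

print("max abs reconstruction error:", np.max(np.abs(t_rec - t_true)))
```

The reconstruction is not exact because np.gradient falls back to one-sided differences at the boundary rows and columns, so some error near the edges is expected with this kind of scheme.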

An example of my data can be found here [http://www.filedropper.com/calculatearrivaltimefromgradientvalues060820]

Your help would be greatly appreciated

EDIT:

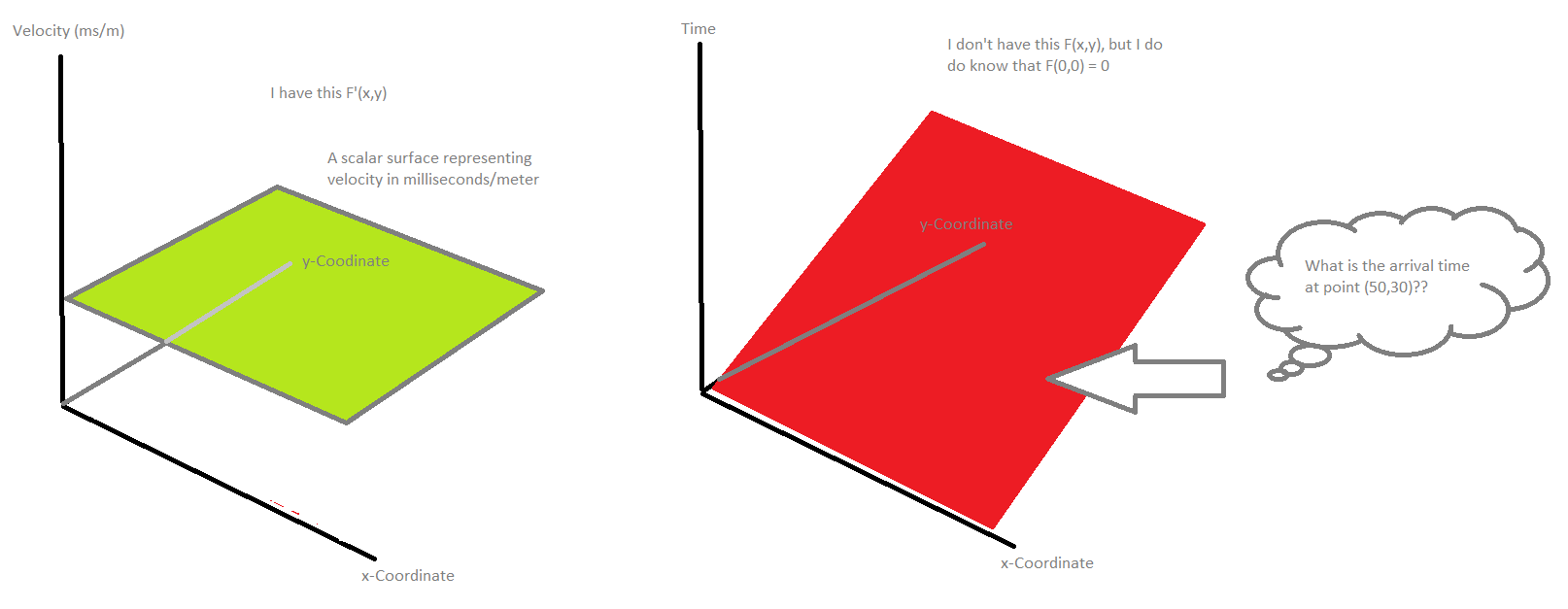

Maybe this simplified drawing will help explain what I'm trying to achieve.

EDIT:

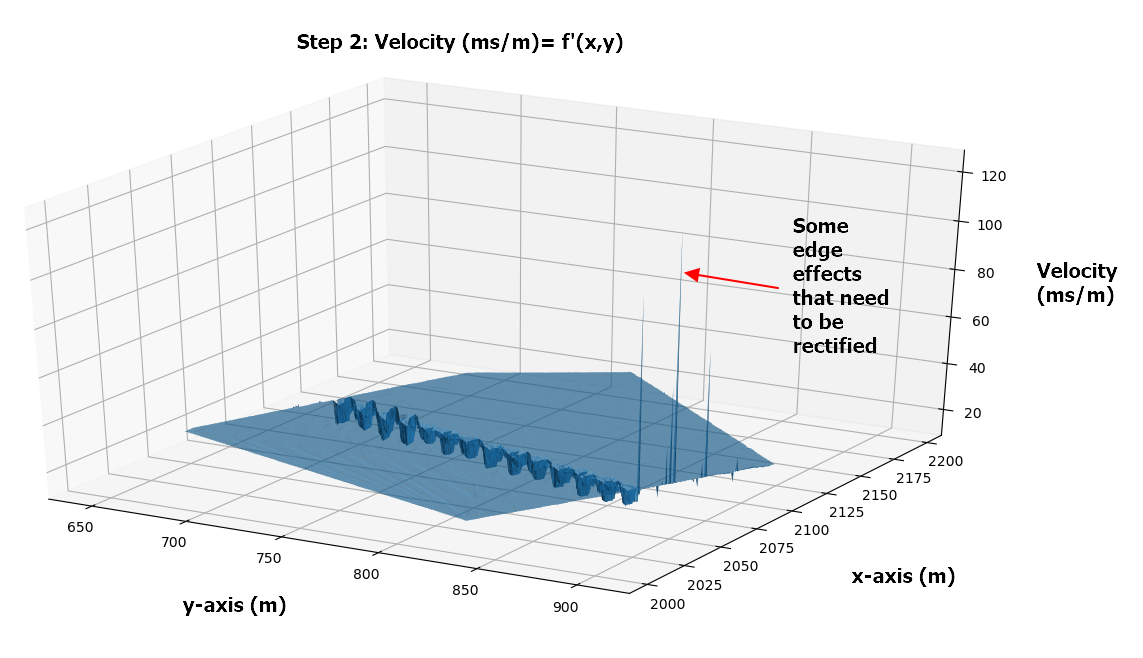

Thanks to @Aguy, who has contributed to this code. I have tried to get a more accurate representation using a meshgrid with a spacing of 0.5 x 0.5 m and calculating the gradient at each mesh point; however, I am not able to integrate it properly. I also have some edge effects which are affecting the results and which I don't know how to correct.

    import numpy as np
    from scipy import interpolate
    from matplotlib import pyplot
    from mpl_toolkits.mplot3d import Axes3D

    # x, y and Arrival_Time are the 1-D arrays of measured point data

    # Create a mesh grid with a spacing of 0.5 x 0.5
    stepx = 0.5
    stepy = 0.5
    xx = np.arange(min(x), max(x), stepx)
    yy = np.arange(min(y), max(y), stepy)
    xgrid, ygrid = np.meshgrid(xx, yy)
    grid_z1 = interpolate.griddata((x, y), Arrival_Time, (xgrid, ygrid), method='linear')  # Interpolating the Time values

    # Format data
    X = np.ravel(xgrid)
    Y = np.ravel(ygrid)
    zs = np.ravel(grid_z1)
    Z = zs.reshape(X.shape)

    # Calculate gradient
    (dy, dx) = np.gradient(grid_z1)  # np.gradient returns the axis-0 (y) derivative first
    Velocity_dx = dx / stepx  # x velocity component ms/m
    Velocity_dy = dy / stepy  # y velocity component ms/m
    Resultant = (Velocity_dx**2 + Velocity_dy**2)**0.5  # Resultant scalar value ms/m
    Resultant = np.ravel(Resultant)

    # Plot original data f(X,Y) on the meshgrid
    fig = pyplot.figure()
    ax = fig.add_subplot(projection='3d')
    ax.scatter(x, y, Arrival_Time, color='r')
    ax.plot_trisurf(X, Y, Z)
    ax.set_xlabel('X-Coordinates')
    ax.set_ylabel('Y-Coordinates')
    ax.set_zlabel('Time (ms)')
    pyplot.show()

    # Plot the derivative f'(X,Y) on the meshgrid
    fig = pyplot.figure()
    ax = fig.add_subplot(projection='3d')
    ax.scatter(X, Y, Resultant, color='r', s=0.2)
    ax.plot_trisurf(X, Y, Resultant)
    ax.set_xlabel('X-Coordinates')
    ax.set_ylabel('Y-Coordinates')
    ax.set_zlabel('Velocity (ms/m)')
    pyplot.show()

    # Integrate to compare the original data input
    dxintegral = np.nancumsum(Velocity_dx, axis=1) * stepx
    dyintegral = np.nancumsum(Velocity_dy, axis=0) * stepy

    valintegral = np.ma.zeros(dxintegral.shape)
    for i in range(len(yy)):
        for j in range(len(xx)):
            # Path: along row 0 to the middle column, up that column to row i,
            # then along row i from the middle column to column j
            valintegral[i, j] = np.ma.sum([dxintegral[0, len(xx) // 2],
                                           dyintegral[i, len(xx) // 2],
                                           dxintegral[i, j],
                                           -dxintegral[i, len(xx) // 2]])
    valintegral = valintegral * np.isfinite(dxintegral)

Now np.gradient(grid_z1) is applied at every mesh node.

In my process I would then analyse the gradient values above and make some adjustments (some unusual edge effects are being created which I need to rectify), and would then integrate the values to get back to a surface which would be very similar to f(x,y) shown above.
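As a side note on argument order: with the default np.meshgrid indexing, y varies along axis 0 (rows) and x along axis 1 (columns), and np.gradient returns one array per axis in that order. The small check below uses a made-up plane `f` (not the data from this question) to show which returned array is which; passing the spacings also removes the need to divide by the step afterwards.

```python
import numpy as np

stepx, stepy = 0.5, 0.5
xx = np.arange(0.0, 5.0, stepx)
yy = np.arange(0.0, 3.0, stepy)
xgrid, ygrid = np.meshgrid(xx, yy)   # shape (len(yy), len(xx))

# Made-up plane with known slopes: df/dx = 2, df/dy = 7
f = 2.0 * xgrid + 7.0 * ygrid

# First result: derivative along axis 0 (rows, i.e. y).
# Second result: derivative along axis 1 (columns, i.e. x).
df_dy, df_dx = np.gradient(f, stepy, stepx)

print(df_dx[0, 0], df_dy[0, 0])
```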

I need some help adjusting the integration function:

    # Integrate to compare the original data input
    dxintegral = np.nancumsum(Velocity_dx, axis=1) * stepx
    dyintegral = np.nancumsum(Velocity_dy, axis=0) * stepy

    valintegral = np.ma.zeros(dxintegral.shape)
    for i in range(len(yy)):
        for j in range(len(xx)):
            # Path: along row 0 to the middle column, up that column to row i,
            # then along row i from the middle column to column j
            valintegral[i, j] = np.ma.sum([dxintegral[0, len(xx) // 2],
                                           dyintegral[i, len(xx) // 2],
                                           dxintegral[i, j],
                                           -dxintegral[i, len(xx) // 2]])
    valintegral = valintegral * np.isfinite(dxintegral)

And now I need to calculate the new 'Time' values at the original (x,y) point locations.
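One possible way to do this last step is to interpolate the gridded surface back onto the scattered points. The sketch below assumes `scipy.interpolate.RegularGridInterpolator` is acceptable for this; the surface and the three sample points are made up for illustration, and only the names `xx`, `yy`, `x`, `y` and `valintegral` mirror the code above.

```python
import numpy as np
from scipy.interpolate import RegularGridInterpolator

# Stand-in for the integrated surface on the 0.5 x 0.5 mesh (illustrative only).
xx = np.arange(0.0, 10.0, 0.5)
yy = np.arange(0.0, 8.0, 0.5)
xgrid, ygrid = np.meshgrid(xx, yy)
valintegral = 3.0 * xgrid + 2.0 * ygrid

# Hypothetical original point locations.
x = np.array([1.2, 4.7, 8.9])
y = np.array([0.3, 5.1, 7.4])

# The grid axes are (rows, columns) = (y, x), so query points are given as (y, x).
# np.ma.filled turns a masked array into a plain array with NaN in masked cells.
interp = RegularGridInterpolator(
    (yy, xx), np.ma.filled(valintegral, np.nan),
    bounds_error=False, fill_value=np.nan)
new_time = interp(np.column_stack([y, x]))

print(new_time)
```

Two caveats under these assumptions: any point whose surrounding grid cell contains a NaN will come back as NaN, and because np.arange excludes its stop value, points at max(x) or max(y) can fall just outside the grid and also return NaN.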

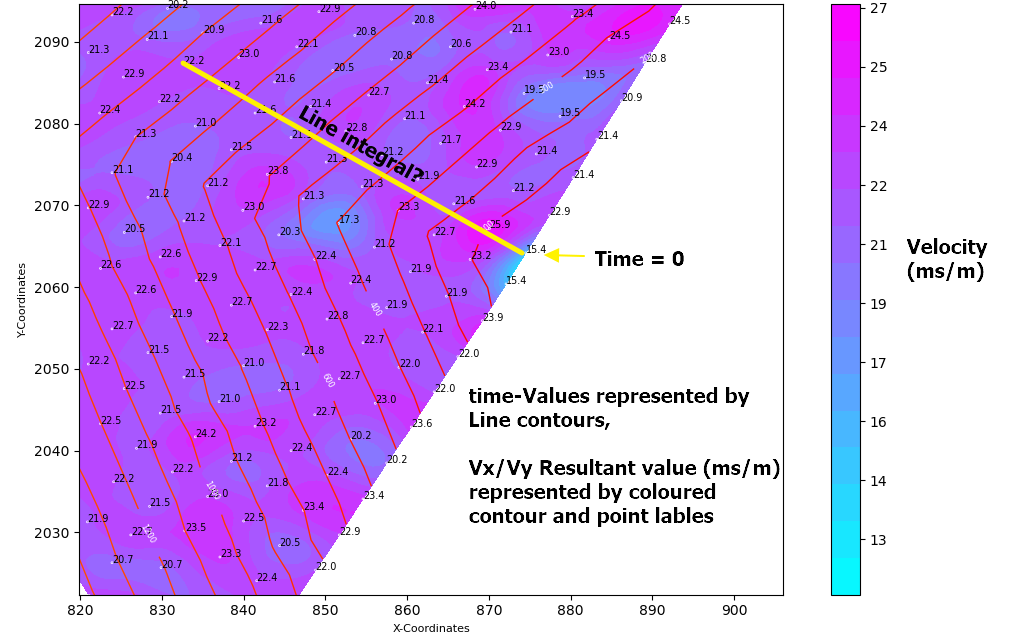

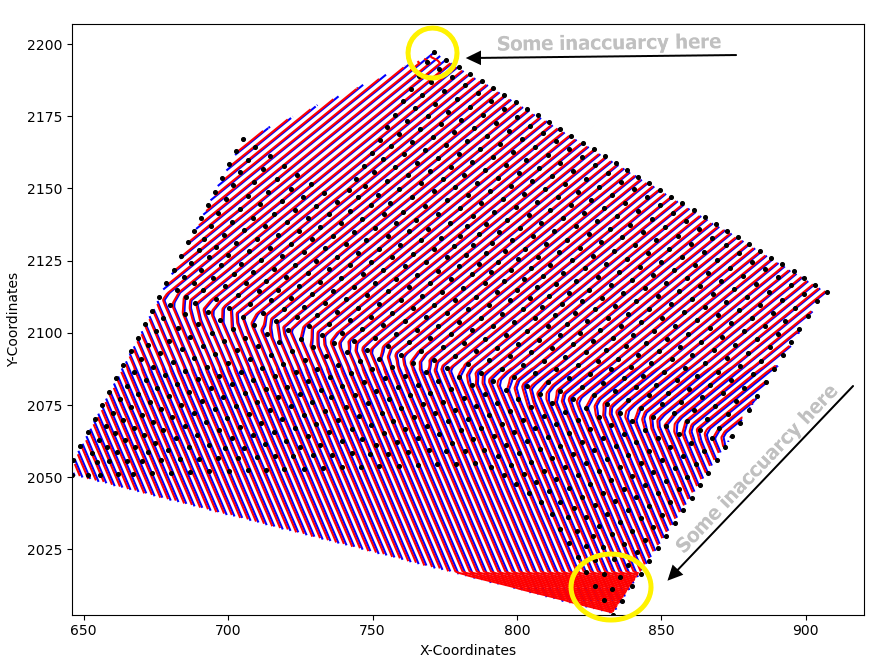

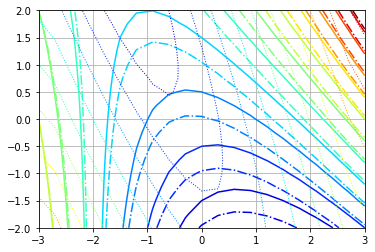

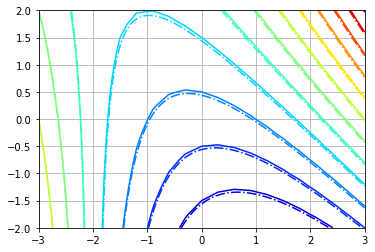

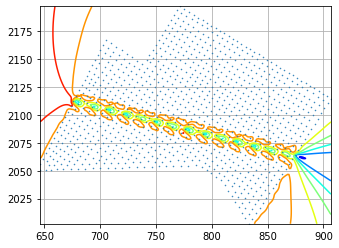

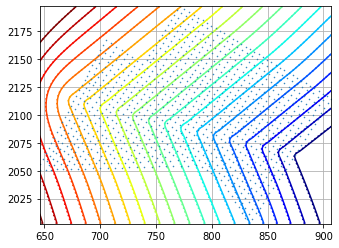

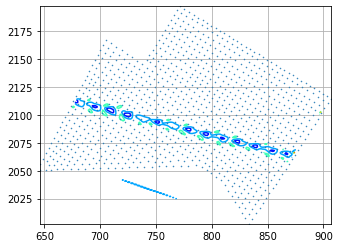

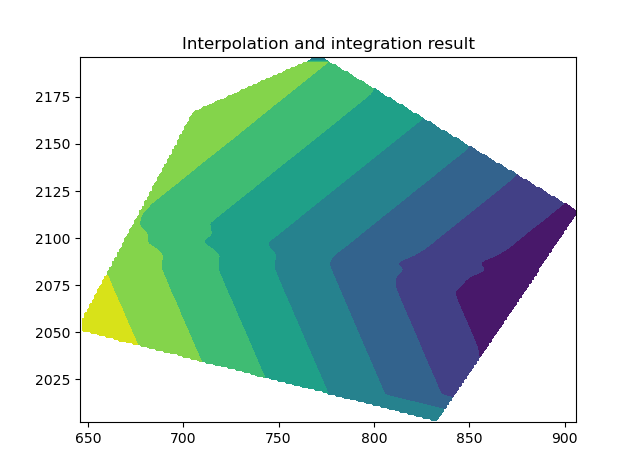

UPDATE (08-09-20) : I am getting some promising results using the help from @Aguy. The results can be seen below (with the blue contours representing the original data, and the red contours representing the integrated values).

I am still working on an integration approach which can remove the inaccuracies in the areas near min(y) and max(y).

    from matplotlib.tri import (Triangulation, UniformTriRefiner,
                                CubicTriInterpolator, LinearTriInterpolator,
                                TriInterpolator, TriAnalyzer)
    import pandas as pd
    from scipy.interpolate import griddata
    import matplotlib.pyplot as plt
    import numpy as np
    from scipy import interpolate

    #-------------------------------------------------------------------------
    # STEP 1: Import data from Excel file, and set variables
    #-------------------------------------------------------------------------
    df_initial = pd.read_excel(
        r'C:\Users\morga\PycharmProjects\venv\Development\Trial'
        r'.xlsx')

Input data can be found here (link).

    df_initial = df_initial.sort_values(by='Delay', ascending=True)  # Update dataframe and sort by Delay
    x = df_initial['X'].to_numpy()
    y = df_initial['Y'].to_numpy()
    Arrival_Time = df_initial['Delay'].to_numpy()

    # Create a mesh grid with a spacing of 0.5 x 0.5
    stepx = 0.5
    stepy = 0.5
    xx = np.arange(min(x), max(x), stepx)
    yy = np.arange(min(y), max(y), stepy)
    xgrid, ygrid = np.meshgrid(xx, yy)
    grid_z1 = interpolate.griddata((x, y), Arrival_Time, (xgrid, ygrid), method='linear')  # Interpolating the Time values

    # Calculate gradient (velocity ms/m)
    (dy, dx) = np.gradient(grid_z1)  # Find gradient for points on meshgrid
    Velocity_dx = dx / stepx  # x velocity component ms/m
    Velocity_dy = dy / stepy  # y velocity component ms/m

    # Integrate to compare the original data input
    dxintegral = np.nancumsum(Velocity_dx, axis=1) * stepx
    dyintegral = np.nancumsum(Velocity_dy, axis=0) * stepy

    valintegral = np.ma.zeros(dxintegral.shape)  # Array of zeros with the same shape as dxintegral
    for i in range(len(yy)):
        for j in range(len(xx)):
            valintegral[i, j] = np.ma.sum(
                [dxintegral[0, len(xx) // 2], dyintegral[i, len(xx) // 2], dxintegral[i, j], -dxintegral[i, len(xx) // 2]])
    valintegral[np.isnan(dx)] = np.nan  # Blank out nodes where the gradient is undefined
    min_value = np.nanmin(valintegral)
    valintegral = valintegral - min_value  # Shift so that the minimum value is zero

    # Plot results
    fig = plt.figure()
    ax = fig.add_subplot()
    ax.scatter(x, y, color='black', s=7, zorder=3)
    ax.set_xlabel('X-Coordinates')
    ax.set_ylabel('Y-Coordinates')
    ax.contour(xgrid, ygrid, valintegral, levels=50, colors='red', zorder=2)
    ax.contour(xgrid, ygrid, grid_z1, levels=50, colors='blue', zorder=1)
    ax.set_aspect('equal')
    plt.show()
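A different technique from the single-path cumulative sum above, offered only as a sketch: solve for all node values at once in a least-squares sense, so that no single integration path carries its errors across the whole grid. The function name `integrate_gradient_lsq` is hypothetical, the test surface is synthetic, and the sketch assumes the nodes with finite gradients form one connected region (otherwise the normal equations become singular).

```python
import numpy as np
from scipy.sparse import coo_matrix
from scipy.sparse.linalg import spsolve


def integrate_gradient_lsq(gx, gy, stepx, stepy):
    """Least-squares surface t with dt/dx ~ gx and dt/dy ~ gy (NaN = no data).

    The result is anchored to 0 at the first valid node (row-major order).
    """
    ny, nx = gx.shape
    valid = np.isfinite(gx) & np.isfinite(gy)
    n_nodes = int(valid.sum())
    index = -np.ones((ny, nx), dtype=int)
    index[valid] = np.arange(n_nodes)

    # One equation t[b] - t[a] = increment per pair of adjacent valid nodes,
    # with the increment taken from the trapezoidal rule on the gradient.
    h = valid[:, :-1] & valid[:, 1:]                       # horizontal pairs
    a_h, b_h = index[:, :-1][h], index[:, 1:][h]
    d_h = 0.5 * (gx[:, :-1][h] + gx[:, 1:][h]) * stepx
    v = valid[:-1, :] & valid[1:, :]                       # vertical pairs
    a_v, b_v = index[:-1, :][v], index[1:, :][v]
    d_v = 0.5 * (gy[:-1, :][v] + gy[1:, :][v]) * stepy

    a = np.concatenate([a_h, a_v])
    b = np.concatenate([b_h, b_v])
    n_eq = a.size
    eq = np.arange(n_eq)

    # The final row pins node 0 to zero, fixing the free additive constant.
    rows = np.concatenate([eq, eq, [n_eq]])
    cols = np.concatenate([a, b, [0]])
    vals = np.concatenate([-np.ones(n_eq), np.ones(n_eq), [1.0]])
    rhs = np.concatenate([d_h, d_v, [0.0]])
    A = coo_matrix((vals, (rows, cols)), shape=(n_eq + 1, n_nodes)).tocsr()

    # Solve the normal equations A^T A t = A^T rhs with a direct sparse solver.
    sol = spsolve((A.T @ A).tocsc(), A.T @ rhs)

    t = np.full((ny, nx), np.nan)
    t[valid] = sol
    return t


# Synthetic check: known surface, gradient via np.gradient, one corner blanked.
xx = np.arange(0.0, 10.0, 0.5)
yy = np.arange(0.0, 8.0, 0.5)
xgrid, ygrid = np.meshgrid(xx, yy)
t_true = 3.0 * xgrid + 0.5 * ygrid**2
gy, gx = np.gradient(t_true, 0.5, 0.5)
gx[:2, :2] = np.nan                      # pretend this corner has no data
t_rec = integrate_gradient_lsq(gx, gy, 0.5, 0.5)
diff = t_rec - t_true                    # should be close to a constant offset
print("spread of (reconstructed - true):", np.nanmax(diff) - np.nanmin(diff))
```

Whether this actually reduces the errors near min(y) and max(y) for the real data is not something the sketch can confirm; it only shows the mechanics on a surface where the answer is known.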

cumsum? – Skyway