The problem

I am currently working on a project that I sadly can't share with you. The project is about hyper-parameter optimization for neural networks, and it requires that I train multiple neural network models (more than I can store on my GPU) in parallel. The network architectures stay the same, but the network parameters and hyper-parameters are subjected to change between each training interval. I am currently achieving this using PyTorch on a linux environment in order to allow my NVIDIA GTX 1660 (6GB RAM) to use the multiprocessing feature that PyTorch provides.

Code (simplified):

def training_function(checkpoint):

load(checkpoint)

train(checkpoint)

unload(checkpoint)

for step in range(training_steps):

trained_checkpoints = list()

for trained_checkpoint in pool.imap_unordered(training_function, checkpoints):

trained_checkpoints.append(trained_checkpoint)

for optimized_checkpoint in optimize(trained_checkpoints):

checkpoints.update(optimized_checkpoint)

I currently test with a population of 30 neural networks (i.e. 30 checkpoints) with the MNIST and FashionMNIST datasets which consists of 70 000 (50k training, 10k validation, 10k testing) 28x28 images with 1 channel each respectively. The network I train is a simple Lenet5 implementation.

I use a torch.multiprocessing pool and allow 7 processes to be spawned. Each process uses some of the GPU memory available just to initialize the CUDA environment in each process. After training, the checkpoints are adapted with my hyper-parameter optimization technique.

The load function in the training_function loads the model- and optimizer state (holds the network parameter tensors) from a local file into GPU memory using torch.load. The unload saves the newly trained states back to file using torch.save and deletes them from memory. I do this because PyTorch will only detach GPU tensors when no variable is referencing them. I have to do this because I have limited GPU memory.

The current setup works, but each CUDA initialization occupies over 700MB of GPU RAM, and so I am interested if there are other ways I could do this that may use less memory without a penalty to efficiency.

My attempts

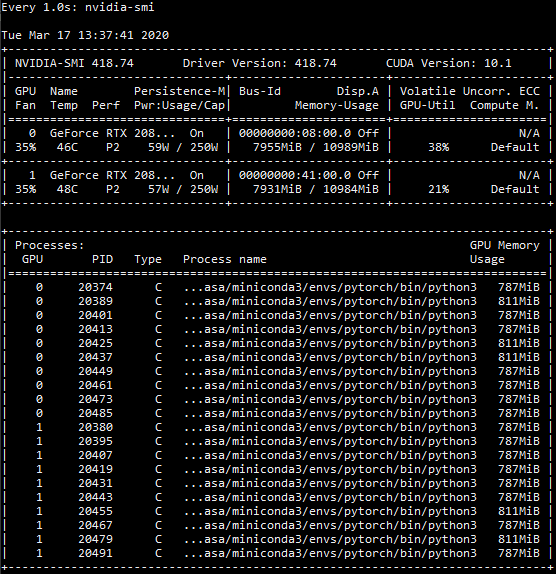

I suspected I could use a thread pool in order to save some memory, and it did. By spawning 7 threads instead of 7 processes, CUDA was only initialized once, which saved almost half of my memory. However, this lead to a new problem in which the GPU only utilized approx. 30% utilization according to nvidia-smi that I am monitoring in a separate linux terminal. Without threads, I get around 85-90% utilization.

I also messed around with torch.multiprocessing.set_sharing_strategy which is currently set to 'file_descriptor', but with no luck.

My questions

- Is there a better way to work with multiple model- and optimizer states without saving and loading them to files while training? I have tried to move the model to CPU using

model.cpu()before saving the state_dict, but this did not work in my implementation (memory leaks). - Is there an efficient way I can train multiple neural networks at the same time that uses less GPU memory? When searching the web, I only find references to

nn.DataParallelwhich trains the same model over multiple GPUs by copying it to each GPU. This does not work for my problem.

I will soon have access to multiple, more powerful GPUs with more memory, and I suspect this problem will be less annoying then, but I wouldn't be surprised if there is a better solution I am not getting.

Update (09.03.2020)

For any future readers, if you set out to do something similar to the pseudo code displayed above, and you plan on using multiple GPUs, please make sure to create one multiprocessing pool for each GPU device. Pools don't execute functions in order with the underlying processes it contains, and so you will end up initializing CUDA multiple times on the same process, wasting memory.

Another important note is that while you may be passing the device (e.g. 'cuda:1') to every torch.cuda-function, you may discover that torch does something with the default cuda device 'cuda:0' somewhere in the code, initializing CUDA on that device for every process, which wastes memory on an unwanted and non-needed CUDA initialization. I fixed this issue by using with torch.cuda.device(device_id) that encapsulate the entire training_function.

I ended up not using multiprocessing pools and instead defined my own custom process class that holds the device and training function. This means I have to maintain in-queues for each device-process, but they all share the same out-queue, meaning I can retrieve the results the moment they are available. I figured writing a custom process class was simpler than writing a custom pool class. I desperately tried to keep using pools as they are easily maintained, but I had to use multiple imap-functions, and so the results were not obtainable one at a time, which lead to a less efficient training-loop.

I am now successfully training on multiple GPUs, but my questions posted above still remains unanswered.

Update (10.03.2020)

I have implemented another way to store model- and optimizer statedicts outside of GPU RAM. I have written function that replaces every tensor in the dicts with it's .to('cpu') equivalent. This costs me some CPU memory, but it is more reliable than storing local files.

Update (11.06.2020)

I have still not found a different approach that leads to fewer CUDA initializations while maintaining the same processing speed. From what I've read and come to understand, PyTorch does not infer too much with how CUDA is operating, and leaves that up to NVIDIA.

I have ended up using a pool of custom, device-specific processes, called Workers, that is maintained by my custom pool class (more about this above). In addition, I let each of these Workers take in one or more checkpoints as well as the function that processes them (training, testing, hp optimization) via a Queue. These checkpoints are then processed simultaneously via a python multiprocessing ThreadPool in each Worker and the results are then returned one by one via the return Queue the moment they are ready.

This gives me the parallel procedure I was needing, but the memory issue is still there. Due to time constraints, I have come to terms with it for now.

Final update (03.08.2020)

If anyone is interested, the source code and master thesis for this work is available here.

result = pool.imap(function, iterator)– Clearheaded