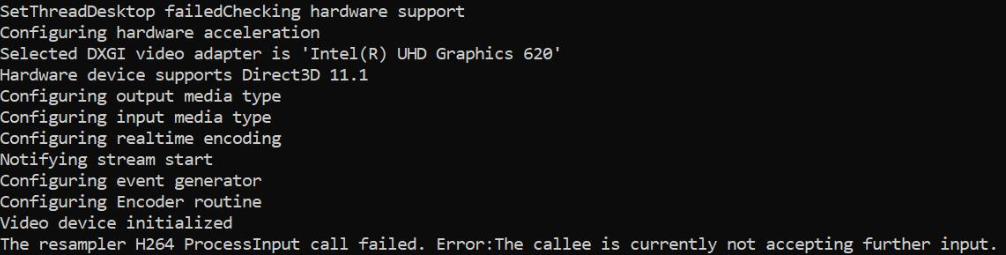

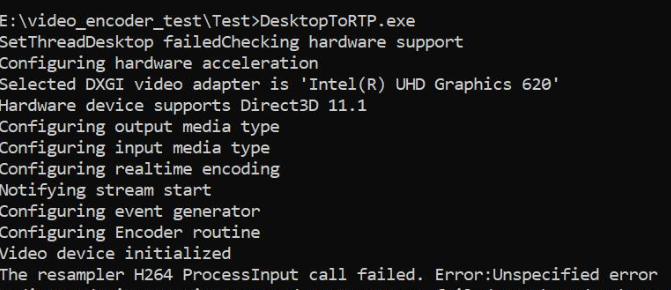

I'm capturing the desktop with the Desktop Duplication API, converting the frames from RGBA to NV12 on the GPU, and feeding them to a Media Foundation hardware H.264 MFT. This works with Nvidia graphics and with software encoders, but fails when only the Intel graphics hardware MFT is available. The same code works on the same Intel machine if I fall back to the software MFT. I have also confirmed that the encoding really is done in hardware on the Nvidia machines.

On Intel graphics, the MFT raises MEError ("Unspecified error") right after the first sample is fed, and subsequent calls to ProcessInput (made when the event generator signals METransformNeedInput) return "The callee is currently not accepting further input". Occasionally the MFT consumes a few more samples before these errors appear. This behavior is confusing: I feed a sample only when the event generator signals METransformNeedInput asynchronously through IMFAsyncCallback, and I check whether METransformHaveOutput is signaled as soon as a sample is fed. It baffles me, because the same asynchronous logic works with the Nvidia hardware MFT and the Microsoft software encoders.

There is also a similar unresolved question on the Intel forum. My code is similar to the one in that thread, except that I also set a D3D device manager on the encoder, as shown below.

There are also three other Stack Overflow threads reporting a similar issue with no solution given ("MFTransform encoder->ProcessInput returns E_FAIL", "How to create IMFSample from D11 texture for Intel MFT encoder", and "Asynchronous MFT is not sending MFTransformHaveOutput Event (Intel Hardware MJPEG Decoder MFT)"). I have tried every option I could find, with no improvement.

The color converter code is taken from the Intel Media SDK samples. I have also uploaded my complete code here.
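For cross-checking what a GPU converter writes, a CPU reference can help on small frames. The following is only a minimal sketch and not the Intel Media SDK converter: `rgbaToNv12` is a hypothetical name, the input is assumed to be tightly packed RGBA with even dimensions and no row padding, and the integer BT.601 limited-range coefficients are an assumption about which color matrix is wanted.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical CPU reference: tightly packed RGBA8 in, tightly packed NV12 out.
// NV12 layout: width*height luma bytes, then one interleaved (U, V) pair per 2x2 pixel block.
// Coefficients are the common integer BT.601 limited-range approximation (an assumption).
std::vector<std::uint8_t> rgbaToNv12(const std::vector<std::uint8_t>& rgba, int width, int height) {
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    assert(rgba.size() == static_cast<std::size_t>(width) * height * 4);

    const std::size_t lumaSize = static_cast<std::size_t>(width) * height;
    std::vector<std::uint8_t> nv12(lumaSize + lumaSize / 2);

    // Luma: one byte per pixel.
    for (std::size_t i = 0; i < lumaSize; ++i) {
        const int r = rgba[i * 4 + 0], g = rgba[i * 4 + 1], b = rgba[i * 4 + 2];
        nv12[i] = static_cast<std::uint8_t>(((66 * r + 129 * g + 25 * b + 128) >> 8) + 16);
    }

    // Chroma: average each 2x2 block, then write U and V interleaved.
    std::size_t uv = lumaSize;
    for (int y = 0; y < height; y += 2) {
        for (int x = 0; x < width; x += 2) {
            int r = 0, g = 0, b = 0;
            for (int dy = 0; dy < 2; ++dy) {
                for (int dx = 0; dx < 2; ++dx) {
                    const std::size_t p =
                        (static_cast<std::size_t>(y + dy) * width + (x + dx)) * 4;
                    r += rgba[p + 0];
                    g += rgba[p + 1];
                    b += rgba[p + 2];
                }
            }
            r = (r + 2) / 4;
            g = (g + 2) / 4;
            b = (b + 2) / 4;
            // Adding 32768 (128 << 8) before the shift keeps the value non-negative.
            nv12[uv++] = static_cast<std::uint8_t>((-38 * r - 74 * g + 112 * b + 128 + 32768) >> 8);
            nv12[uv++] = static_cast<std::uint8_t>((112 * r - 94 * g - 18 * b + 128 + 32768) >> 8);
        }
    }
    return nv12;
}
```

Comparing a few bytes of this output against a staging-texture readback of the GPU result would need to account for the texture's row pitch, which this sketch deliberately ignores.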

Method to set the D3D manager:

    void SetD3dManager() {
        HRESULT hr = S_OK;

        if (!deviceManager) {
            // Create device manager
            hr = MFCreateDXGIDeviceManager(&resetToken, &deviceManager);
        }

        if (SUCCEEDED(hr))
        {
            if (!pD3dDevice) {
                pD3dDevice = GetDeviceDirect3D(0);
            }
        }

        if (pD3dDevice) {
            // NOTE: Getting ready for multi-threaded operation
            const CComQIPtr<ID3D10Multithread> pMultithread = pD3dDevice;
            pMultithread->SetMultithreadProtected(TRUE);

            hr = deviceManager->ResetDevice(pD3dDevice, resetToken);
            CHECK_HR(_pTransform->ProcessMessage(MFT_MESSAGE_SET_D3D_MANAGER, reinterpret_cast<ULONG_PTR>(deviceManager.p)), "Failed to set device manager.");
        }
        else {
            cout << "Failed to get d3d device";
        }
    }

GetDeviceDirect3D:

    CComPtr<ID3D11Device> GetDeviceDirect3D(UINT idxVideoAdapter)
    {
        // Create DXGI factory:
        CComPtr<IDXGIFactory1> dxgiFactory;
        DXGI_ADAPTER_DESC1 dxgiAdapterDesc;

        // Direct3D feature level codes and names:
        struct KeyValPair { int code; const char* name; };

        const KeyValPair d3dFLevelNames[] =
        {
            KeyValPair{ D3D_FEATURE_LEVEL_9_1, "Direct3D 9.1" },
            KeyValPair{ D3D_FEATURE_LEVEL_9_2, "Direct3D 9.2" },
            KeyValPair{ D3D_FEATURE_LEVEL_9_3, "Direct3D 9.3" },
            KeyValPair{ D3D_FEATURE_LEVEL_10_0, "Direct3D 10.0" },
            KeyValPair{ D3D_FEATURE_LEVEL_10_1, "Direct3D 10.1" },
            KeyValPair{ D3D_FEATURE_LEVEL_11_0, "Direct3D 11.0" },
            KeyValPair{ D3D_FEATURE_LEVEL_11_1, "Direct3D 11.1" },
        };

        // Feature levels for Direct3D support
        const D3D_FEATURE_LEVEL d3dFeatureLevels[] =
        {
            D3D_FEATURE_LEVEL_11_1,
            D3D_FEATURE_LEVEL_11_0,
            D3D_FEATURE_LEVEL_10_1,
            D3D_FEATURE_LEVEL_10_0,
            D3D_FEATURE_LEVEL_9_3,
            D3D_FEATURE_LEVEL_9_2,
            D3D_FEATURE_LEVEL_9_1,
        };

        constexpr auto nFeatLevels = static_cast<UINT> ((sizeof d3dFeatureLevels) / sizeof(D3D_FEATURE_LEVEL));

        CComPtr<IDXGIAdapter1> dxgiAdapter;
        D3D_FEATURE_LEVEL featLevelCodeSuccess;
        CComPtr<ID3D11Device> d3dDx11Device;

        std::wstring_convert<std::codecvt_utf8<wchar_t>> transcoder;

        HRESULT hr = CreateDXGIFactory1(IID_PPV_ARGS(&dxgiFactory));
        CHECK_HR(hr, "Failed to create DXGI factory");

        // Get a video adapter (capture the result so the check below is meaningful):
        hr = dxgiFactory->EnumAdapters1(idxVideoAdapter, &dxgiAdapter);
        CHECK_HR(hr, "Failed to enumerate DXGI video adapter");

        // Get video adapter description:
        hr = dxgiAdapter->GetDesc1(&dxgiAdapterDesc);
        CHECK_HR(hr, "Failed to retrieve DXGI video adapter description");

        std::cout << "Selected DXGI video adapter is \'"
            << transcoder.to_bytes(dxgiAdapterDesc.Description) << '\'' << std::endl;

        // Create Direct3D device:
        hr = D3D11CreateDevice(
            dxgiAdapter,
            D3D_DRIVER_TYPE_UNKNOWN,
            nullptr,
            (0 * D3D11_CREATE_DEVICE_SINGLETHREADED) | D3D11_CREATE_DEVICE_VIDEO_SUPPORT,
            d3dFeatureLevels,
            nFeatLevels,
            D3D11_SDK_VERSION,
            &d3dDx11Device,
            &featLevelCodeSuccess,
            nullptr
        );

        // Might have failed for lack of Direct3D 11.1 runtime:
        if (hr == E_INVALIDARG)
        {
            // Try again without Direct3D 11.1:
            hr = D3D11CreateDevice(
                dxgiAdapter,
                D3D_DRIVER_TYPE_UNKNOWN,
                nullptr,
                (0 * D3D11_CREATE_DEVICE_SINGLETHREADED) | D3D11_CREATE_DEVICE_VIDEO_SUPPORT,
                d3dFeatureLevels + 1,
                nFeatLevels - 1,
                D3D11_SDK_VERSION,
                &d3dDx11Device,
                &featLevelCodeSuccess,
                nullptr
            );
        }

        // featLevelCodeSuccess is only valid if device creation succeeded:
        CHECK_HR(hr, "Failed to create Direct3D device");

        // Get name of Direct3D feature level that succeeded upon device creation:
        std::cout << "Hardware device supports " << std::find_if(
            d3dFLevelNames,
            d3dFLevelNames + nFeatLevels,
            [featLevelCodeSuccess](const KeyValPair& entry)
            {
                return entry.code == featLevelCodeSuccess;
            }
        )->name << std::endl;

    done:
        return d3dDx11Device;
    }

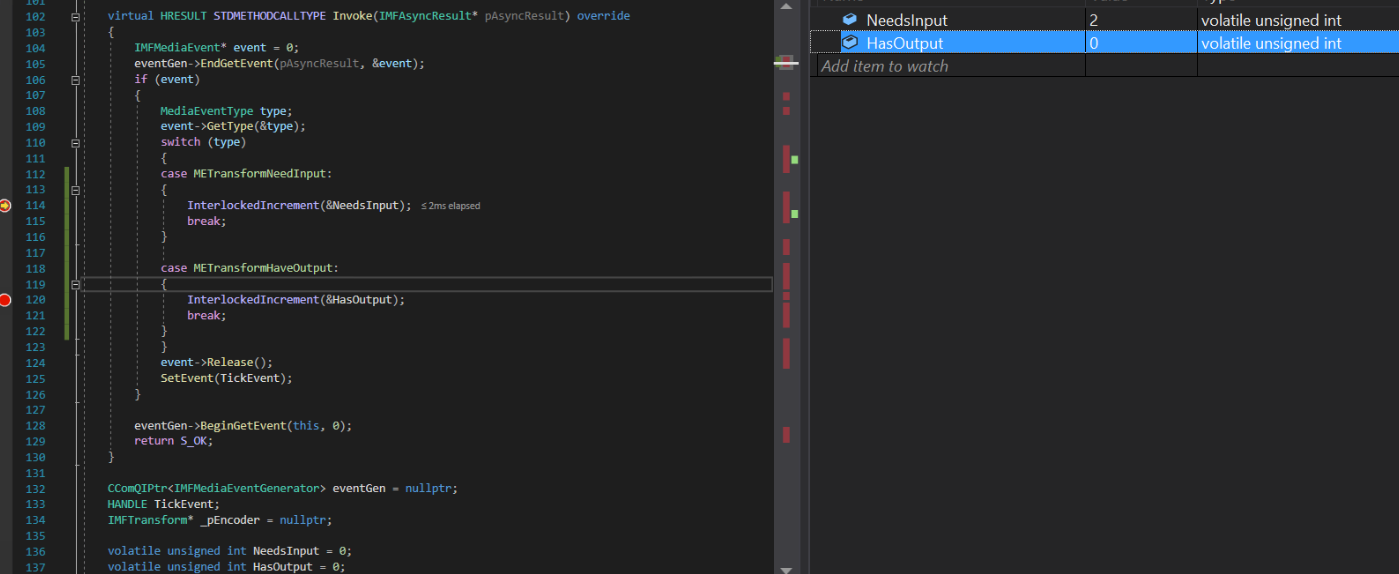

Async callback implementation:

    struct EncoderCallbacks : IMFAsyncCallback
    {
        EncoderCallbacks(IMFTransform* encoder)
        {
            TickEvent = CreateEvent(0, FALSE, FALSE, 0);
            _pEncoder = encoder;
        }

        ~EncoderCallbacks()
        {
            eventGen = nullptr;
            CloseHandle(TickEvent);
        }

        bool Initialize() {
            _pEncoder->QueryInterface(IID_PPV_ARGS(&eventGen));

            if (eventGen) {
                eventGen->BeginGetEvent(this, 0);
                return true;
            }

            return false;
        }

        // dummy IUnknown impl
        virtual HRESULT STDMETHODCALLTYPE QueryInterface(REFIID riid, void** ppvObject) override { return E_NOTIMPL; }
        virtual ULONG STDMETHODCALLTYPE AddRef(void) override { return 1; }
        virtual ULONG STDMETHODCALLTYPE Release(void) override { return 1; }

        virtual HRESULT STDMETHODCALLTYPE GetParameters(DWORD* pdwFlags, DWORD* pdwQueue) override
        {
            // we return immediately and don't do anything except signaling another thread
            *pdwFlags = MFASYNC_SIGNAL_CALLBACK;
            *pdwQueue = MFASYNC_CALLBACK_QUEUE_IO;
            return S_OK;
        }

        virtual HRESULT STDMETHODCALLTYPE Invoke(IMFAsyncResult* pAsyncResult) override
        {
            IMFMediaEvent* event = 0;
            eventGen->EndGetEvent(pAsyncResult, &event);

            if (event)
            {
                MediaEventType type;
                event->GetType(&type);

                switch (type)
                {
                case METransformNeedInput: InterlockedIncrement(&NeedsInput); break;
                case METransformHaveOutput: InterlockedIncrement(&HasOutput); break;
                }

                event->Release();
                SetEvent(TickEvent);
            }

            eventGen->BeginGetEvent(this, 0);
            return S_OK;
        }

        CComQIPtr<IMFMediaEventGenerator> eventGen = nullptr;
        HANDLE TickEvent;
        IMFTransform* _pEncoder = nullptr;
        unsigned int NeedsInput = 0;
        unsigned int HasOutput = 0;
    };

Generate Sample method:

    bool GenerateSampleAsync() {
        DWORD processOutputStatus = 0;
        HRESULT mftProcessOutput = S_OK;
        bool frameSent = false;

        // Create sample
        CComPtr<IMFSample> currentVideoSample = nullptr;
        MFT_OUTPUT_STREAM_INFO StreamInfo;

        // wait for any callback to come in
        WaitForSingleObject(_pEventCallback->TickEvent, INFINITE);

        while (_pEventCallback->NeedsInput) {
            if (!currentVideoSample) {
                (pDesktopDuplication)->releaseBuffer();
                (pDesktopDuplication)->cleanUpCurrentFrameObjects();

                bool bTimeout = false;

                if (pDesktopDuplication->GetCurrentFrameAsVideoSample((void**)& currentVideoSample, waitTime, bTimeout, deviceRect, deviceRect.Width(), deviceRect.Height())) {
                    prevVideoSample = currentVideoSample;
                }
                // Feed the previous sample to the encoder in case of no update in display
                else {
                    currentVideoSample = prevVideoSample;
                }
            }

            if (currentVideoSample)
            {
                InterlockedDecrement(&_pEventCallback->NeedsInput);
                _frameCount++;

                CHECK_HR(currentVideoSample->SetSampleTime(mTimeStamp), "Error setting the video sample time.");
                CHECK_HR(currentVideoSample->SetSampleDuration(VIDEO_FRAME_DURATION), "Error setting the video sample duration.");
                CHECK_HR(_pTransform->ProcessInput(inputStreamID, currentVideoSample, 0), "The resampler H264 ProcessInput call failed.");

                mTimeStamp += VIDEO_FRAME_DURATION;
            }
        }

        while (_pEventCallback->HasOutput) {
            CComPtr<IMFSample> mftOutSample = nullptr;
            CComPtr<IMFMediaBuffer> pOutMediaBuffer = nullptr;

            InterlockedDecrement(&_pEventCallback->HasOutput);

            CHECK_HR(_pTransform->GetOutputStreamInfo(outputStreamID, &StreamInfo), "Failed to get output stream info from H264 MFT.");
            CHECK_HR(MFCreateSample(&mftOutSample), "Failed to create MF sample.");
            CHECK_HR(MFCreateMemoryBuffer(StreamInfo.cbSize, &pOutMediaBuffer), "Failed to create memory buffer.");
            CHECK_HR(mftOutSample->AddBuffer(pOutMediaBuffer), "Failed to add buffer to sample.");

            MFT_OUTPUT_DATA_BUFFER _outputDataBuffer;
            memset(&_outputDataBuffer, 0, sizeof _outputDataBuffer);
            _outputDataBuffer.dwStreamID = outputStreamID;
            _outputDataBuffer.dwStatus = 0;
            _outputDataBuffer.pEvents = nullptr;
            _outputDataBuffer.pSample = mftOutSample;

            mftProcessOutput = _pTransform->ProcessOutput(0, 1, &_outputDataBuffer, &processOutputStatus);

            if (mftProcessOutput != MF_E_TRANSFORM_NEED_MORE_INPUT)
            {
                if (_outputDataBuffer.pSample) {
                    CComPtr<IMFMediaBuffer> buf = NULL;
                    DWORD bufLength;

                    CHECK_HR(_outputDataBuffer.pSample->ConvertToContiguousBuffer(&buf), "ConvertToContiguousBuffer failed.");

                    if (buf) {
                        CHECK_HR(buf->GetCurrentLength(&bufLength), "Get buffer length failed.");

                        BYTE* rawBuffer = NULL;

                        fFrameSize = bufLength;
                        fDurationInMicroseconds = 0;
                        gettimeofday(&fPresentationTime, NULL);

                        buf->Lock(&rawBuffer, NULL, NULL);
                        memmove(fTo, rawBuffer, fFrameSize > fMaxSize ? fMaxSize : fFrameSize);

                        bytesTransfered += bufLength;

                        FramedSource::afterGetting(this);

                        buf->Unlock();

                        frameSent = true;
                    }
                }

                if (_outputDataBuffer.pEvents)
                    _outputDataBuffer.pEvents->Release();
            }
            else if (MF_E_TRANSFORM_STREAM_CHANGE == mftProcessOutput) {
                // some encoders want to renegotiate the output format.
                if (_outputDataBuffer.dwStatus & MFT_OUTPUT_DATA_BUFFER_FORMAT_CHANGE)
                {
                    CComPtr<IMFMediaType> pNewOutputMediaType = nullptr;
                    HRESULT res = _pTransform->GetOutputAvailableType(outputStreamID, 1, &pNewOutputMediaType);

                    res = _pTransform->SetOutputType(0, pNewOutputMediaType, 0);//setting the type again
                    CHECK_HR(res, "Failed to set output type during stream change");
                }
            }
            else {
                HandleFailure();
            }
        }

        return frameSent;
    }
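The timestamp bookkeeping in GenerateSampleAsync (`mTimeStamp += VIDEO_FRAME_DURATION`) relies on Media Foundation's 100-nanosecond time unit. As a minimal sketch, assuming a fixed frame rate given as a numerator/denominator pair, the sample time can be derived from the frame index instead of accumulated. The helper names `frameTime100ns` and `frameDuration100ns` are hypothetical, and how VIDEO_FRAME_DURATION is actually defined in the project is not shown here.

```cpp
#include <cassert>
#include <cstdint>

// Media Foundation expresses sample times and durations in 100-nanosecond units.
constexpr std::int64_t kUnitsPerSecond = 10000000;

// Hypothetical helper: presentation time of frame `frameIndex`
// at fpsNum/fpsDen frames per second, in 100-ns units.
std::int64_t frameTime100ns(std::int64_t frameIndex, std::int64_t fpsNum, std::int64_t fpsDen) {
    assert(frameIndex >= 0 && fpsNum > 0 && fpsDen > 0);
    return frameIndex * kUnitsPerSecond * fpsDen / fpsNum;
}

// Hypothetical helper: duration of frame `frameIndex`, computed as the gap to the
// next frame so that consecutive samples tile the timeline without drift.
std::int64_t frameDuration100ns(std::int64_t frameIndex, std::int64_t fpsNum, std::int64_t fpsDen) {
    return frameTime100ns(frameIndex + 1, fpsNum, fpsDen) - frameTime100ns(frameIndex, fpsNum, fpsDen);
}
```

With a truncated per-frame constant, repeated addition accumulates a small rounding error over time. Whether that matters for this encoder problem is not established; the sketch only makes the time base explicit.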

Create video sample & color conversion:

    bool GetCurrentFrameAsVideoSample(void **videoSample, int waitTime, bool &isTimeout, CRect &deviceRect, int surfaceWidth, int surfaceHeight)
    {
        FRAME_DATA currentFrameData;

        m_LastErrorCode = m_DuplicationManager.GetFrame(&currentFrameData, waitTime, &isTimeout);

        if (!isTimeout && SUCCEEDED(m_LastErrorCode)) {
            m_CurrentFrameTexture = currentFrameData.Frame;

            if (!pDstTexture) {
                D3D11_TEXTURE2D_DESC desc;
                ZeroMemory(&desc, sizeof(D3D11_TEXTURE2D_DESC));

                desc.Format = DXGI_FORMAT_NV12;
                desc.Width = surfaceWidth;
                desc.Height = surfaceHeight;
                desc.MipLevels = 1;
                desc.ArraySize = 1;
                desc.SampleDesc.Count = 1;
                desc.CPUAccessFlags = 0;
                desc.Usage = D3D11_USAGE_DEFAULT;
                desc.BindFlags = D3D11_BIND_RENDER_TARGET;

                m_LastErrorCode = m_Id3d11Device->CreateTexture2D(&desc, NULL, &pDstTexture);
            }

            if (m_CurrentFrameTexture && pDstTexture) {
                // Copy diff area texels to new temp texture
                //m_Id3d11DeviceContext->CopySubresourceRegion(pNewTexture, D3D11CalcSubresource(0, 0, 1), 0, 0, 0, m_CurrentFrameTexture, 0, NULL);

                HRESULT hr = pColorConv->Convert(m_CurrentFrameTexture, pDstTexture);

                if (SUCCEEDED(hr)) {
                    CComPtr<IMFMediaBuffer> pMediaBuffer = nullptr;

                    MFCreateDXGISurfaceBuffer(__uuidof(ID3D11Texture2D), pDstTexture, 0, FALSE, (IMFMediaBuffer**)&pMediaBuffer);

                    if (pMediaBuffer) {
                        CComPtr<IMF2DBuffer> p2DBuffer = NULL;
                        DWORD length = 0;

                        (((IMFMediaBuffer*)pMediaBuffer))->QueryInterface(__uuidof(IMF2DBuffer), reinterpret_cast<void**>(&p2DBuffer));
                        p2DBuffer->GetContiguousLength(&length);
                        (((IMFMediaBuffer*)pMediaBuffer))->SetCurrentLength(length);

                        //MFCreateVideoSampleFromSurface(NULL, (IMFSample**)videoSample);
                        MFCreateSample((IMFSample * *)videoSample);

                        if (videoSample) {
                            (*((IMFSample **)videoSample))->AddBuffer((((IMFMediaBuffer*)pMediaBuffer)));
                        }

                        return true;
                    }
                }
            }
        }

        return false;
    }

The Intel graphics driver on the machine is already up to date.

Only the METransformNeedInput event is ever triggered, yet the encoder complains that it cannot accept any more input. The METransformHaveOutput event has never been triggered.
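The rule being relied on here is that each METransformNeedInput event permits exactly one ProcessInput call. As a minimal, Media Foundation-free sketch of that bookkeeping, the counter can be modeled as a set of credits that are taken atomically, so a credit is never consumed unless one is actually pending. `EventCredits` and `feedWhilePermitted` are hypothetical names used only for illustration, and this model is not a diagnosis of the Intel MFT behavior.

```cpp
#include <atomic>
#include <cassert>

// Hypothetical model of the NeedInput / HaveOutput counters.
// signal() is what the event callback would call; tryTake() is what the feeding thread calls.
class EventCredits {
public:
    void signal() { count_.fetch_add(1, std::memory_order_release); }

    // Atomically consume one credit; returns false if none is pending.
    bool tryTake() {
        unsigned current = count_.load(std::memory_order_acquire);
        while (current != 0) {
            if (count_.compare_exchange_weak(current, current - 1,
                                             std::memory_order_acq_rel,
                                             std::memory_order_acquire)) {
                return true;
            }
            // On failure `current` was reloaded; the loop re-checks it.
        }
        return false;
    }

    unsigned pending() const { return count_.load(std::memory_order_acquire); }

private:
    std::atomic<unsigned> count_{0};
};

// Feed at most one frame per pending credit. A credit is only taken
// when a frame is actually available, so none is spent on a missing sample.
int feedWhilePermitted(EventCredits& needInput, int framesAvailable) {
    assert(framesAvailable >= 0);
    int fed = 0;
    while (fed < framesAvailable && needInput.tryTake()) {
        ++fed;  // a real implementation would call ProcessInput here
    }
    return fed;
}
```

The design point is that checking and decrementing happen in one atomic step, rather than reading the counter in a loop condition and decrementing it later.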

Similar issues reported on the Intel and MSDN forums: 1) https://software.intel.com/en-us/forums/intel-media-sdk/topic/607189 2) https://social.msdn.microsoft.com/Forums/SECURITY/en-US/fe051dd5-b522-4e4b-9cbb-2c06a5450e40/imfsinkwriter-merit-validation-failed-for-mft-intel-quick-sync-video-h264-encoder-mft?forum=mediafoundationdevelopment

Update: I have tried mocking just the input source (by programmatically creating an NV12 sample of an animated rectangle), leaving everything else untouched. This time the Intel encoder doesn't complain, and I even get output samples. However, the output video from the Intel encoder is distorted, whereas the Nvidia encoder works perfectly.
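For reference, a mock source of this kind can be sketched in plain C++ as follows. This is not the code from the project: `Nv12Frame` and `makeMockNv12Frame` are hypothetical names, and the buffer is assumed to be tightly packed (row stride equal to width), which a real D3D11 texture or media buffer does not guarantee.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical container for a tightly packed NV12 image:
// width*height luma bytes followed by width*height/2 interleaved U,V bytes.
struct Nv12Frame {
    int width;
    int height;
    std::vector<std::uint8_t> data;
};

// Builds a black frame with a white square that moves right by 2 pixels per frame
// and wraps around. Dimensions must be even, as NV12 subsamples chroma 2x2.
Nv12Frame makeMockNv12Frame(int width, int height, int frameIndex) {
    assert(width >= 2 && height >= 2 && width % 2 == 0 && height % 2 == 0 && frameIndex >= 0);

    const std::size_t lumaSize = static_cast<std::size_t>(width) * height;
    Nv12Frame frame{width, height, std::vector<std::uint8_t>(lumaSize + lumaSize / 2)};

    // Black background in limited range: Y = 16, neutral chroma U = V = 128.
    const auto lumaEnd = frame.data.begin() + static_cast<std::ptrdiff_t>(lumaSize);
    std::fill(frame.data.begin(), lumaEnd, std::uint8_t{16});
    std::fill(lumaEnd, frame.data.end(), std::uint8_t{128});

    // Square with an even side length, vertically centered on an even row.
    const int rectSize = (std::min(width, height) / 2) & ~1;
    const int span = width - rectSize;               // furthest valid left edge
    const int left = (2 * frameIndex) % (span + 2);  // 0, 2, ..., span, then wraps
    const int top = ((height - rectSize) / 2) & ~1;

    // White square: Y = 235; chroma stays neutral, so only the luma plane changes.
    for (int y = top; y < top + rectSize; ++y) {
        std::uint8_t* row = frame.data.data() + static_cast<std::size_t>(y) * width;
        std::fill(row + left, row + left + rectSize, std::uint8_t{235});
    }
    return frame;
}
```

Because the layout is fully known, such a generator makes it straightforward to compare what goes into the encoder with what comes out, including whether both sides assume the same row stride.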

Furthermore, I'm still getting the ProcessInput error for my original NV12 source with the Intel encoder. I have no issues with the Nvidia MFT or the software encoders.

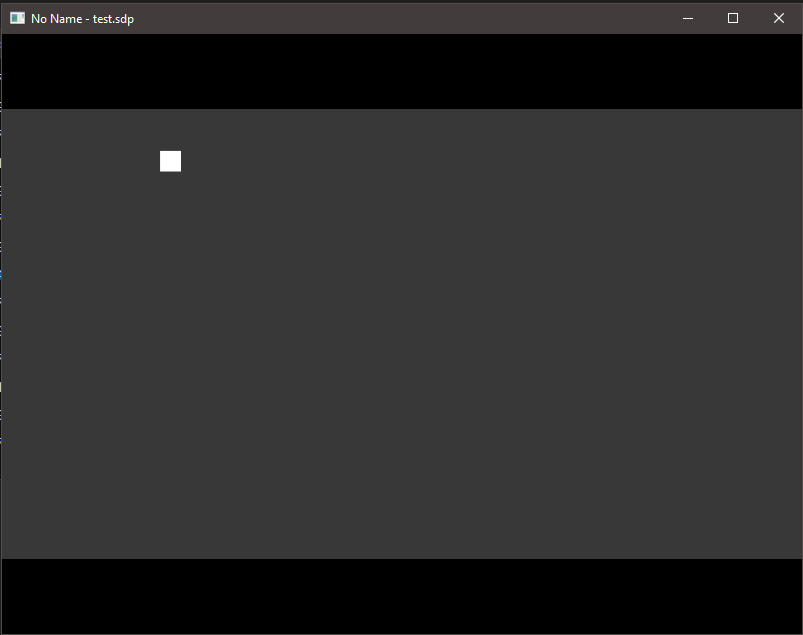

Output of Intel hardware MFT (compare with the Nvidia encoder's output):

Output of Nvidia hardware MFT:

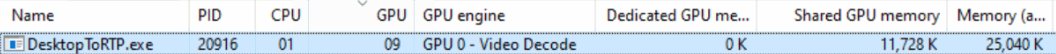

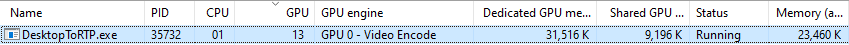

Intel graphics usage stats (I don't understand why the GPU engine is displayed as Video Decode):

ProcessInput. – Circumstantiality

TestHardwareMFT in your archive is a copy/derivative of the code from the Intel forum. As I mentioned a few days ago, it works on my Intel(R) UHD Graphics 630, and I don't see a reason why it shouldn't work with others. I just re-checked on an Intel® HD Graphics 615 tablet, and it works there too. I don't like how the code is styled, but it produces output, so it should be a good starting point for you. I don't have the capacity to check your bigger project and the code snippets posted here: it's too complicated and does not do a sufficient job of narrowing down the scope of the problem. – Circumstantiality